Ollama Just Got Blazing Fast on Macs: Full MLX Support Brings 2× Speedups and NVIDIA-Quality 4-Bit Inference

Ollama — the go-to open-source tool for running powerful LLMs locally — has officially switched to Apple’s MLX framework on Apple Silicon. The update (Ollama 0.19 preview) turns every Mac with sufficient unified memory into a significantly faster local AI machine.

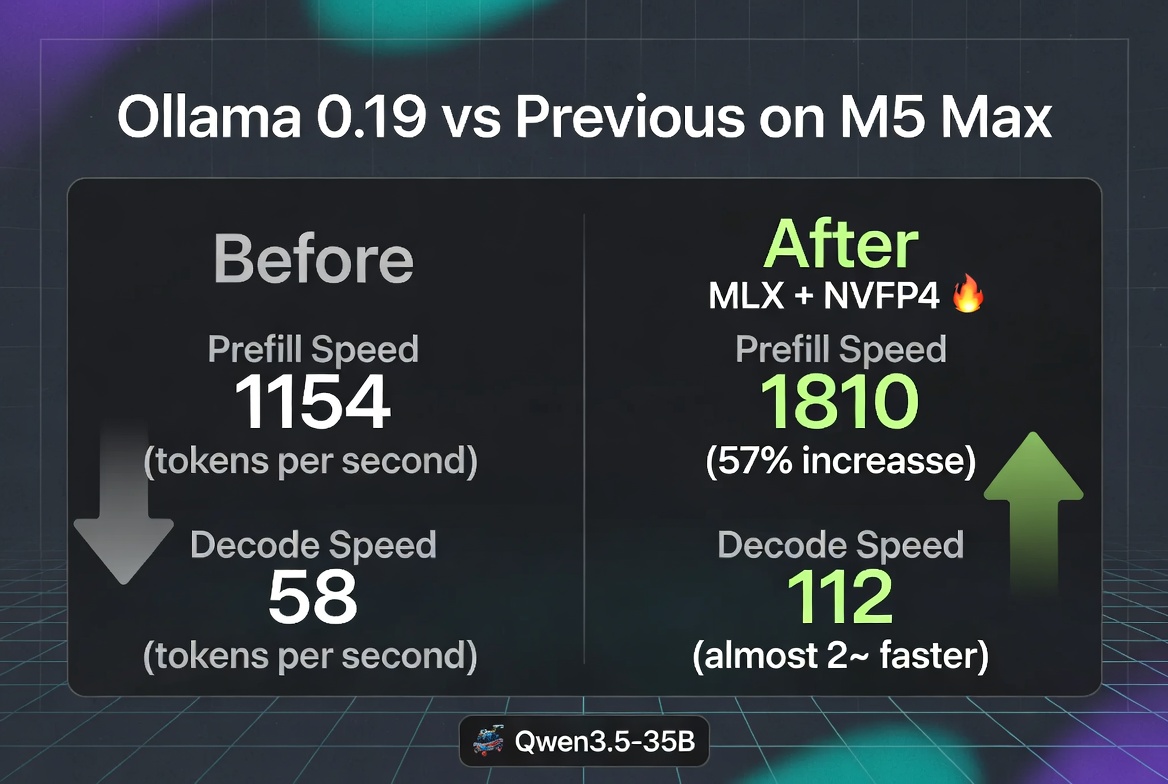

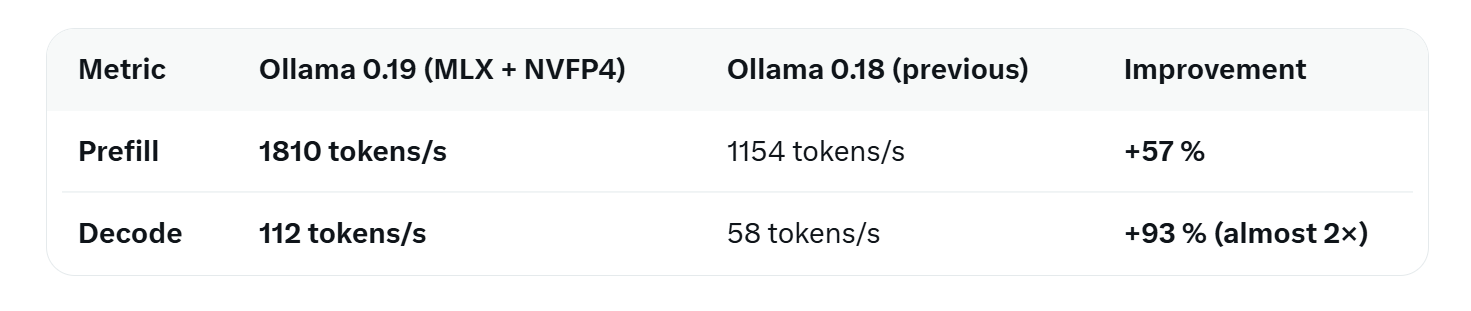

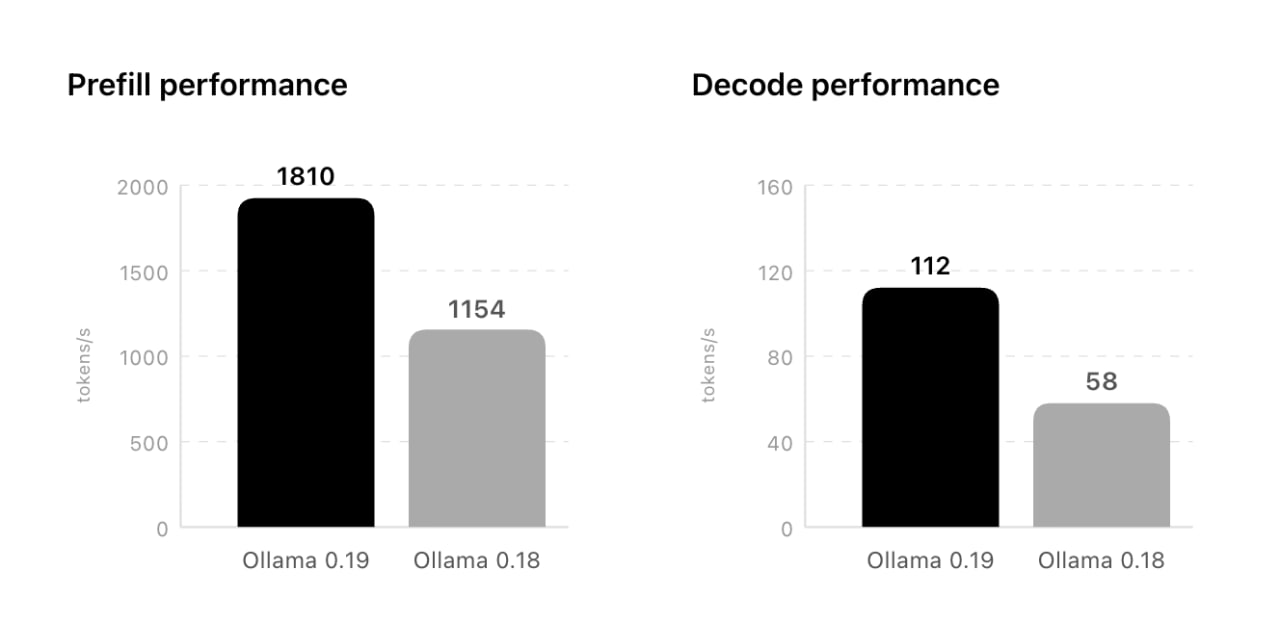

The results speak for themselves. On an M5 Max, the new Qwen3.5-35B-A3B model quantized with NVIDIA’s NVFP4 format decodes at 112 tokens per second.

Even higher numbers are possible with int4 quantization: up to 1851 tokens/s prefill and 134 tokens/s decode.

112 tokens per second on a 35-billion-parameter model is *fire*. That’s the kind of speed where a powerful coding agent can generate, review, and iterate on code changes faster than most developers can read them.
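If you want to check throughput on your own machine, here is a minimal sketch of the arithmetic. It assumes the timing fields that Ollama's REST API reports in its final response (`prompt_eval_count`, `prompt_eval_duration`, `eval_count`, `eval_duration`, with durations in nanoseconds). The helper names and the sample numbers are made up for illustration and are not measurements.

```python
def tokens_per_second(count: int, duration_ns: int) -> float:
    """Convert a token count and a duration in nanoseconds to tokens/s."""
    if duration_ns <= 0:
        return 0.0
    return count / (duration_ns / 1e9)


def summarize(response: dict) -> dict:
    """Compute prefill and decode throughput from an Ollama-style response dict."""
    return {
        "prefill_tps": tokens_per_second(
            response.get("prompt_eval_count", 0),
            response.get("prompt_eval_duration", 0),
        ),
        "decode_tps": tokens_per_second(
            response.get("eval_count", 0),
            response.get("eval_duration", 0),
        ),
    }


# Hypothetical response values, chosen only to make the arithmetic easy to follow.
sample = {
    "prompt_eval_count": 2000, "prompt_eval_duration": 2_000_000_000,  # 2 s
    "eval_count": 300, "eval_duration": 3_000_000_000,                 # 3 s
}
print(summarize(sample))
```

Prefill (prompt processing) and decode (token generation) are reported separately because they stress the hardware differently, which is why the article quotes two numbers.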

What Changed Under the Hood

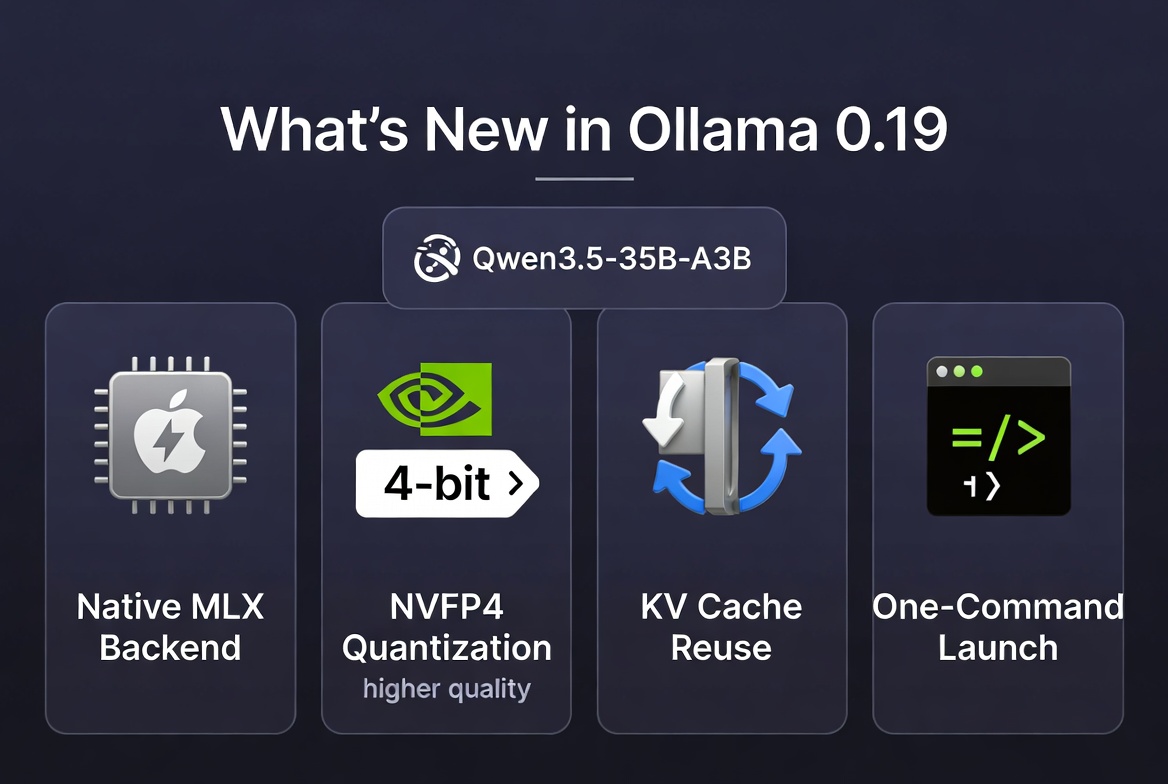

1. Built on Apple’s MLX Framework

Ollama is now built directly on top of Apple’s open-source MLX framework. It takes full advantage of the unified memory architecture and the new GPU Neural Accelerators on M5-series chips. Because the CPU and GPU share one memory pool, model weights no longer need to be copied between them.

2. NVIDIA NVFP4 Quantization

For the first time, Ollama supports NVIDIA’s NVFP4 format. This 4-bit quantization delivers noticeably higher response quality than traditional 4-bit methods while slashing memory usage and bandwidth. The model feels almost full-precision in practice.
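To give a feel for how a block-scaled 4-bit float format works, here is a simplified sketch. It assumes the publicly described NVFP4 layout: 4-bit E2M1 values that share one scale per block of 16. It is not Ollama's or NVIDIA's implementation. In particular, it keeps the scale as an ordinary Python float, while the real format stores it in FP8 and adds a per-tensor scale on top. The function names are hypothetical.

```python
# Magnitudes representable by a 4-bit E2M1 float (the sign is a separate bit).
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
BLOCK_SIZE = 16  # one shared scale per 16 consecutive values


def quantize_block(block):
    """Return (scale, codes); each code is a signed value from E2M1_GRID."""
    amax = max(abs(x) for x in block)
    # Map the largest magnitude in the block onto the top of the grid (6.0).
    # Simplification: the scale stays a full-precision float here.
    scale = amax / 6.0 if amax > 0 else 1.0
    codes = []
    for x in block:
        target = abs(x) / scale
        nearest = min(E2M1_GRID, key=lambda g: abs(g - target))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate values by multiplying the codes by the block scale."""
    return [c * scale for c in codes]


# 16 made-up weights that happen to sit exactly on the grid.
weights = [0.0, 0.5, -1.0, 1.5, 2.0, -3.0, 4.0, 6.0] * 2
assert len(weights) == BLOCK_SIZE
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
print(scale, restored == weights)
```

The per-block scale is the key idea: each group of 16 weights gets its own dynamic range, so one outlier only costs precision within its own block rather than across the whole tensor.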

3. Smart KV Cache Reuse

Cache snapshots are now intelligently stored and reused across conversations. Shared prompts (common in agentic workflows or coding sessions) hit the cache far more often, dramatically cutting down on repeated prompt processing.
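The idea behind prefix reuse can be shown with a toy example. This is a sketch under simplifying assumptions, not Ollama's actual cache: token IDs are plain integers, a "snapshot" is just the token list (a real KV cache stores attention tensors), and the `PrefixCache` class is hypothetical.

```python
def common_prefix_len(a, b):
    """Count how many leading tokens two sequences share."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


class PrefixCache:
    """Toy cache that remembers token sequences already processed."""

    def __init__(self):
        self._snapshots = []  # each entry is a list of token IDs

    def store(self, tokens):
        self._snapshots.append(list(tokens))

    def longest_match(self, tokens):
        """Number of leading tokens reusable from the best-matching snapshot."""
        return max(
            (common_prefix_len(s, tokens) for s in self._snapshots),
            default=0,
        )


# Two conversations that share the same (made-up) system prompt tokens.
system_prompt = [1, 2, 3, 4]
cache = PrefixCache()
cache.store(system_prompt + [10, 11])

new_request = system_prompt + [20, 21, 22]
reused = cache.longest_match(new_request)
to_process = len(new_request) - reused
print(reused, to_process)
```

Only the tokens after the shared prefix need prefill work, which is why long, repeated system prompts in agentic sessions benefit the most.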

Starting the right model for a specific task is now dead simple.

Example:

```bash
ollama launch claude --model qwen3.5:35b-a3b-coding-nvfp4
```

Currently Available

The spotlight is on Qwen3.5-35B-A3B (tagged `qwen3.5:35b-a3b-coding-nvfp4`), a sparse Mixture-of-Experts model heavily optimized for coding and agentic tasks. It requires a Mac with more than 32 GB of unified memory to run comfortably.
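A rough back-of-envelope estimate suggests why the memory bar sits where it does. The sketch below assumes a nominal 35 billion parameters, all stored at the same precision, and about 4.5 bits per weight for a 4-bit format with one 8-bit scale per 16 values. It ignores the KV cache, activations, any layers kept at higher precision, and everything else running on the machine, so treat the result as a lower bound rather than a spec.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NOMINAL_PARAMS = 35e9          # assumption: taken from the "35B" in the model name
FP16_BITS = 16
BLOCK_FP4_BITS = 4 + 8 / 16    # assumption: 4-bit value + 8-bit scale per 16 values

fp16_gb = weight_memory_gb(NOMINAL_PARAMS, FP16_BITS)
fp4_gb = weight_memory_gb(NOMINAL_PARAMS, BLOCK_FP4_BITS)
print(f"16-bit weights: ~{fp16_gb:.1f} GB")
print(f"4-bit weights:  ~{fp4_gb:.1f} GB")
```

Under these assumptions the 4-bit weights alone come to roughly 20 GB, which leaves little headroom on a 32 GB machine once the cache and the operating system take their share.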

More models and easier import of custom fine-tunes are already in the works.

Why This Matters

Whether you’re running Claude-style coding agents, OpenClaw, or your own fine-tuned models, the experience just jumped a generation ahead. And because it’s all local, private, and offline, you keep full control of your data.

Ready to try it?

Download Ollama 0.19 preview from ollama.com/download and fire up the new Qwen3.5 coding model.

Mac users just got one of the best local LLM upgrades of 2026.

The gap between “runs locally” and “feels like a cloud supercomputer” has never been smaller.