Google Just Released the Real-Life “Pied Piper” Algorithm — And the Memory Market Is Having a Meltdown

Remember the entire premise of the HBO show Silicon Valley? A scrappy startup called Pied Piper invents a revolutionary compression algorithm that shrinks files to a fraction of their size with zero quality loss. It was supposed to be the holy grail of data efficiency.

In 2026, it’s no longer fiction — except the breakthrough didn’t come from a garage startup. It came from Google.

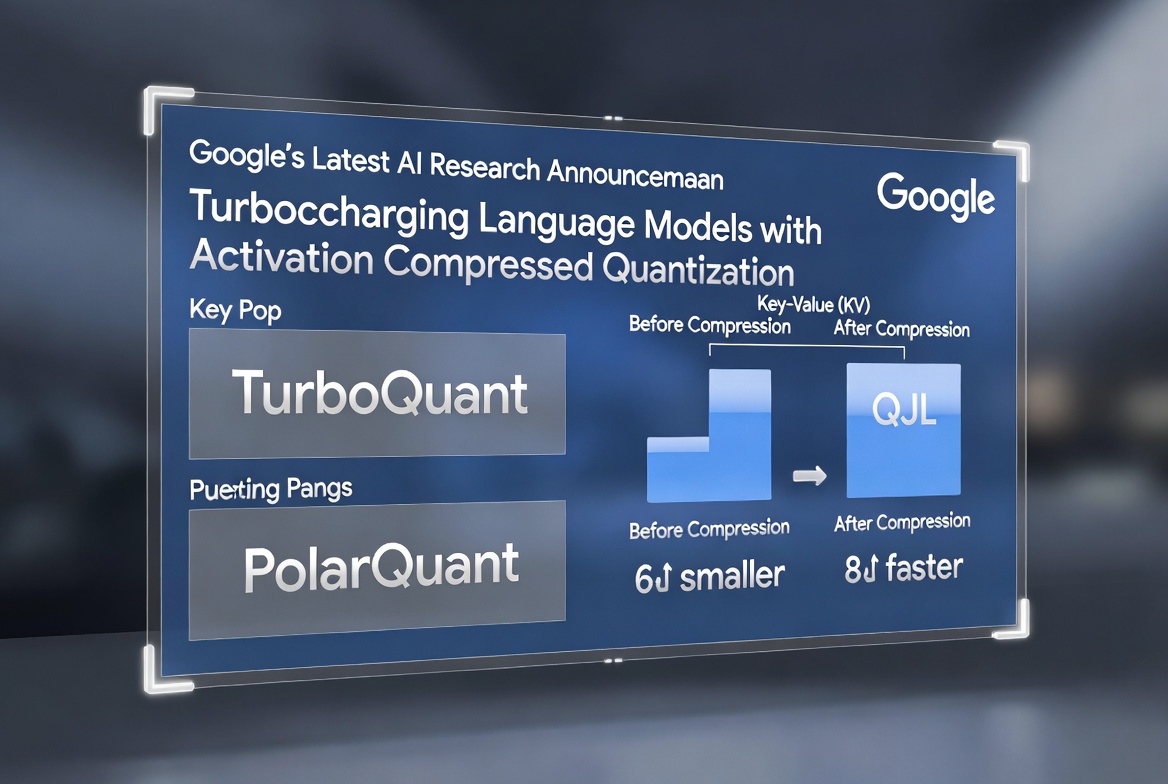

The reported results are absurdly good: at least 6× reduction in KV-cache memory usage, up to 8× faster attention computation on NVIDIA H100 GPUs, and, crucially, zero measurable accuracy loss. The internet immediately dubbed the technique, called TurboQuant, “Pied Piper for AI.”

The Market’s Instant Panic

Within hours of the announcement, memory-chip stocks cratered. Samsung and SK Hynix each dropped around 6% in a single session. Micron Technology fell even harder. The narrative on forums and trading desks was swift and brutal: “Memory is dead. AI just got Pied Piper’d. Long-term holders are cooked.”

It’s easy to see why people freaked out. KV-cache has been one of the biggest bottlenecks (and biggest memory hogs) in running large models at scale. If Google’s technique makes that memory requirement collapse by 6×, surely demand for HBM and DRAM is about to evaporate, right?

Not so fast.

Why the Long-Term Memory Bull Case Is Still Intact

Two structural reasons explain why this breakthrough is not the death knell for the memory market.

1. TurboQuant targets inference, not training.

Inference is when an already-trained model generates answers. The KV-cache stores the keys and values computed for earlier tokens so the model doesn’t have to recompute them for every new token. Once written, those entries don’t change, which makes the data relatively “static” and makes aggressive compression feasible.
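
To get a feel for why the KV-cache is such a memory hog, here is a rough back-of-the-envelope sketch. The model shape below is hypothetical (it is not any specific model, and not a figure from Google), and the 6× factor is simply the headline number quoted above applied as a flat divisor.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Bytes needed to cache keys and values for every layer, head, and token.

    bytes_per_value=2 assumes 16-bit storage (an assumption for this sketch).
    """
    # Factor of 2: one tensor for keys and one for values.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


GIB = 1024 ** 3

# Hypothetical serving scenario, chosen only for illustration.
baseline = kv_cache_bytes(
    num_layers=32, num_kv_heads=8, head_dim=128,
    seq_len=32_768, batch_size=16,
)
compressed = baseline / 6  # the article's "at least 6x" claim, taken at face value

print(f"baseline KV-cache:   {baseline / GIB:.1f} GiB")    # 64.0 GiB
print(f"after 6x reduction:  {compressed / GIB:.1f} GiB")  # 10.7 GiB
```

Note that the cache grows linearly with both context length and the number of concurrent users, which is why it dominates memory in long-context serving even when the model weights themselves fit comfortably.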

Training is an entirely different beast. During pre-training and fine-tuning, the model is constantly updating billions of weights and gradients. Those values are dynamic, noisy, and extremely sensitive. Applying the same kind of aggressive compression there would risk breaking convergence, because quantization is inherently lossy even when the accuracy hit at inference time is too small to measure. The vast majority of today’s memory demand, especially the ultra-high-bandwidth HBM that powers the biggest training clusters, is still driven by training, not inference. TurboQuant doesn’t touch that.
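
A tiny sketch makes the "compression has a cost" point concrete. This is a generic uniform quantizer written for illustration, not TurboQuant's actual method (which this article does not describe), and the sample values are made up. It rounds each number to one of 8 levels (3 bits) and then reconstructs it.

```python
def quantize(values, bits):
    """Map floats onto 2**bits evenly spaced levels between min and max."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from integer codes."""
    return [lo + c * scale for c in codes]


# Made-up sample values standing in for a slice of cached activations.
values = [0.013, -0.402, 0.250, 0.991, -0.777, 0.108]

codes, lo, scale = quantize(values, bits=3)
restored = dequantize(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(values, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4), "(bounded by scale / 2 =", round(scale / 2, 4), ")")
```

The reconstruction is close but not exact. A read-only cache can often tolerate that kind of small, one-time rounding error; values that are read, updated, and written back on every training step are a much harder target, which is the distinction the paragraph above is drawing.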

2. Jevons Paradox is about to kick in — hard.

In the 1860s, British economist William Stanley Jevons noticed something counterintuitive about coal: when James Watt’s steam engine made coal use far more efficient than the old Newcomen engine, total coal consumption didn’t fall. It exploded.

Why? Because cheaper, more efficient engines made steam power economically viable for thousands of new factories, ships, and industries that couldn’t afford it before. Efficiency didn’t reduce demand — it supercharged it.

The same pattern has shown up again and again:

- Cheaper automation created more jobs overall, not fewer.

- Dirt-cheap internet didn’t reduce data usage; it put the internet in every light bulb, fridge, and doorbell, driving compute demand through the roof.

TurboQuant does exactly the same thing for AI inference. Suddenly, longer contexts, bigger models, and far more concurrent users become dramatically cheaper to serve. Companies that previously couldn’t afford to run large-context LLMs at scale will now deploy them everywhere. The total volume of inference compute — and therefore the total memory required across the ecosystem — is likely to increase, not decrease.
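
The arithmetic behind that claim is simple enough to sketch. The toy model below assumes total KV-cache memory is just (amount of inference served) × (memory per unit of inference), ignores model weights and everything else, and uses made-up growth multiples. It is an illustration of the break-even point, not a forecast.

```python
def total_memory_change(compression_ratio, demand_growth):
    """New total memory demand as a multiple of the old total.

    Toy model: total = demand x memory_per_unit, so shrinking memory per
    unit by compression_ratio and growing demand by demand_growth gives
    demand_growth / compression_ratio.
    """
    return demand_growth / compression_ratio


COMPRESSION = 6  # the article's headline 6x reduction

# Hypothetical demand-growth scenarios, for illustration only.
for growth in (2, 6, 10, 20):
    change = total_memory_change(COMPRESSION, growth)
    if change > 1:
        verdict = "more memory than before"
    elif change < 1:
        verdict = "less memory than before"
    else:
        verdict = "break-even"
    print(f"inference demand x{growth:<2} -> total memory x{change:.2f} ({verdict})")
```

In this simplified picture, the bear case only holds if inference demand grows by less than 6×. Anything beyond that and the efficiency gain is swallowed by new usage, which is the Jevons argument in one line of division.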

Short-term efficiency gains almost always lose to long-term demand explosion in tech.

The Bigger Strategic Question: What Google Does Next

If this were Meta, OpenAI, or Anthropic, you could safely bet they’d keep the technique proprietary to maintain a cost moat. But this is Google. Google has a long, consistent history of publishing fundamental research and often open-sourcing it (see: the Transformer architecture, BERT, the TPU papers, etc.).

If TurboQuant (or large parts of it) ends up in the public domain, it becomes a rising tide that lifts every LLM boat. Suddenly every developer, every startup, and every cloud provider can run dramatically more efficient inference. The playing field tilts toward whoever can best productize the gains rather than whoever hoards the secret sauce.

That’s the real “Pied Piper” moment. Not that one company invented compression — but that the compression just became democratized at the exact moment when inference demand is about to go parabolic.

The memory-chip makers will have a bumpy few quarters. Some investors who piled into the AI memory trade purely on the “infinite demand” thesis will get hurt. But the broader secular tailwind for high-performance memory remains very much alive.

Google didn’t kill the memory market.

It just made AI so much cheaper that we’re all going to need a lot more of it.