The AI Compute Chokepoint: Welcome to the Strait of Hormuz for Silicon and Power

Most businesses that already depend on the largest LLM providers, and will depend on them even more in the coming years, do not fully grasp the underlying dynamics of the compute market. We are living in the final years, and perhaps even the final months, of an era in which compute is not yet the primary constraint on the global economy. The supply of advanced compute is akin to the Strait of Hormuz for oil, except that this chokepoint runs through roughly three advanced semiconductor fabrication facilities in the world.

The Three-Way Compute Dilemma for AI Labs

Every AI lab has to split a finite pool of compute among three competing uses:

- Inference: Deploying models to serve paying customers and generate immediate revenue.

- Training and RL (reinforcement learning): Continuously improving or scaling existing models through pre-training and post-training to avoid falling behind competitors.

- Research into new architectures: Investing in fundamental breakthroughs that could determine long-term survival, rather than incremental gains on today's transformer-based paradigms.

Spending heavily on inference today brings cash flow, but risks technological obsolescence. Pouring resources into training keeps you competitive in the short-to-medium term, yet it may starve the risky, high-upside research needed to leapfrog the field. Neglecting any one of these paths can be fatal in an industry moving at breakneck speed.

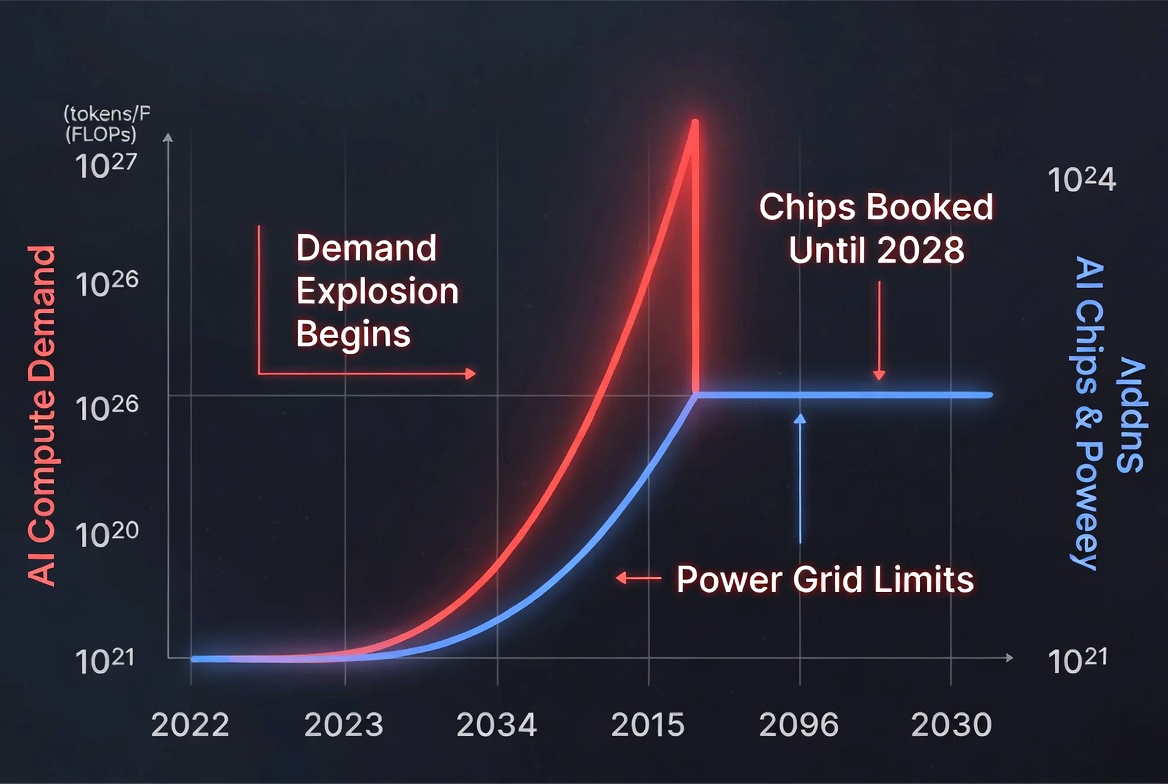

Explosive Demand Growth Is Just Beginning

Enterprises are already integrating LLMs into core workflows — customer service, code generation, data analysis, and increasingly agentic systems. As these become mission-critical and scale to millions of interactions daily, the token bill compounds rapidly. Early adopters are seeing this shift; the broader market has not yet internalized how quickly it will accelerate.

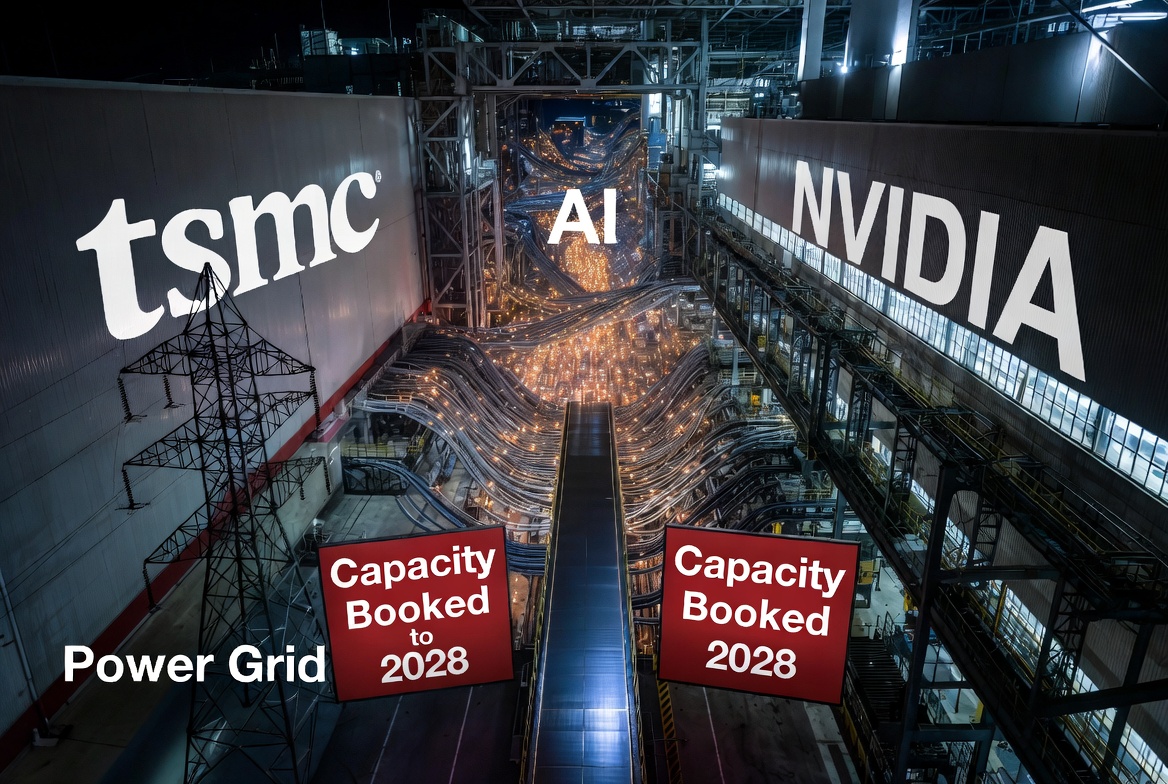

Supply Constraints: Chips and Power

Today, virtually every major manufacturer of advanced chips, high-bandwidth memory (HBM), and related components has its production capacity fully booked with orders stretching into 2028 and beyond. New fabs and manufacturing capacity cannot be built overnight; they require years of planning, massive capital expenditure, specialized equipment (much of it also supply-constrained), and skilled labor. TSMC, the dominant player in advanced semiconductor manufacturing, faces intense pressure from AI-driven demand, with order backlogs pushing lead times deep into the future.

Power is the second critical bottleneck, and it may prove even harder to scale. Data centers already consume a growing share of global and U.S. electricity. Projections show U.S. data center power demand potentially reaching 74 GW or more by 2028, with significant shortfalls in grid capacity. Globally, data center electricity use could double or more by 2030, driven overwhelmingly by AI workloads. Building new power plants, upgrading grids, and securing permits all take time that the market simply does not have.

The result is a classic supply-demand imbalance: demand is exploding while supply expands linearly at best, constrained by long-lead-time capital projects.
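
To make the imbalance concrete, here is a minimal Python sketch of compounding demand against supply that grows by a fixed increment each year. Every number and name in it (the starting levels, the 40% growth rate, the 15 units added per year, the function `first_shortfall_year`) is a hypothetical chosen for illustration, not a forecast or a figure from any provider.

```python
def first_shortfall_year(demand0, demand_growth, supply0, supply_added_per_year, horizon=15):
    """Return the first year (0-based) in which demand exceeds supply, or None.

    Demand compounds at `demand_growth` per year; supply grows by a fixed
    increment per year. All inputs are illustrative assumptions.
    """
    for year in range(horizon + 1):
        demand = demand0 * (1 + demand_growth) ** year
        supply = supply0 + supply_added_per_year * year
        if demand > supply:
            return year
    return None


# Hypothetical scenario: demand starts well below supply (60 vs. 100 units)
# but grows 40% per year, while supply adds a flat 15 units per year.
print(first_shortfall_year(60, 0.40, 100, 15))
```

Even with a comfortable starting surplus, the compounding curve overtakes the straight line within a few years under these assumptions; changing the inputs moves the crossover but not the shape of the problem.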

The Hormuz Analogy for AI Compute

Most companies today view AI costs through the lens of software-as-a-service subscriptions or per-token pricing. They do not yet see the underlying hardware scarcity that will increasingly dictate pricing, availability, and even which models can be served at scale. When compute becomes the binding constraint, those who secured capacity early (or built alternative strategies) will hold a decisive advantage.

Strategic Implications for Businesses

- Diversify dependencies — Relying exclusively on one or two frontier LLM providers is risky when their own compute allocation decisions can shift overnight.

- Model the true economics — Factor in not just today's token costs, but the likelihood of rising prices or rationing as demand outstrips supply. Token budgets may soon rival or exceed human salary budgets for certain functions.

- Invest in efficiency and alternatives — Techniques like model distillation, quantization, smaller specialized models, and on-premises or hybrid deployments can reduce exposure. Exploration of non-transformer architectures or open-source options may gain strategic value.

- Think long-term on infrastructure — Partnerships with cloud providers, direct hardware deals, or even power-generation co-investments could become competitive necessities rather than luxuries.
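
As a rough illustration of modeling the true economics, the sketch below projects an annual token bill under assumed volume growth and price changes, then compares it with a salary budget. The request volume, tokens per request, price per million tokens, growth rates, and salary figure are all hypothetical placeholders, and the function names are invented for this example; substitute your own numbers.

```python
def annual_token_cost(requests_per_day, tokens_per_request, price_per_million_tokens, days=365):
    """Yearly spend for a workload at a flat per-token price."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens


def project_costs(base_cost, volume_growth, price_change, years):
    """Yearly costs when both usage volume and unit price change each year."""
    factor = (1 + volume_growth) * (1 + price_change)
    return [base_cost * factor ** year for year in range(years + 1)]


def first_year_exceeding(costs, budget):
    """Index of the first projected year whose cost exceeds `budget`, or None."""
    for year, cost in enumerate(costs):
        if cost > budget:
            return year
    return None


# Hypothetical workload: 50,000 requests/day, 4,000 tokens each, $10 per million tokens.
base = annual_token_cost(50_000, 4_000, 10.0)

# Assumed scenario: usage grows 50% per year and unit prices rise 10% per year.
costs = project_costs(base, volume_growth=0.5, price_change=0.1, years=3)

# Hypothetical salary budget for the same function: $1.5M per year.
print(first_year_exceeding(costs, 1_500_000))
```

The point of the exercise is the sensitivity, not the specific result: run the projection with flat, falling, and rising unit prices to see how much of the plan depends on prices continuing to decline.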

The AI boom is real and transformative, but it rests on a physical foundation of silicon and electrons that cannot scale infinitely or instantly. The next few years will separate organizations that treat compute as an abundant commodity from those that recognize it as the new strategic chokepoint.

We are not yet in the world where compute fully limits economic growth — but we are approaching it rapidly. The businesses that understand the dynamics of this market today will be the ones positioned to thrive when scarcity truly bites. The window for complacency is closing.