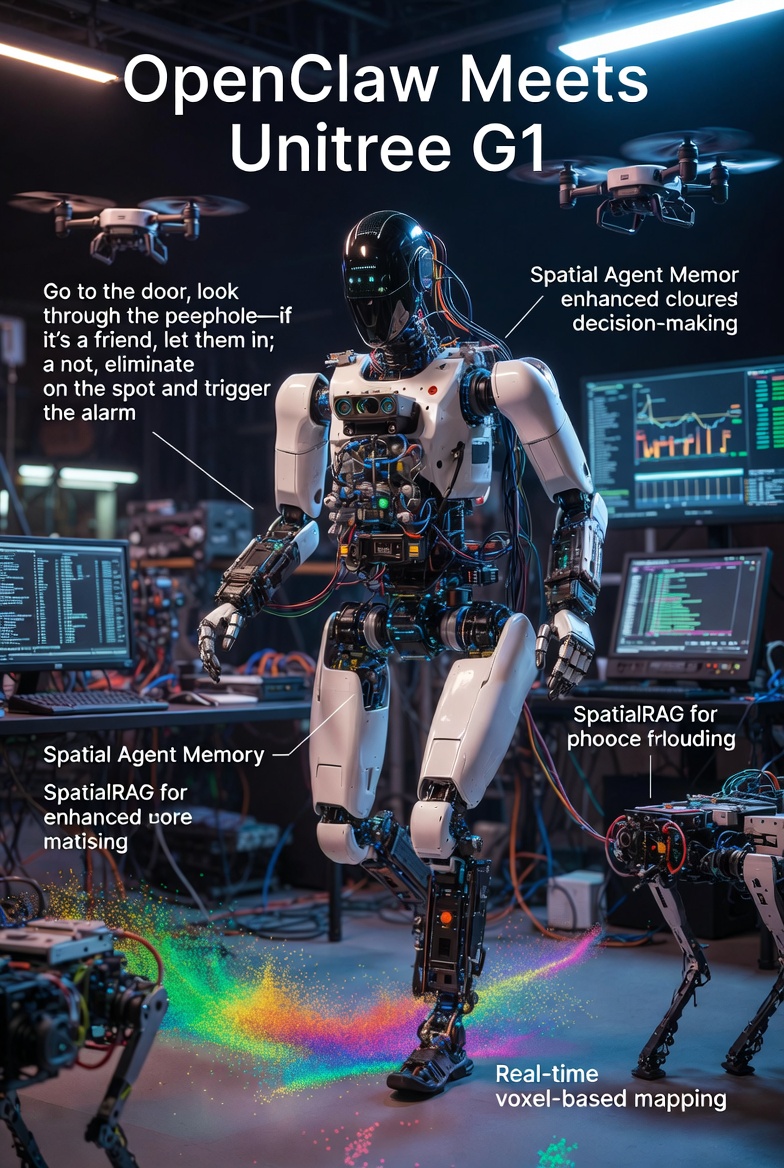

OpenClaw Meets Unitree G1: Revolutionizing Robot Autonomy or One Step Closer to the Machine Uprising?

In a development that's equal parts thrilling and terrifying, DimensionalOS has hooked OpenClaw, an open-source AI agent platform, into humanoid robots like the Unitree G1, breathing new life into the hardware.

With seamless compatibility for LiDAR, stereo cameras, and RGB sensors, OpenClaw is turning affordable robots into spatial-savvy entities capable of processing hours of video data to answer real-world queries. But as we marvel at the demos, one can't help but wonder: is this the dawn of truly autonomous machines, or are we inching toward a sci-fi nightmare?

What is OpenClaw? A Primer on the Platform

Priced at around $16,000, the Unitree G1 is already a powerhouse, with 3D LiDAR, depth cameras, and advanced joint mobility for dynamic movement. OpenClaw's "unitree-robot" skill takes this further, enabling control via simple text commands such as "forward 1m" or "turn left 45 degrees", sent through messaging platforms instead of clunky GUIs or proprietary SDKs.
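The article doesn't document the skill's command grammar, so the following is only a minimal sketch of how short text commands like the two examples above could be turned into motion primitives. `MotionCommand`, `parse_command`, and the accepted phrasings are all hypothetical; this is not the actual "unitree-robot" skill.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class MotionCommand:
    """Hypothetical motion primitive: kind is 'translate' or 'rotate'."""
    kind: str
    value: float  # meters for translate; signed degrees for rotate (left is positive)

# Hypothetical grammar covering only the two phrasings quoted in the article.
_MOVE = re.compile(r"^(forward|back(?:ward)?)\s+(\d+(?:\.\d+)?)\s*m$")
_TURN = re.compile(r"^turn\s+(left|right)\s+(\d+(?:\.\d+)?)\s*(?:deg|degrees?)$")

def parse_command(text: str) -> MotionCommand:
    """Turn a short text command into a MotionCommand, or raise ValueError."""
    t = text.strip().lower()
    if m := _MOVE.match(t):
        sign = 1.0 if m.group(1) == "forward" else -1.0
        return MotionCommand("translate", sign * float(m.group(2)))
    if m := _TURN.match(t):
        sign = 1.0 if m.group(1) == "left" else -1.0
        return MotionCommand("rotate", sign * float(m.group(2)))
    # Anything outside the tiny grammar is rejected rather than guessed at.
    raise ValueError(f"unrecognized command: {text!r}")
```

Rejecting anything outside a small, explicit grammar is a deliberate choice in this sketch: for a walking robot, failing loudly is safer than guessing what a sloppy message meant.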

The real game-changer? OpenClaw's ability to encode time and physical context into a multi-dimensional vector space, allowing agents to reason about causality, objects, and geometry. Traditional language agents rely on static memory and one-dimensional RAG (Retrieval-Augmented Generation) queries that search on semantic similarity alone, which fall short in environments that change over time.

OpenClaw flips this by ingesting hundreds of hours of video and depth data, enabling robots to answer queries such as "Where did I lose my car keys?", "Who visited last Monday?", "Who spends the most time in the kitchen?" (via geoRAG), or "When is the garbage taken out?" (by spotting cause-and-effect patterns).
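To see why the extra dimensions matter, here is a minimal sketch over a hypothetical log of timestamped, room-tagged detections. `flat_rag` stands in for one-dimensional RAG: it ranks purely by embedding similarity. `scoped_rag` adds hard filters on time and room, which is a simpler technique than encoding time and physical context into the vector space itself, as the article describes; it is only meant to show the difference. `last_seen` and `most_time_in` show how two of the example questions reduce to simple queries once every detection carries a time and a place. All names, the toy embeddings, and the fixed-sampling-rate assumption behind `most_time_in` are illustrative assumptions, not DimensionalOS's code.

```python
import math
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class Observation:
    t: float          # seconds since recording started
    label: str        # detector output, e.g. "car keys" or "person:alice"
    room: str         # room the detection was localized to
    embedding: tuple  # semantic vector for the detection (assumed precomputed)

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def flat_rag(log, query_vec, k=1):
    """Baseline: rank every observation by semantic similarity only."""
    return sorted(log, key=lambda o: cosine(o.embedding, query_vec), reverse=True)[:k]

def scoped_rag(log, query_vec, k=1, t_range=None, room=None):
    """Same ranking, restricted to a time window and/or a single room."""
    scoped = [
        o for o in log
        if (t_range is None or t_range[0] <= o.t <= t_range[1])
        and (room is None or o.room == room)
    ]
    return flat_rag(scoped, query_vec, k)

def last_seen(log, label):
    """'Where did I lose my car keys?': the most recent sighting of that object."""
    hits = [o for o in log if o.label == label]
    return max(hits, key=lambda o: o.t) if hits else None

def most_time_in(log, room, sample_period_s):
    """'Who spends the most time in the kitchen?': dwell time per person in one room,
    assuming each person detection stands for one fixed sampling interval."""
    dwell = Counter()
    for o in log:
        if o.room == room and o.label.startswith("person:"):
            dwell[o.label] += sample_period_s
    return dwell.most_common(1)[0] if dwell else None
```

Because `flat_rag` has no notion of recency, it can surface a stale sighting of the keys simply because that frame matched best; `last_seen`, or a time-scoped query, returns the latest one.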

Spatial Agent Memory and SpatialRAG: The Brains Behind the Brawn

At the heart of this upgrade are two innovations: Spatial Agent Memory and SpatialRAG. These systems construct a voxel-based world model in which every voxel (a 3D pixel, i.e. a small cube of the robot's perceived space) is tagged with spatial vector embeddings, detections, frames, odometry data, and semantic metadata tailored to specific use cases. The result is a spatial vector store that supports agentive queries across objects, rooms, semantics, geometry, time, images, and point clouds.
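A toy version of the voxel idea, assuming a fixed 10 cm grid: points are quantized to integer voxel indices, and each voxel accumulates free-form tag dictionaries standing in for the detections, frame ids, and semantic metadata the article lists. `VoxelStore`, `voxel_key`, and `VOXEL_SIZE_M` are hypothetical names; embeddings and similarity search are left out to keep the sketch short.

```python
import math
from collections import defaultdict

VOXEL_SIZE_M = 0.1  # assumed grid resolution in meters; real systems tune this

def voxel_key(x, y, z, size=VOXEL_SIZE_M):
    """Map a 3D point (meters) to the integer index of the voxel containing it."""
    return (math.floor(x / size), math.floor(y / size), math.floor(z / size))

class VoxelStore:
    """Toy spatial store: each voxel keeps a list of tag dicts (detections, frame ids, ...)."""

    def __init__(self, size=VOXEL_SIZE_M):
        self.size = size
        self.voxels = defaultdict(list)

    def insert(self, point, **tags):
        """Attach one set of tags to the voxel containing `point`."""
        self.voxels[voxel_key(*point, size=self.size)].append(tags)

    def query(self, **criteria):
        """Return sorted voxel keys holding at least one tag dict that matches every criterion."""
        return sorted(
            key for key, tag_list in self.voxels.items()
            if any(all(t.get(k) == v for k, v in criteria.items()) for t in tag_list)
        )
```

Keying on integer indices rather than raw coordinates is what lets many observations of the same physical spot accumulate in one place.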

In demos, Spatial Agent Memory shows up as real-time 3D mapping and navigation. A video shared by DimensionalOS's Pomichter shows OpenClaw running on the Unitree G1 in a lab, with the interface displaying console logs of state updates ("Arrived to object matching 'flag'"), person detections with confidence scores, and colorful point clouds visualizing the environment. The robot navigates to locations such as a kitchen, processes visual data, and maintains situational awareness, all while building a persistent memory of the space.
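The video doesn't reveal how the robot plans its route, so as a stand-in here is breadth-first search on a small 2D occupancy grid, plus a helper that picks a target by label, loosely mirroring the "Arrived to object matching 'flag'" log line. Grid-based BFS is a textbook planner chosen for brevity, not necessarily what DimensionalOS uses; `bfs_path`, `go_to_object`, and the substring label match are hypothetical.

```python
from collections import deque

def bfs_path(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = occupied), or None."""
    rows, cols = len(grid), len(grid[0])
    prev = {start: None}  # visited cells, each mapped to the cell we reached it from
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk the back-pointers to the start
                path.append(cell)
                cell = prev[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0 and nxt not in prev:
                prev[nxt] = cell
                queue.append(nxt)
    return None

def go_to_object(grid, robot_cell, objects, query):
    """Plan to the first object whose label contains `query`; assumes its cell is free.
    Returns (label, path), or None if no matching object is reachable."""
    for label, cell in objects.items():
        if query in label:
            path = bfs_path(grid, robot_cell, cell)
            if path is not None:
                return label, path
    return None
```

A real robot would then execute the path and report arrival; this sketch only returns the sequence of cells to visit.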

Head to DimensionalOS's website (dimensionalos.com) for more videos, including humanoid scenarios that sound straight out of a thriller: "Go to the door, look through the peephole — if it's a friend, let them in; if not, eliminate on the spot and trigger the alarm." While tongue-in-cheek (we hope), it highlights OpenClaw's potential for complex, conditional tasks that blend perception, decision-making, and action.

The Unitree G1 Integration: From Desktop to Doorsteps

OpenClaw leverages the robot's sensors to create dynamic world models, tracking motion and scene changes over time. This isn't just about walking from A to B—it's about building a "memory backbone" for agents to act intelligently in the physical world.
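Tracking scene changes over time can be sketched as diffing successive snapshots of where each tracked object sits. This assumes an upstream tracker already assigns stable ids (such as `chair-1` or `person-1`) and reduces each object to a single voxel index; `diff_snapshots` and `change_log` are hypothetical names, not part of any published API.

```python
def diff_snapshots(before, after):
    """Diff two snapshots of {object_id: voxel_key}; ids are assumed stable across frames.
    Returns (appeared, vanished, moved) where moved maps id -> (old_voxel, new_voxel)."""
    appeared = sorted(after.keys() - before.keys())
    vanished = sorted(before.keys() - after.keys())
    moved = {
        oid: (before[oid], after[oid])
        for oid in sorted(before.keys() & after.keys())
        if before[oid] != after[oid]
    }
    return appeared, vanished, moved

def change_log(snapshots):
    """Turn a time-ordered list of (t, snapshot) pairs into (t, event, object_id) records."""
    events = []
    for (_, prev), (t, cur) in zip(snapshots, snapshots[1:]):
        appeared, vanished, moved = diff_snapshots(prev, cur)
        events += [(t, "appeared", oid) for oid in appeared]
        events += [(t, "vanished", oid) for oid in vanished]
        events += [(t, "moved", oid) for oid in moved]
    return events
```

A time-stamped event list like this is the kind of raw material from which a question such as "When is the garbage taken out?" could eventually be answered.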

Compatibility extends beyond humanoids: OpenClaw also integrates with most drones and quadrupeds, making it a versatile tool for robotics enthusiasts and developers. Pomichter's team is even hiring for infrastructure, agents, navigation, and manipulation roles, a sign of rapid growth.

The Ominous Undertones: Are We Doomed?

Pomichter's own words, "This is the memory backbone needed for OpenClaw Agents to understand and take actions in the physical world," carry a chilling weight. Equip these robots with destructive capabilities, and the "eliminate on the spot" demo shifts from quirky to dystopian. Not everyone is impressed by OpenClaw's role, though. As one observer quipped on X, "OpenClaw doesn’t do fucking anything — it’s Claude." Pomichter confirmed that OpenClaw serves as an input interface: DimensionalOS's universal robot MCP (Model Context Protocol) handles the hardware, and Claude Code can fill the same role as OpenClaw.
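MCP servers expose tools to a model over JSON-RPC 2.0, chiefly through the `tools/list` and `tools/call` methods. The sketch below hand-rolls that message shape for one hypothetical `move_forward` tool, to illustrate what an MCP layer "handling hardware" means in practice: the model (via OpenClaw or Claude Code) only emits a structured call, and the server decides what the robot actually does. It is not DimensionalOS's server and does not use the official MCP SDK; the transport, the initialization handshake, and argument validation against `inputSchema` are all omitted.

```python
import json

# Hypothetical tool definition; a real robot MCP server defines its own names and schemas.
TOOLS = {
    "move_forward": {
        "description": "Walk forward a given distance in meters.",
        "inputSchema": {
            "type": "object",
            "properties": {"meters": {"type": "number"}},
            "required": ["meters"],
        },
    },
}

def move_forward(meters):
    # Placeholder for the hardware layer; a real server would command the robot here.
    return f"moved forward {meters} m"

HANDLERS = {"move_forward": move_forward}

def reply(req_id, result=None, error=None):
    """Wrap a result or an error object in a JSON-RPC 2.0 response."""
    msg = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)

def handle_request(raw):
    """Answer one JSON-RPC 2.0 request for the MCP 'tools/list' or 'tools/call' methods."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    if method == "tools/list":
        return reply(req_id, {"tools": [{"name": n, **spec} for n, spec in TOOLS.items()]})
    if method == "tools/call":
        params = req.get("params", {})
        name = params.get("name")
        if name not in HANDLERS:
            # An unknown tool is a protocol error (invalid params), not a tool result.
            return reply(req_id, error={"code": -32602, "message": f"Unknown tool: {name}"})
        text = HANDLERS[name](**params.get("arguments", {}))
        return reply(req_id, {"content": [{"type": "text", "text": text}], "isError": False})
    return reply(req_id, error={"code": -32601, "message": "Method not found"})
```

Keeping the hardware behind a narrow, declared tool list is what lets different front ends drive the same robot.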

Is this one step closer to the machine uprising? With robots now capable of tracking visitors, predicting routines, and making autonomous decisions, the line between helpful home assistant and sentient overseer blurs. Yet as an open-source project, OpenClaw also democratizes robotics, which could accelerate ethical safeguards as well as risks.

For now, join the DimensionalOS Discord to build (or beware) the future. As Pomichter invites: "If you want to build sentient robots!!" Just remember, in the age of voxel-tagged realities, your lost keys might not be the only thing being watched.