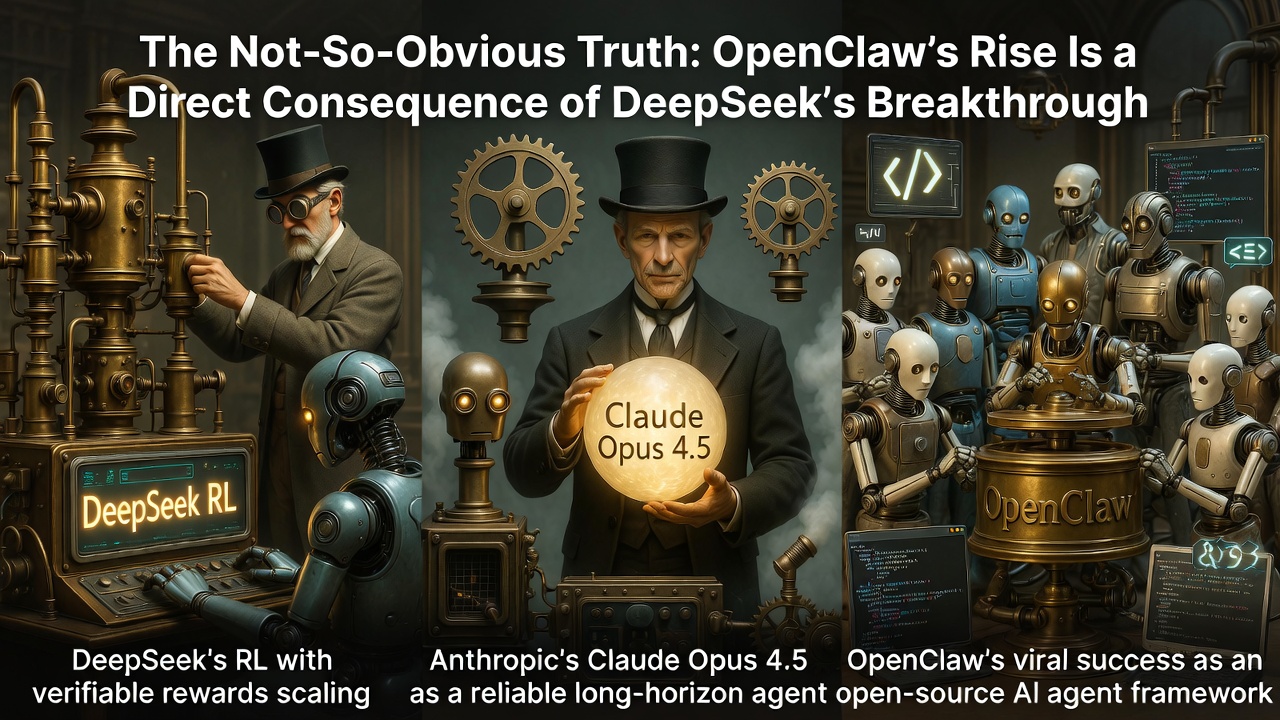

The Not-So-Obvious Truth: OpenClaw's Rise Is a Direct Consequence of DeepSeek's Breakthrough

Not everyone sees it yet, but the explosive popularity of OpenClaw — the open-source, local-first AI agent framework that went viral in early 2026 (formerly Clawdbot/Moltbot) — is no accident. It is the direct downstream result of DeepSeek's pioneering work on scaling reinforcement learning (RL) with verifiable rewards, a paradigm shift that began in 2024–2025 and quietly changed the trajectory of frontier AI capabilities.

Here's the chain of causation, step by step.

Step 1: DeepSeek Proves RL with Verifiable Rewards Scales

Unlike traditional RLHF, which relies on expensive human preferences and learned reward models, verifiable rewards come from deterministic checkers — unit tests for code, math solvers, format validators — making the signal clean, cheap, and scalable.
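To make "deterministic checker" concrete, here is a minimal sketch of the three verifier types mentioned above. The function names, the `<answer>` tag convention, and the test-case format are illustrative assumptions, not taken from any DeepSeek codebase, and a real system would run untrusted code in a sandbox rather than with a bare `exec`.

```python
import re

def format_reward(completion: str) -> float:
    """Format validator: 1.0 if exactly one <answer>...</answer> block is present."""
    blocks = re.findall(r"<answer>.*?</answer>", completion, flags=re.DOTALL)
    return 1.0 if len(blocks) == 1 else 0.0

def math_reward(completion: str, expected: str) -> float:
    """Math checker: compare the extracted answer string to a known ground truth."""
    match = re.search(r"<answer>(.*?)</answer>", completion, flags=re.DOTALL)
    if match is None:
        return 0.0
    return 1.0 if match.group(1).strip() == expected.strip() else 0.0

def code_reward(source: str, func_name: str, cases) -> float:
    """Unit-test checker: fraction of (args, expected) cases the generated code passes."""
    namespace = {}
    try:
        exec(source, namespace)  # assumption: trusted toy input; sandbox this in practice
        func = namespace[func_name]
    except Exception:
        return 0.0
    passed = 0
    for args, expected in cases:
        try:
            if func(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crashing test case simply counts as a failure
    return passed / len(cases)

cases = [((1, 2), 3), ((0, 0), 0)]
good = code_reward("def add(a, b):\n    return a + b", "add", cases)
buggy = code_reward("def add(a, b):\n    return a - b", "add", cases)
```

No human rater or learned reward model appears anywhere in the loop: the same completion always gets the same score, which is what makes the signal cheap to produce at scale.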

DeepSeek showed that applying RL (with algorithms such as Group Relative Policy Optimization, GRPO) in these environments unlocks emergent reasoning behaviors that pre-training alone does not reach as efficiently.
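The core trick in GRPO is easy to sketch: sample several completions for the same prompt, score each one, and use the group's own mean and standard deviation as the baseline instead of a learned value model. The snippet below is a simplified illustration of that normalization step only; the function name, the use of population standard deviation, and the `eps` constant are assumptions made for the example.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each rollout's reward against its own sampling group.

    rewards: scalar rewards for G completions of the SAME prompt.
    Returns one advantage per completion: (r - group mean) / (group std + eps).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; eps avoids division by zero
    return [(r - mu) / (sigma + eps) for r in rewards]

# Four rollouts for one prompt, two passed the verifier and two failed.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Completions that beat their siblings get a positive advantage and the rest get a negative one, so the policy is pushed toward whatever the verifier rewarded without training a separate critic.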

OpenAI's o1 series (late 2024) hinted at similar ideas by combining long chains of thought with RL, but OpenAI did not publish a clear, reproducible paper at the time. DeepSeek did, and open-sourced much of the insight, proving that verifiable RL can produce massive capability jumps in math, coding, and logical reasoning.

This was the spark: the community (and frontier labs) realized RL was no longer a finicky side-channel; it was a parallel scaling axis to pre-training.

Step 2: A Year of Building Truly Scalable Long-Horizon Environments

Long-horizon environments that scale needed three things:

- Reliable verifiers for intermediate and final steps;

- Stable reward signals that don't collapse and can't easily be gamed through reward hacking;

- Efficient algorithms (GRPO variants, mean+variance normalization, dynamic sampling, decoupled clipping) to handle the variance explosion in long trajectories.
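Two of the algorithmic refinements in that list can be sketched in a few lines. Dynamic sampling drops prompts whose rollouts all received the same reward, because they carry no learning signal once rewards are normalized within the group. Decoupled clipping uses separate lower and upper bounds on the policy ratio in the PPO-style clipped objective. The function names and the `eps_low` / `eps_high` values below are illustrative assumptions, not settings reported by any particular lab.

```python
def keep_group(rewards):
    """Dynamic sampling filter: keep a prompt only if its rollouts disagree.

    If every rollout got the same reward, group-normalized advantages are all
    zero, so the prompt contributes nothing to the gradient.
    """
    return max(rewards) != min(rewards)

def clipped_term(ratio, advantage, eps_low=0.2, eps_high=0.28):
    """Per-token surrogate objective with decoupled clip bounds.

    ratio: new_policy_prob / old_policy_prob for the sampled token.
    The ratio is clipped to [1 - eps_low, 1 + eps_high]; taking the min with
    the unclipped term keeps the update pessimistic, as in PPO.
    """
    clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
    return min(ratio * advantage, clipped * advantage)

# Hypothetical batch: prompt id -> verifier rewards for its rollouts.
batch = {"p1": [1.0, 1.0, 1.0, 1.0], "p2": [1.0, 0.0, 1.0, 0.0], "p3": [0.0, 0.0, 0.0, 0.0]}
informative = [pid for pid, rewards in batch.items() if keep_group(rewards)]
```

A looser upper bound lets low-probability but rewarded tokens grow faster, while the tighter lower bound still limits how hard a single update can push probabilities down. That asymmetry is one way to keep exploration alive over long trajectories.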

By late 2025, the pieces were in place. Labs had internalized DeepSeek's lesson: if you can define verifiable success for long-horizon tasks (e.g., "does the agent eventually produce working code after navigating a repo?"), then RL can teach models to stay on track, self-recover from dead ends, and reason coherently over extended contexts.

Step 3: Anthropic Delivers Opus 4.5 — The First RL-Supercharged Agent Model

Described as the strongest model yet for coding, agents, and computer use, Opus 4.5 exhibited precisely the behaviors enabled by scaled RL with verifiable rewards:

- It no longer "got lost" in long tasks;

- It navigated bash shells reliably;

- It self-corrected, iterated, and returned to the "path of truth" even after hundreds of steps;

- It powered heavy-duty agentic workflows (GitHub Copilot integrations, autonomous refinement loops) far better than previous generations.

Anthropic's internal RL investments — building on the verifiable-rewards playbook popularized by DeepSeek — turned Opus 4.5 into the first truly reliable long-horizon agent model.

Early benchmarks and user reports confirmed it crossed a qualitative threshold: agents could now handle real work without constant human babysitting.

The Bigger Picture: Two Scaling Laws Now Operate in Parallel

Pre-training scaling

More parameters, more data, more pre-training compute → stronger base models.

GRPO / RL with Verifiable Rewards scaling

More environment rollouts, more verifiable tasks, more RL compute → dramatically better reasoning, agentic reliability, and long-context coherence.

The result? By even a conservative estimate, the effective "intelligence growth rate" of frontier LLMs roughly **doubled** in 2025. In certain domains (coding, math, agent workflows) the gain is likely larger still, because the two axes compound: better base models make RL more sample-efficient, and better RL makes base models stronger at reasoning, which feeds back into pre-training.
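One way to read the "doubled" claim is as a toy model, under assumptions that are mine rather than measured: treat capability $C(t)$ as the product of two independent exponential contributions, with hypothetical growth rates $r_{\text{pre}}$ for pre-training and $r_{\text{RL}}$ for RL.

$$
C(t) = C_0 \, e^{r_{\text{pre}} t} \, e^{r_{\text{RL}} t} = C_0 \, e^{(r_{\text{pre}} + r_{\text{RL}})\, t}
$$

The rates add in the exponent, so if the RL axis contributes about as much as the pre-training axis ($r_{\text{RL}} \approx r_{\text{pre}} = r$), the combined growth rate is $2r$, which is the doubling. If the two axes also reinforce each other, so that each rate rises as the other axis improves, growth outpaces this baseline, and that is the compounding effect described above.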

Why OpenClaw Exploded

Without DeepSeek's 2024–2025 demonstration that verifiable RL scales — and the subsequent year of environment-building and algorithmic refinement — no frontier model in early 2026 would have been reliable enough to power something like OpenClaw without constant crashes or hallucinations. The viral success of OpenClaw is downstream proof: the RL-with-verifiable-rewards revolution has escaped the labs and is now reshaping how ordinary people (and companies) interact with AI.

In short: DeepSeek lit the fuse. A year of quiet scaling built the bomb. Opus 4.5 detonated it. OpenClaw is the shrapnel — and it's only the beginning.