François Chollet’s Radical New Definition of AGI: It’s Not About Automation — It’s About Human-Like Skill Acquisition

In a recent episode of the Y Combinator podcast, François Chollet offered what may be one of the clearest and most provocative definitions of Artificial General Intelligence we’ve heard in years. Chollet created Keras, wrote *Deep Learning with Python*, and designed the ARC-AGI benchmark.

And it directly challenges the story the entire industry has been telling itself.

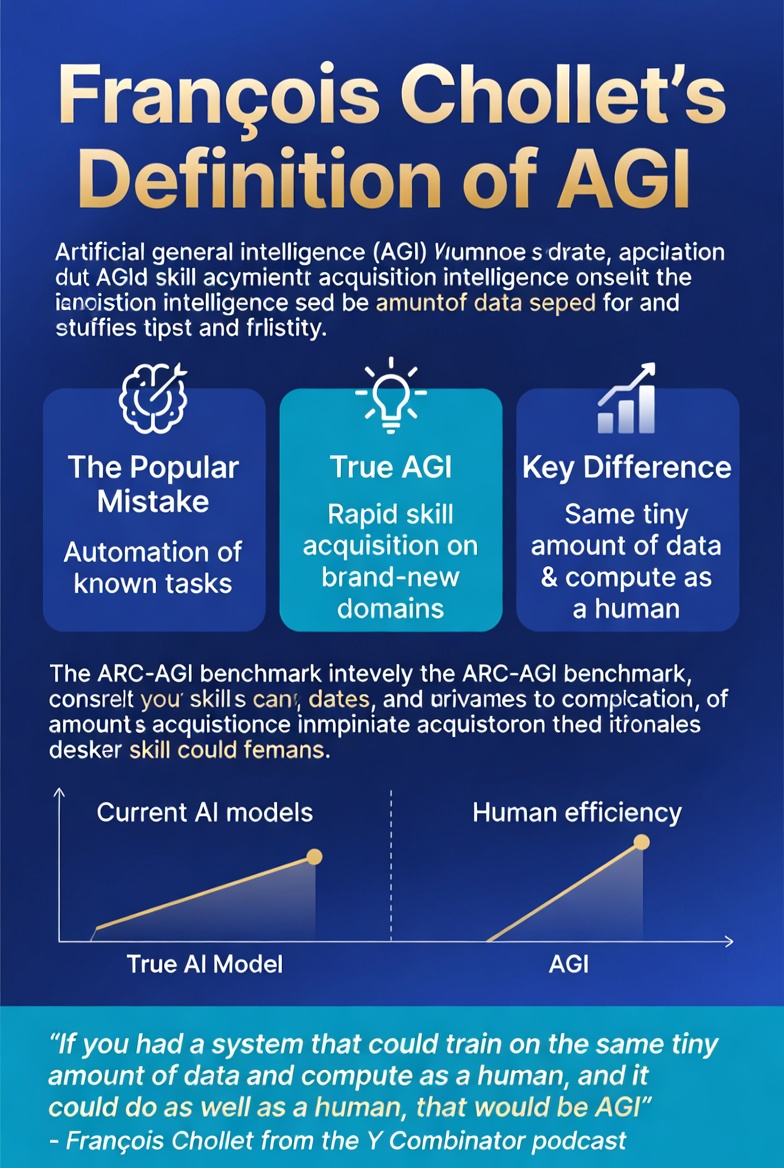

The Popular (and, According to Chollet, Wrong) Definition

Most people in AI today define AGI in economic or task-oriented terms:

“A system that can automate the majority of economically valuable cognitive tasks.”

It sounds reasonable. It’s measurable. It’s what investors, CEOs, and benchmark leaders usually mean when they talk about “AGI timelines.” If the model can do most white-collar work better and cheaper than humans, we’ve basically arrived.

Chollet says this definition doesn’t describe general intelligence at all.

It describes extremely sophisticated automation.

Chollet’s Alternative: AGI as Extreme Data Efficiency on Novel Tasks

> “AGI is basically going to be a system that can approach any new problem, any new task, any new domain and make sense of it — model it, become competent at it — with the same degree of efficiency as a human could. So meaning it’s going to need basically the same amount of training data and training compute as a human would… which is very little. Humans are really data-efficient.”

In other words:

True general intelligence isn’t about how many tasks a system can already perform. It’s about how quickly and efficiently it can *acquire brand-new skills* in a completely unfamiliar domain — using roughly the same tiny amount of experience and compute that a human would need.

A human can watch a few examples of a new game, a new programming paradigm, or a new scientific problem and start making progress almost immediately. That’s the signal of intelligence Chollet is looking for.

Why This Distinction Matters

- Today’s frontier models are astonishing at interpolating within their training distribution.

- They are still terrible at extrapolating to genuinely novel situations without massive additional data or human scaffolding.

Chollet argues that we’ve confused “memorizing and recombining vast amounts of human knowledge” with “the ability to learn and invent like humans do.” The former scales well with more compute and data. The latter, which is what true AGI requires, calls for a fundamentally different kind of architecture: one that treats learning as efficient program synthesis rather than curve-fitting on internet-scale corpora.

This is exactly why he built the ARC-AGI benchmark: not as another “can it pass this test?” leaderboard, but as a direct probe of this kind of fluid, data-efficient intelligence. Each task shows only a few input/output grid examples, and the tasks are specifically designed to fall outside any training distribution.
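To make “learning as program synthesis” a little more concrete, here is a toy Python sketch. It assumes a simplified ARC-style task (grids as lists of lists of integers, plus a couple of training input/output pairs) and a tiny, hypothetical set of grid primitives. The primitive names, `run`, and `synthesize` are illustrative inventions, not Chollet’s method or ARC-AGI’s official tooling. The idea it shows: instead of fitting weights to millions of samples, the search looks for the shortest program consistent with every example, then applies that program to a new input.

```python
from itertools import product
from typing import Callable, Dict, List, Optional, Tuple

Grid = List[List[int]]

# Hypothetical primitive grid transformations: a toy DSL, not ARC-AGI's.
def flip_horizontal(g: Grid) -> Grid:
    return [row[::-1] for row in g]

def flip_vertical(g: Grid) -> Grid:
    return [row[:] for row in g[::-1]]

def transpose(g: Grid) -> Grid:
    return [list(col) for col in zip(*g)]

def recolor_1_to_2(g: Grid) -> Grid:
    return [[2 if c == 1 else c for c in row] for row in g]

PRIMITIVES: Dict[str, Callable[[Grid], Grid]] = {
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
    "recolor_1_to_2": recolor_1_to_2,
}

def run(program: Tuple[str, ...], g: Grid) -> Grid:
    """Apply each primitive in the program, left to right."""
    for name in program:
        g = PRIMITIVES[name](g)
    return g

def synthesize(examples: List[Tuple[Grid, Grid]],
               max_depth: int = 3) -> Optional[Tuple[str, ...]]:
    """Brute-force search for the shortest program that maps every
    example input to its output; returns None if none is found."""
    for depth in range(1, max_depth + 1):
        for program in product(PRIMITIVES, repeat=depth):
            if all(run(program, inp) == out for inp, out in examples):
                return program
    return None

# Two demonstration pairs; the hidden rule is "mirror left-right, then recolor 1 -> 2".
train = [
    ([[1, 0], [0, 0]], [[0, 2], [0, 0]]),
    ([[0, 1, 1], [1, 0, 0]], [[2, 2, 0], [0, 0, 2]]),
]

program = synthesize(train)
print(program)  # ('flip_horizontal', 'recolor_1_to_2')
print(run(program, [[1, 1, 0], [0, 0, 1], [1, 0, 0]]))  # [[0, 2, 2], [2, 0, 0], [0, 0, 2]]
```

Two examples are enough here because the hypothesis space is tiny and the search prefers short programs. Brute-force enumeration grows exponentially with program length, so making this kind of search work over a rich space of concepts is the hard, open part of the problem, which this sketch does not attempt.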

The Takeaway

Real AGI, in Chollet’s view, won’t be the system that can do everything a human can after seeing the entire internet.

It will be the system that can do *something entirely new* after seeing a handful of examples, just as you or I can.

The whole conversation is worth watching (or listening to). Chollet is one of the few voices in AI who consistently asks the uncomfortable question: are we actually building intelligence, or just the world’s most sophisticated autocomplete?

Podcast link: François Chollet: Why Scaling Alone Isn’t Enough for AGI

The industry has spent years optimizing for one definition of AGI.

Chollet has just reminded us that there might be a better one, and that we’re nowhere close to it yet.