OpenAI Privacy Filter: The Quietly Released PII Guardian That Finally Solves Enterprise Data Leakage

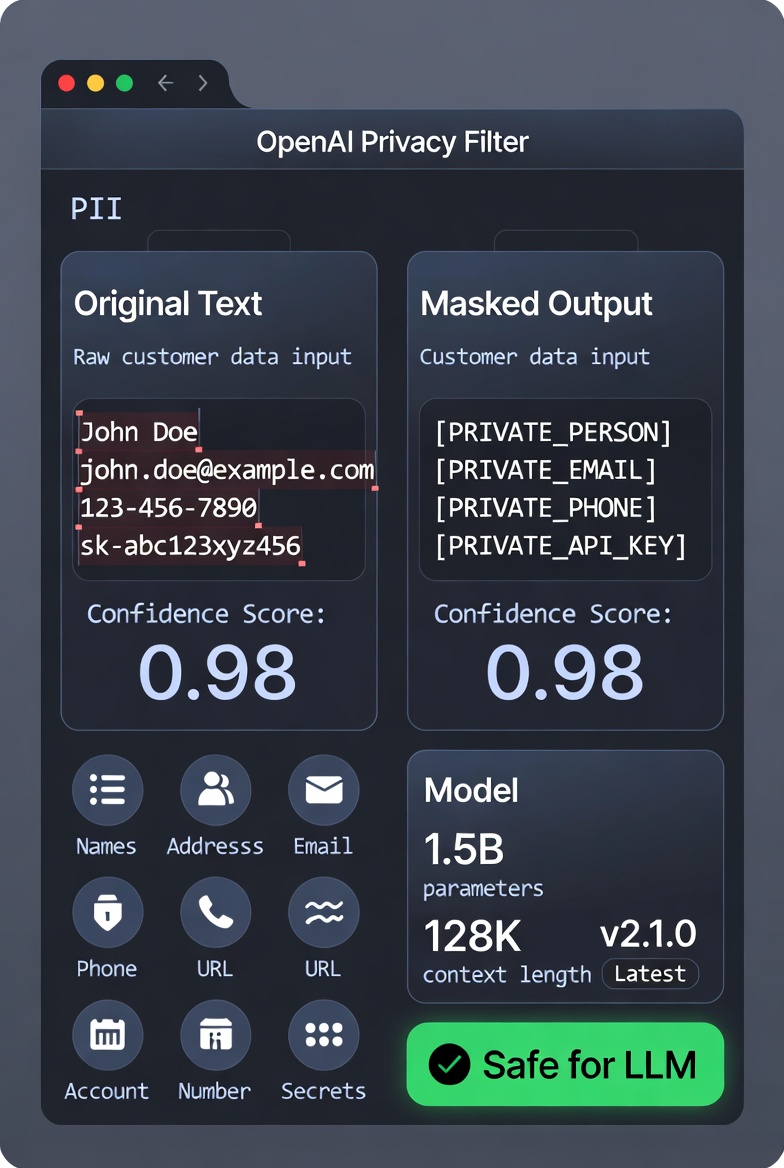

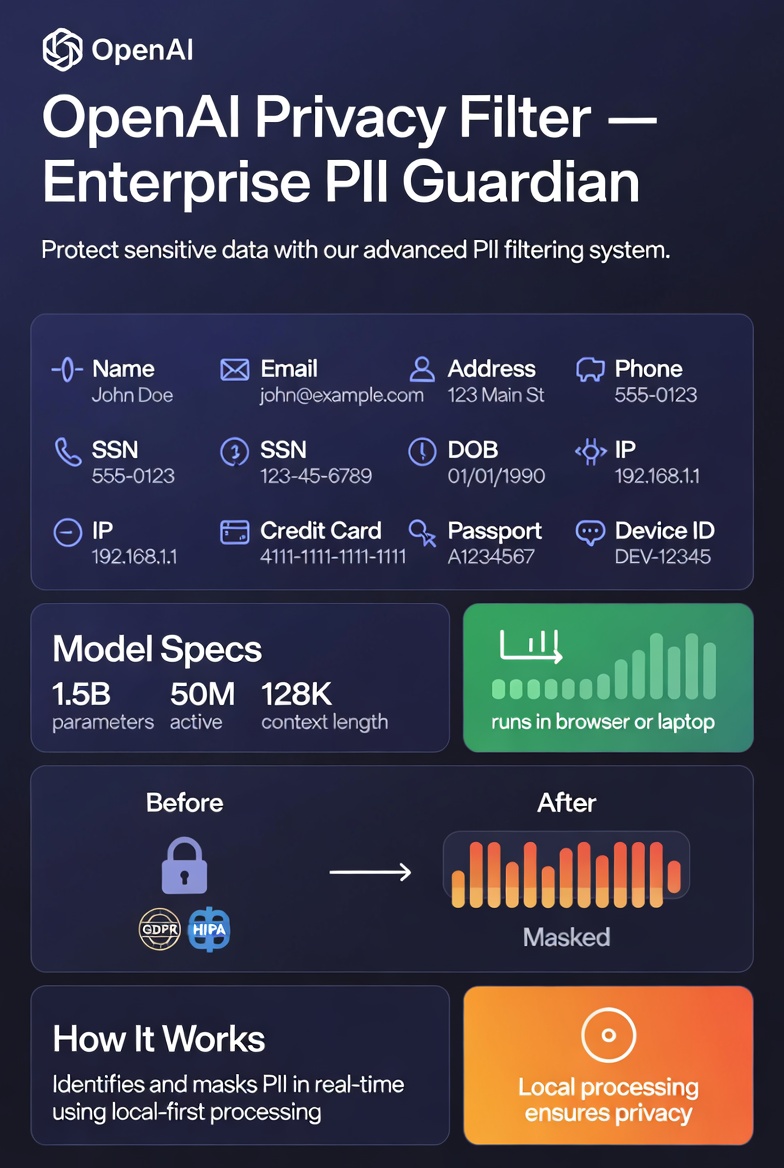

OpenAI has just dropped a specialized open-weight model on Hugging Face that many AI teams have been quietly begging for: OpenAI Privacy Filter. It’s a lightweight, context-aware tool designed specifically for detecting and redacting personally identifiable information (PII) in text — perfect for enterprise LLM workflows, customer data pipelines, and any scenario where sensitive information must never touch a third-party API.

Why This Matters (And Why It’s a Big Deal)

Every team building AI products that touch real customer data faces the same nightmare:

- You can’t send raw prompts to ChatGPT, Claude, or any cloud LLM without risking GDPR, CCPA, or HIPAA violations.

- Classic regex patterns and spaCy-style NER models miss context, nicknames, regional formats, or cleverly obfuscated secrets.

- Heavy NLP libraries or larger local models are either too slow, too inaccurate, or too resource-hungry for high-throughput pipelines.

Privacy Filter closes that exact gap. It’s small enough to run on a laptop or even directly in the browser, yet powerful enough to deliver frontier-level PII detection in a single forward pass.

What’s Inside the Model

- Architecture: Built on a compact version of OpenAI’s gpt-oss-style architecture — a bidirectional token-classification transformer with grouped-query attention, rotary embeddings, and sparse Mixture-of-Experts (MoE) feed-forward blocks.

- Size: 1.5B total parameters, but only 50M active thanks to MoE efficiency.

- Context window: Massive 128K tokens — enough to process entire documents, long chat histories, or bulk customer records at once.

- Inference: Runs locally via Hugging Face Transformers (Python) or Transformers.js (JavaScript + WebGPU). Zero API calls required.
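To see why a sparse MoE layer can keep the active parameter count far below the total, here is a minimal sketch of generic top-k expert routing. It is not the model's actual code: the expert count, the value of `k`, the router scores, and the helper names `top_k_route` and `make_expert` are all assumptions chosen for illustration.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)
    # Softmax over the selected experts only, so their weights sum to 1.
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

calls = []  # records which toy experts actually ran

def make_expert(scale):
    """Build a toy 'expert' that just multiplies its input by a constant."""
    def expert(x):
        calls.append(scale)
        return x * scale
    return expert

experts = [make_expert(s) for s in range(1, 9)]  # 8 hypothetical experts
router_logits = [0.1, 2.0, -1.0, 0.5, 1.0, -0.3, 0.0, -2.0]  # made-up scores for one token

routes = top_k_route(router_logits, k=2)
# Only the routed experts are evaluated; the other six never run for this token.
output = sum(weight * experts[i](3.0) for i, weight in routes)
print(routes, sorted(calls))
```

All eight experts count toward the total size, but each token only pays for the two it is routed to, which is the general idea behind the gap between total and active parameters.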

Eight Critical PII Categories, Handled with Precision

1. `private_person` (names, including nicknames and context-aware references)

2. `private_address` (physical addresses)

3. `private_email`

4. `private_phone`

5. `private_url`

6. `private_date`

7. `account_number` (bank accounts, credit cards, etc.)

8. `secret` (API keys, tokens, passwords, and other high-entropy credentials)

It doesn’t just slap regex over the text — it uses constrained Viterbi decoding over BIOES tags to produce coherent, contextually accurate spans. After detection, you can mask the data for safe LLM processing and (if needed) reverse the masking downstream with your own mapping logic.
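As a rough illustration of what constrained Viterbi decoding over BIOES tags means, the sketch below picks the highest-scoring tag sequence that obeys the BIOES rules (a span opened with B must continue with I or E of the same category). It is a generic textbook version, not the model's decoder: the `B-private_person` label naming, the helper names, and the per-token scores are assumptions made up for the demo.

```python
import math

def build_labels(categories):
    """One 'O' label plus B/I/E/S variants for every category."""
    labels = ["O"]
    for cat in categories:
        labels += [f"{prefix}-{cat}" for prefix in "BIES"]
    return labels

def allowed(prev, curr):
    """BIOES transition rule between two adjacent labels."""
    p_prefix, _, p_cat = prev.partition("-")
    c_prefix, _, c_cat = curr.partition("-")
    if p_prefix in ("B", "I"):          # inside an open span
        return c_prefix in ("I", "E") and c_cat == p_cat
    return c_prefix in ("O", "B", "S")  # after O, E or S a span may start

def viterbi(scores, labels):
    """scores[t][j] = log-score of labels[j] at token t; returns the best valid path."""
    n = len(labels)
    prefixes = [lab.partition("-")[0] for lab in labels]
    # A sequence may not start in the middle of a span.
    best = [scores[0][j] if prefixes[j] in ("O", "B", "S") else -math.inf
            for j in range(n)]
    back = []
    for t in range(1, len(scores)):
        new_best, pointers = [], []
        for j in range(n):
            prev_score, prev_i = max(
                (best[i], i) for i in range(n) if allowed(labels[i], labels[j]))
            new_best.append(prev_score + scores[t][j])
            pointers.append(prev_i)
        best = new_best
        back.append(pointers)
    # A sequence may not end with an unclosed span.
    _, j = max((best[j], j) for j in range(n) if prefixes[j] in ("O", "E", "S"))
    path = [j]
    for pointers in reversed(back):
        j = pointers[j]
        path.append(j)
    return [labels[j] for j in reversed(path)]

labels = build_labels(["private_person"])  # O, B-, I-, E-, S-
tokens = ["Alice", "Johnson", "called"]
scores = [                                  # made-up log-scores, one row per token
    [-3.0, -0.2, -5.0, -5.0, -2.5],
    [-0.9, -4.0, -3.0, -1.0, -4.0],
    [-0.1, -5.0, -5.0, -5.0, -5.0],
]
greedy = [labels[max(range(len(labels)), key=row.__getitem__)] for row in scores]
decoded = viterbi(scores, labels)
print(greedy, decoded)
```

In this toy input the per-token argmax opens a span on "Alice" and then immediately emits `O`, which is not a valid BIOES sequence; the constrained decoder instead closes the span on "Johnson". With all eight categories, the same scheme yields 8 × 4 + 1 = 33 labels.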

From Pain Point to Production-Ready Solution

Until now, teams typically had to stitch PII protection together from some combination of:

- Microsoft’s Presidio;

- Custom spaCy pipelines;

- Tiny local classifiers;

- Or, worst-case, manual review.

Now you have a single, high-recall model that runs on-device, supports 128K context, and was explicitly designed for on-prem / air-gapped / GDPR-perimeter environments. OpenAI even uses a version of it internally for their own privacy-preserving workflows.

It’s not a full anonymization suite or a compliance silver bullet (the model card is very clear about that), but it’s the best practical first layer the industry has seen for data minimization at scale.

How to Get Started (It’s Stupidly Easy)

The model is live right now:

- Hugging Face: https://huggingface.co/openai/privacy-filter

- GitHub: https://github.com/openai/privacy-filter

- Demo space: Try it instantly in the browser

A basic Python example:

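```python
from transformers import pipeline

# Load the model as a standard token-classification pipeline (runs locally).
classifier = pipeline("token-classification", model="openai/privacy-filter")

# alice.johnson@example.com is a placeholder address used for this demo.
result = classifier("My name is Alice Johnson, email is alice.johnson@example.com, and my API key is sk-abc123xyz.")
print(result)
```

The output lists the detected entities with confidence scores, ready to mask or log.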
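Masking with your own reversible mapping could look roughly like the sketch below. It assumes the detections have been collected as dicts with `entity_group`, `start`, and `end` keys (the shape the Transformers token-classification pipeline uses when an aggregation strategy is set); whether Privacy Filter's raw output matches that shape exactly is not confirmed here. The helpers `mask_entities` and `unmask`, the placeholder format, and the hand-written `entities` list are hypothetical.

```python
def mask_entities(text, entities):
    """Replace each detected span with a numbered placeholder; return text and mapping."""
    mapping, counters, pieces = {}, {}, []
    cursor = 0
    for ent in sorted(entities, key=lambda e: e["start"]):
        if ent["start"] < cursor:
            continue  # skip spans that overlap one already masked
        label = ent["entity_group"]
        counters[label] = counters.get(label, 0) + 1
        placeholder = f"[{label.upper()}_{counters[label]}]"
        mapping[placeholder] = text[ent["start"]:ent["end"]]
        pieces.append(text[cursor:ent["start"]])
        pieces.append(placeholder)
        cursor = ent["end"]
    pieces.append(text[cursor:])
    return "".join(pieces), mapping

def unmask(text, mapping):
    """Put the original values back, e.g. into an LLM response."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text

text = "My name is Alice Johnson and my API key is sk-abc123xyz."

def span(label, value):
    """Build a hand-written detection for the demo (not real model output)."""
    start = text.index(value)
    return {"entity_group": label, "start": start, "end": start + len(value)}

entities = [span("private_person", "Alice Johnson"), span("secret", "sk-abc123xyz")]
masked, mapping = mask_entities(text, entities)
print(masked)
print(unmask(masked, mapping))
```

Keep the mapping inside your own perimeter: only the masked text should be sent to a third-party API, and the mapping is what lets you restore the original values afterwards.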

The Bottom Line

In an era where every AI product is one accidental data leak away from headlines, OpenAI Privacy Filter feels like a genuinely thoughtful release. It’s fast, local-first, open-weight, and laser-focused on solving a real, painful problem that every enterprise AI team shares.

If you’re building anything that processes customer messages, support tickets, forms, or internal documents before they hit an LLM — this is the tool you’ve been waiting for.

Go grab it. Your compliance team (and your legal department) will thank you.