Finally, Something Truly New from Mira Murati (And Yes — the $2 Billion Was Absolutely Worth It)

On May 11, 2026, Mira Murati’s Thinking Machines Lab dropped something that actually feels fresh in the voice-AI space: Interaction Models.

Not another incremental tweak to ChatGPT Voice or Gemini Live. Not a slightly better text-to-speech (TTS) engine or a marginally smarter voice activity detector (VAD). This is a ground-up rethink of how AI should talk to humans, and it might be the first real leap in multimodal interaction since the original voice mode launched.

The Problem Everyone Pretended Wasn’t There

Current voice assistants are basically held together with duct tape and hope:

- VAD waits for you to stop talking → model goes deaf.

- Model starts generating → it goes blind and can’t react to anything new.

- Separate TTS, separate dialogue manager, separate tool-calling layer.

The result? Robotic turn-taking, awkward pauses, zero ability to interrupt, no real understanding of timing, and absolutely no native awareness of what you’re showing on camera.

Everyone knew it sucked. No one had a clean architectural fix.

Until now.

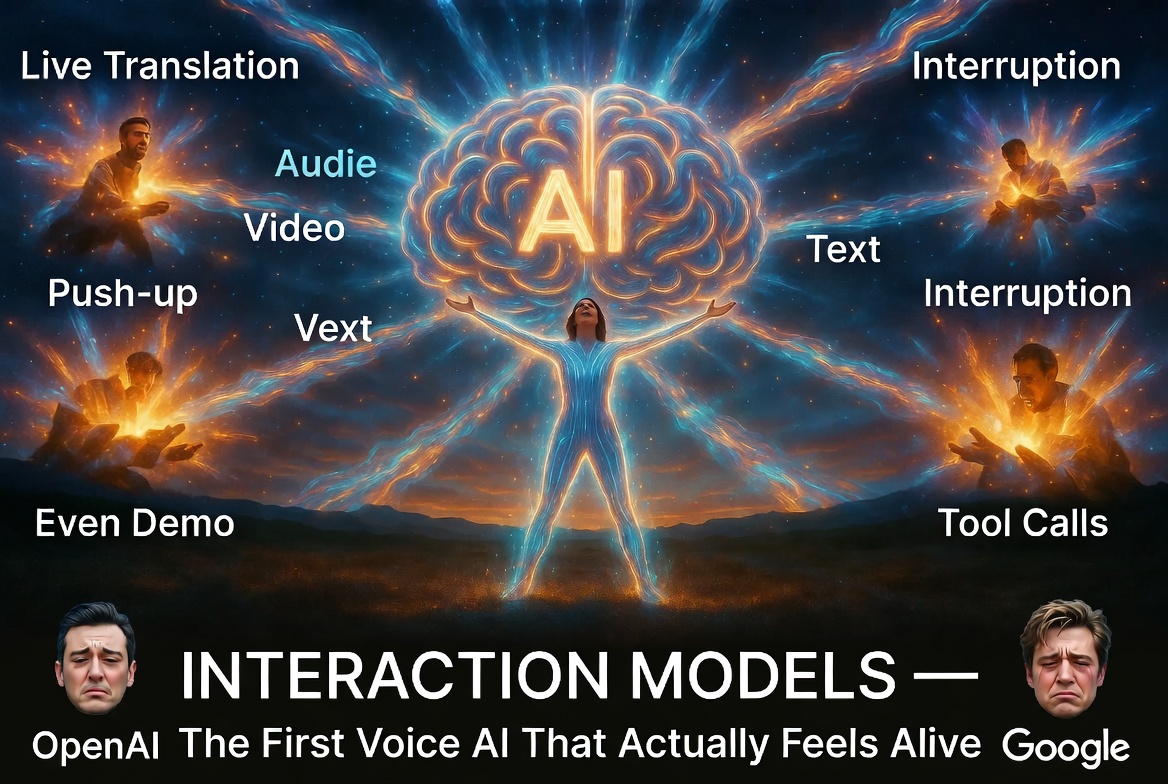

Interaction Models: Real-Time, Full-Duplex, Native Multimodality

Thinking Machines built TML-Interaction-Small, a 276-billion-parameter Mixture-of-Experts model (only 12B parameters active per token) trained from scratch for continuous interaction.
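
To put that sparsity in perspective, here is a quick back-of-the-envelope calculation. It uses only the two figures quoted above (276B total, 12B active); the function name is purely illustrative.

```python
def active_fraction(total_params_b: float, active_params_b: float) -> float:
    """Return the share of parameters that is active in a sparse MoE model."""
    if total_params_b <= 0 or not 0 <= active_params_b <= total_params_b:
        raise ValueError("expected total > 0 and 0 <= active <= total")
    return active_params_b / total_params_b


# Figures quoted in the article: 276B total parameters, 12B active.
share = active_fraction(276, 12)
print(f"{share:.1%} of the weights are active")  # 4.3% of the weights are active
```

In other words, roughly one weight in 23 does any work at a given step, which is a big part of why a model this large can plausibly keep up with a real-time loop.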

The core idea is brutally elegant:

- Everything happens in 200-millisecond micro-chunks.

- Audio, video, and text streams run in parallel the entire time.

- A lightweight Interaction Model stays in constant live dialogue (listening, backchanneling, interrupting, reacting to visuals).

- A separate Background Model runs heavy reasoning, tool calls, and web searches asynchronously and injects results into the conversation at exactly the right moment.
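
The split between the two models can be pictured with a toy scheduling sketch. This is not Thinking Machines' code: `background_task`, `interaction_loop`, and the fake "weather" query are all hypothetical stand-ins, and the real system runs neural models rather than sleeps. The only point is that the live loop ticks every chunk and never blocks on the slow work.

```python
import asyncio

CHUNK_SECONDS = 0.2  # micro-chunk length quoted in the article


async def background_task(query: str, delay: float, results: asyncio.Queue) -> None:
    """Hypothetical stand-in for slow reasoning, a tool call, or a web search."""
    await asyncio.sleep(delay)
    await results.put(f"result for {query!r}")


async def interaction_loop(num_chunks: int, chunk_seconds: float = CHUNK_SECONDS) -> list[str]:
    """Tick once per micro-chunk without ever waiting on the background work."""
    results: asyncio.Queue = asyncio.Queue()
    log: list[str] = []
    # Start the heavy work up front and keep the conversation going meanwhile.
    worker = asyncio.create_task(
        background_task("weather", 2.5 * chunk_seconds, results)
    )
    for i in range(num_chunks):
        await asyncio.sleep(chunk_seconds)  # one chunk of audio/video "arrives"
        if results.empty():
            log.append(f"chunk {i}: listening")
        else:
            # A finished result is woven into the live stream on the next tick.
            log.append(f"chunk {i}: inject {results.get_nowait()}")
    await worker
    return log


if __name__ == "__main__":
    for line in asyncio.run(interaction_loop(5)):
        print(line)
```

Run it and the loop logs "listening" for the first chunks, then injects the background result as soon as it is ready, with no pause in between.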

No more “I’m thinking…” blackouts. No more “please wait while I process.” The AI feels present.

What This Actually Unlocks (And What No One Else Can Do Yet)

The demos are ridiculous in the best way:

- The model interrupts you mid-sentence when you say something wrong.

- It can speak at the same time as you, which is ideal for live translation or running commentary.

- It watches your camera and reacts to visual triggers: “Count my push-ups,” “Tell me when I raise a finger,” “Start counting when the game begins.”

- It has native time awareness: “Remind me to breathe every 4 seconds” actually works.

- It runs web searches and tool calls **while still talking to you** without dropping the conversation.
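
One way to think about the time-awareness demo: if the model really processes a fixed 200 ms chunk at every step, the chunk count itself works as a clock. The article does not say how the model tracks time internally, so treat the sketch below as an illustration of that idea only; `reminder_chunks` is a made-up helper.

```python
def reminder_chunks(interval_ms: int, chunk_ms: int = 200, total_chunks: int = 100) -> list[int]:
    """Return the chunk indices at which a periodic reminder would fire."""
    if interval_ms <= 0 or chunk_ms <= 0:
        raise ValueError("interval and chunk length must be positive")
    if interval_ms % chunk_ms != 0:
        raise ValueError("this sketch only handles whole numbers of chunks")
    step = interval_ms // chunk_ms  # 4000 ms / 200 ms = 20 chunks
    return list(range(step, total_chunks + 1, step))


# "Remind me to breathe every 4 seconds" over the first 20 seconds (100 chunks).
print(reminder_chunks(4000))  # [20, 40, 60, 80, 100]
```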

This isn’t “voice mode with extra steps.” This is the first model that treats interaction as a first-class citizen instead of an afterthought bolted on top.

The Numbers Don’t Lie

On FD-bench v1.5 (the current gold standard for full-duplex interaction quality), TML-Interaction-Small scores 77.8 — while GPT-Realtime and Gemini Live sit in the 46–54 range.
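
For a sense of scale, the gap works out as follows. This assumes the quoted "46–54 range" marks the lowest and highest competitor scores and that all scores are on the same scale; `lead_over` is just an illustrative helper.

```python
def lead_over(score: float, low: float, high: float) -> tuple[float, float]:
    """Return (smallest_lead, largest_lead) in points over a competitor range."""
    return round(score - high, 1), round(score - low, 1)


# Scores quoted in the article: 77.8 versus a 46-54 range.
min_lead, max_lead = lead_over(77.8, 46, 54)
print(min_lead, max_lead)  # 23.8 31.8
```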

Turn-taking latency? 400 ms — basically human conversation speed and currently the best in the industry.

On the new internal benchmarks designed specifically for these capabilities (TimeSpeak, CueSpeak, RepCount, Charades, ProactiveVideoQA), the competitors score zero or single-digit percentages. Nobody else even has the architecture to attempt these tasks properly.

Under the Hood Magic

The team went deep on stability and real-time optimization: encoder-free early fusion, streaming sessions that keep context in GPU memory, custom kernels for deterministic training/inference, and a bunch of low-level tricks to keep everything buttery smooth even at frontier scale.

They didn’t just make the model smarter — they made the entire interaction loop fundamentally different.

Status and What’s Next

Right now it’s a closed research preview. Limited access is rolling out in the coming months, with a wider release planned for later in 2026. They’re also offering research grants to help the community build better interaction benchmarks.

But the signal is clear: after all the hype cycles and incremental updates, someone finally decided to solve the actual problem instead of polishing the old one.

Mira Murati and the Thinking Machines team just reminded the entire industry why $2 billion in funding matters — sometimes it’s not about building a slightly bigger model. It’s about building the right one.

Watch the demo video here: https://youtu.be/A12AVongNN4

Read the full technical post: https://thinkingmachines.ai/blog/interaction-models/

Finally. A voice AI that doesn’t just wait for its turn to speak.

It listens. It watches. It thinks. And it talks back like it’s actually here with you.