DeepSeek-V4: The Open-Source Model That Just Made 1 Million Tokens Routine

DeepSeek has dropped a preview of DeepSeek-V4, a direct, no-compromise open-source challenger to Claude, GPT-5, and Gemini.

If this model had landed in January 2026, it would have been called a generational leap. In late April, it’s simply “the new king of open-source.” And that’s exactly why it matters: V4 isn’t flashy hype — it’s a meticulously engineered platform built on every major architectural breakthrough of the past year. The kind of base model that fine-tuning teams will turn into frontier-beaters in months, not years.

Two Versions, One Clear Strategy

DeepSeek released two variants right out of the gate:

- V4-Pro — the 1.6-trillion-parameter flagship. Built for heavy reasoning, competitive coding, and serious agentic workflows.

- V4-Flash — the 284-billion-parameter speed demon. Faster and dramatically cheaper, yet still smarter than most previous-generation giants.

Both share the same core DNA, but Flash is aggressively optimized for production and cost-sensitive use cases.

The Real Headline: 1 Million Tokens That Actually Work

DeepSeek went straight after the two classic long-context bottlenecks, compute and memory:

- 4× less compute at 1M-token context compared to DeepSeek-V3.2;

- 10× smaller KV-cache memory footprint;

- The Flash version goes even further: just 10 % of the compute and 7 % of the memory of the previous generation.
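To make the memory claim concrete, here is a back-of-the-envelope sketch of how a conventional KV cache scales with context length. The layer count, KV-head count, head dimension, and fp16 precision are hypothetical round numbers, not DeepSeek-V4's published architecture (DeepSeek models use compressed attention schemes that this simple formula does not capture); the only figure taken from the article is the 10× reduction.

```python
# Rough KV-cache sizing for a plain (uncompressed) attention stack.
# All config numbers below are HYPOTHETICAL, not DeepSeek-V4's real architecture.

def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys AND values for every layer and every token."""
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem  # 2 = K and V
    return per_token * seq_len

GIB = 1024 ** 3
ONE_MILLION_TOKENS = 1 << 20  # 1,048,576 tokens

# Hypothetical baseline: 60 layers, 8 KV heads, head_dim 128, fp16 (2 bytes/element).
baseline = kv_cache_bytes(num_layers=60, num_kv_heads=8, head_dim=128,
                          seq_len=ONE_MILLION_TOKENS)
reduced = baseline / 10  # the article's "10x smaller KV-cache" applied to this baseline

print(f"baseline: {baseline / GIB:.0f} GiB, after 10x reduction: {reduced / GIB:.0f} GiB")
# prints "baseline: 240 GiB, after 10x reduction: 24 GiB"
```

Under these assumed settings the cache alone would eat roughly 240 GiB at 1M tokens, which shows why a 10× cut matters so much at this scale.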

All of this runs efficiently even on affordable Huawei Ascend processors. Long-context AI just stopped being a premium feature and became table stakes. For anyone building agents or working with massive document corpora, this is a genuine economic reset.

Three Thinking Modes That Actually Change Behavior

DeepSeek introduced explicit reasoning modes that go beyond simple temperature tweaks:

- Non-think — instant answers for simple queries;

- Think High — deep analysis, planning, and multi-step reasoning;

- Think Max — maximum effort mode. The model writes out every step, stress-tests hypotheses, and refuses to take shortcuts.

Crucially, Think Max now preserves the entire reasoning history between user turns (a major upgrade from V3.2, where it was discarded). Combined with reliable 1M context, this makes V4-Pro a far stronger foundation for long-running autonomous agents.
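The difference between discarding and preserving reasoning can be sketched as a context-assembly policy. This is a hypothetical illustration: the `Turn` structure, the `build_context` helper, and the segment labels are invented for clarity and are not DeepSeek's chat template or API.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    user: str       # what the user asked
    reasoning: str  # the model's chain of thought for that turn (hypothetical field)
    answer: str     # the final reply shown to the user

def build_context(history: list[Turn], new_user_msg: str, keep_reasoning: bool) -> list[str]:
    """Assemble the text segments fed to the model for the next turn."""
    segments: list[str] = []
    for turn in history:
        segments.append(f"user: {turn.user}")
        if keep_reasoning:
            # Preserving policy: earlier reasoning stays visible to the model.
            segments.append(f"reasoning: {turn.reasoning}")
        segments.append(f"assistant: {turn.answer}")
    segments.append(f"user: {new_user_msg}")
    return segments

history = [Turn("Plan the migration", "Step 1: inventory tables...", "Here is the plan.")]
discarded = build_context(history, "Now execute step 1", keep_reasoning=False)
preserved = build_context(history, "Now execute step 1", keep_reasoning=True)
print(len(discarded), len(preserved))  # prints "3 4"
```

Keeping reasoning means every earlier chain of thought occupies context on each new turn, which is why this feature leans so heavily on cheap long context.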

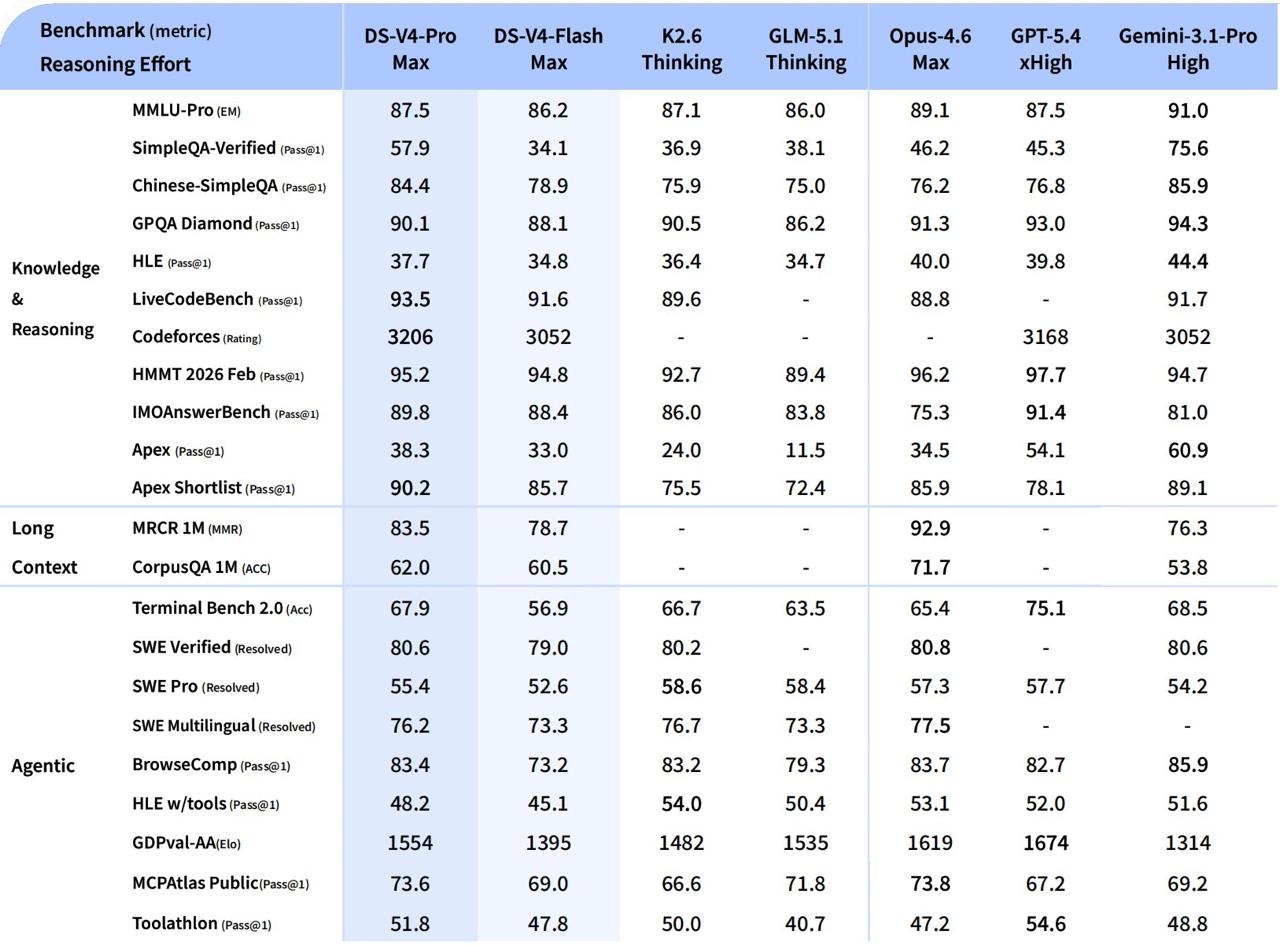

Benchmarks: Frontier Pressure, Not Quite Frontier Lead

- Competitive Coding: Codeforces rating of 3206, equivalent to 23rd place among living human programmers worldwide. It is the first time an open-source model has reached parity with the closed frontier (roughly GPT-5.4 level).

- Mathematics: 95.2 on HMMT 2026 and 89.8 on IMOAnswerBench. Beats the majority of closed models.

- Knowledge: SimpleQA Verified — 57.9 (Opus 4.6 scores 46.2; Gemini 3.1 Pro still leads at 75.6).

- General Reasoning: Trails GPT-5.4 and Gemini 3.1 Pro by roughly 3–6 months, but sits comfortably at the edge of the frontier.

- Internal R&D Coding: 67 % success rate — sits between Sonnet 4.5 (47 %) and Opus 4.5 (70 %).

More importantly, the model was explicitly trained on real enterprise workflows: data analysis, report generation, document editing, and iterative tool use (web search, code execution, etc.). In DeepSeek’s internal evaluation, V4-Pro-Max outperformed Opus 4.6-Max on 63 % of realistic tasks — largely because it proactively adds verification steps and delivers coherent narratives instead of bullet-point dumps.

Internal Poll at DeepSeek Says It All

When 85 of their own developers were asked whether they would switch to V4-Pro as their primary coding model:

- 52 % said yes immediately;

- 39 % said they are leaning strongly toward yes;

- Less than 9 % said no.

That’s not marketing spin — that’s engineers who have been living with the model for months.

The Deeper Story: Architecture Over Raw Scale

The real lesson of V4 isn’t another line on a leaderboard. It’s that smart architectural innovations (sparse attention, FP4 quantization, hybrid KV-cache, and more) now deliver more performance than simply throwing more parameters at the problem. V4-Flash activates only ~13 billion parameters during inference yet routinely beats models several times its size.
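The ~13B-active figure reflects mixture-of-experts (MoE) routing: a small router scores the experts for each token and only the top few actually run. Below is a generic top-k MoE sketch with toy scalar experts; the expert count, k=2, and the softmax-over-selected gating are assumptions for illustration, not V4-Flash's actual configuration.

```python
import math

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Select the k highest-scoring experts and renormalize their gate weights."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    gates = softmax([router_logits[i] for i in top])
    return list(zip(top, gates))

calls = [0] * 8  # how many times each of 8 toy experts actually executes

def make_expert(idx: int, scale: float):
    def expert(x: float) -> float:
        calls[idx] += 1  # record that this expert did work
        return scale * x
    return expert

experts = [make_expert(i, float(i + 1)) for i in range(8)]

def moe_forward(x: float, router_logits: list[float], k: int = 2) -> float:
    """Weighted sum over only the routed experts; unselected experts are skipped."""
    return sum(g * experts[i](x) for i, g in top_k_route(router_logits, k))

y = moe_forward(1.0, [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0], k=2)
print(sum(calls), "of", len(experts), "experts ran")  # prints "2 of 8 experts ran"

# The article's V4-Flash figures: ~13B active out of 284B total parameters.
print(f"active fraction: {13 / 284:.1%}")  # prints "active fraction: 4.6%"
```

Total parameters set the memory budget, while active parameters set the per-token compute; by the article's numbers, under 5% of V4-Flash's weights do work on any given token.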

Why This Matters for the Entire Industry

DeepSeek continues its signature playbook: identify the best ideas across the ecosystem, combine them ruthlessly, and ship them for free while others charge enterprise premiums. They aren’t “overtaking everyone” in one dramatic leap — they’re methodically closing the gap, month after month.

And that steady pressure is precisely what keeps the closed labs honest. Every time an open-source model like V4 makes million-token context cheap and reliable, it forces OpenAI, Anthropic, and Google to rethink their pricing and product strategy.

The age of “good enough” closed models dominating every use case is ending. The age of open, efficient, long-context intelligence that anyone can run, fine-tune, or build agents on has begun.

Welcome to DeepSeek-V4.

The open-source frontier just moved — again.