Claude Is the Most Expensive AI — If You Don’t Speak English

There’s a quiet, expensive reality hiding inside every prompt you send to Claude: if you’re not writing in English, you’re paying a steep linguistic tax.

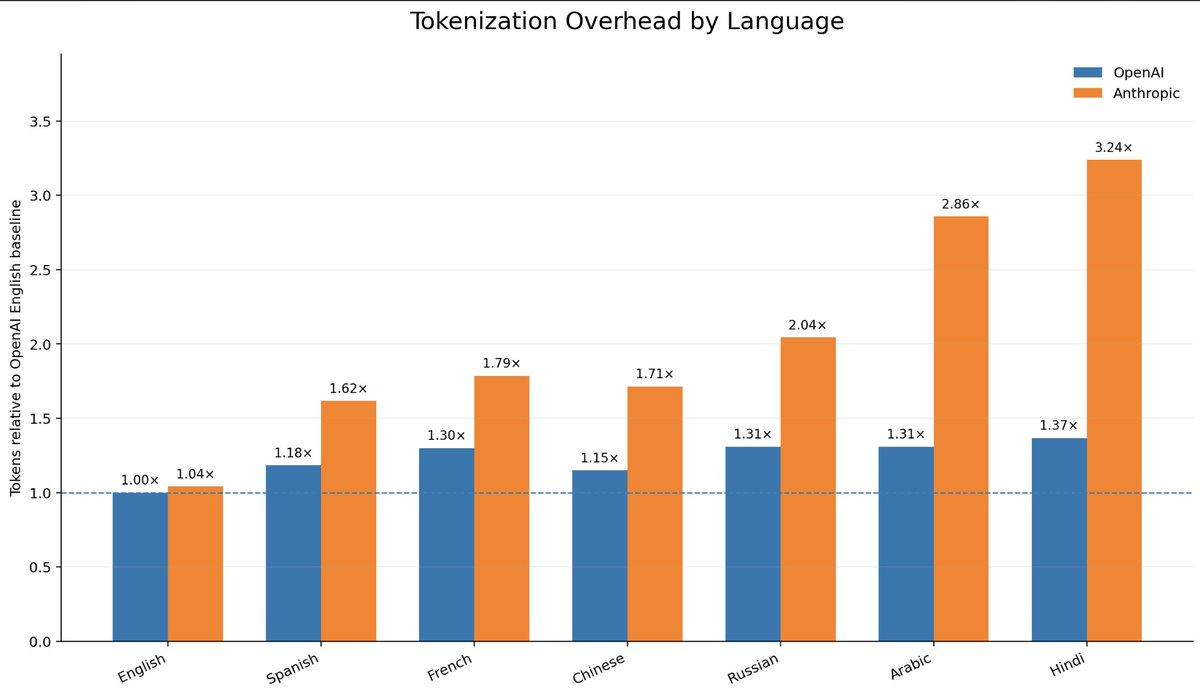

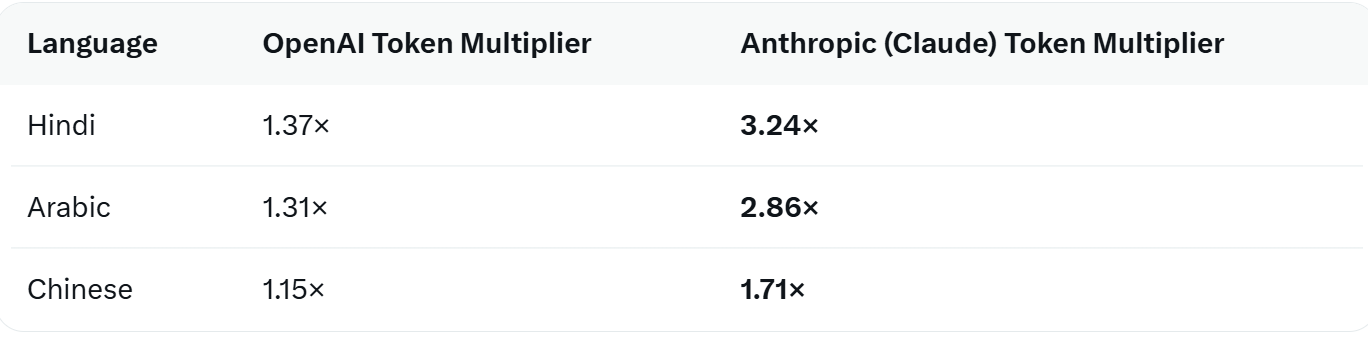

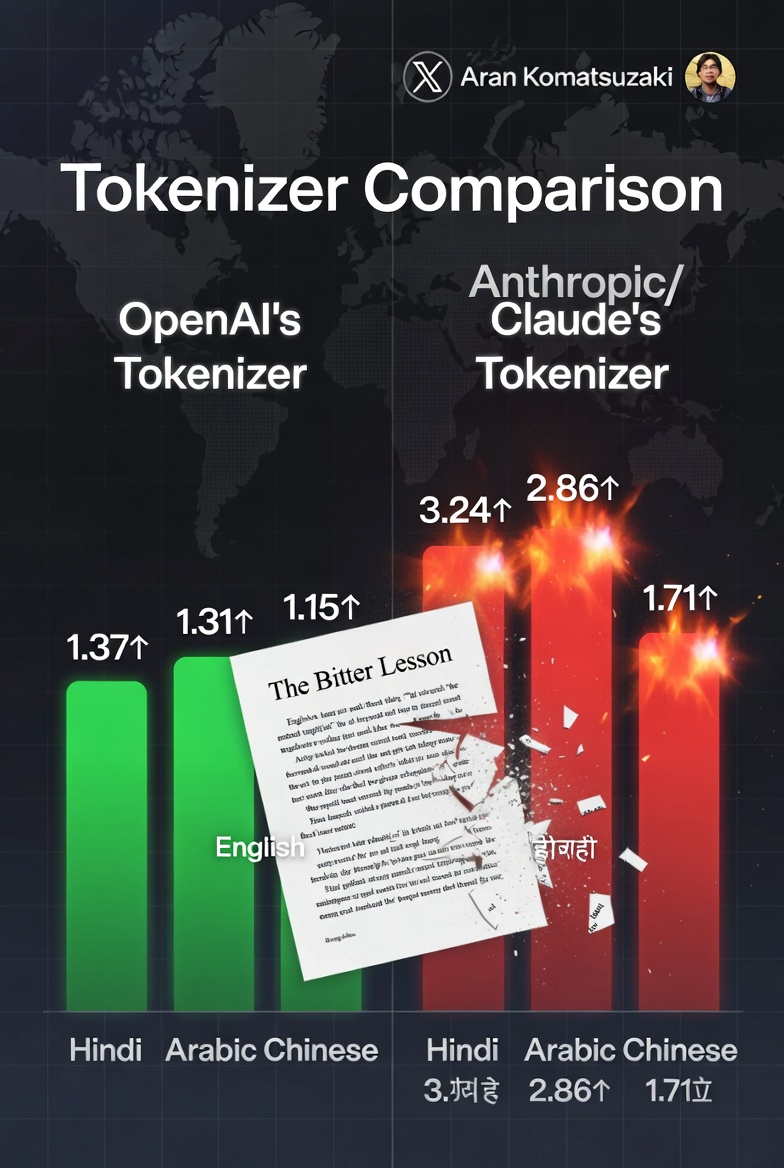

On April 28, 2026, AI researcher Aran Komatsuzaki ran a simple but devastating experiment. He took Rich Sutton’s famous 2019 essay The Bitter Lesson — a foundational text in AI — translated it into multiple languages, and fed each version through the tokenizers of OpenAI and Anthropic (Claude). He then normalized everything against the English version on OpenAI’s tokenizer as the 1.0× baseline.
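The normalization step is easy to sketch. The Python below is only an illustration of the method described above, not Komatsuzaki’s actual code: the two counters are crude stand-ins (one token per code point, one token per UTF-8 byte) rather than real OpenAI or Anthropic tokenizers, the names `tokenizer_a` and `tokenizer_b` are hypothetical, and the sample sentences are placeholders rather than the essay itself.

```python
from typing import Callable, Dict, Tuple

TokenCounter = Callable[[str], int]


def codepoint_counter(text: str) -> int:
    """Stand-in counter: one 'token' per Unicode code point (NOT a real tokenizer)."""
    return len(text)


def utf8_byte_counter(text: str) -> int:
    """Stand-in counter: one 'token' per UTF-8 byte (NOT a real tokenizer)."""
    return len(text.encode("utf-8"))


def count_all(
    texts: Dict[str, str], counters: Dict[str, TokenCounter]
) -> Dict[Tuple[str, str], int]:
    """Count tokens for every (tokenizer, language) pair."""
    return {
        (name, lang): counter(text)
        for name, counter in counters.items()
        for lang, text in texts.items()
    }


def normalize(
    counts: Dict[Tuple[str, str], int], baseline: Tuple[str, str]
) -> Dict[Tuple[str, str], float]:
    """Divide every count by the baseline count, so the baseline becomes 1.0x."""
    base = counts[baseline]
    if base <= 0:
        raise ValueError("baseline token count must be positive")
    return {key: n / base for key, n in counts.items()}


# Placeholder sentences (assumption), not the text used in the experiment.
texts = {
    "en": "The cat sat on the mat.",
    "hi": "बिल्ली चटाई पर बैठी।",
}
counters = {"tokenizer_a": codepoint_counter, "tokenizer_b": utf8_byte_counter}

# English on tokenizer_a plays the role of the 1.0x baseline.
ratios = normalize(count_all(texts, counters), baseline=("tokenizer_a", "en"))
for (name, lang), ratio in sorted(ratios.items()):
    print(f"{name:12s} {lang}: {ratio:.2f}x")
```

To get closer to the real experiment, you would swap the stand-in counters for real ones, for example the `tiktoken` library for OpenAI models and Anthropic’s token-counting API for Claude, and feed in full translations of the same text.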

The results were brutal for non-English speakers — and especially brutal for Claude users.

The Numbers Don’t Lie

Same text. Same meaning. But on Claude, the Hindi version burns through more than three times as many tokens as the English baseline, and the Arabic version nearly 3×. Even Chinese, one of the world’s most widely used languages, costs 71% more on Claude than that baseline.

This isn’t a minor inefficiency. It’s a direct hit to your wallet and your context window.

What “Linguistic Tax” Actually Means

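Models read and bill text in tokens, not words or characters. A tokenizer whose vocabulary skews toward English packs English into few tokens and splits the same content in other languages into many more pieces.

Result?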

- You pay more per message (input tokens = money).

- You hit the context window faster.

- The model has to process more tokens per idea, which can add latency and may subtly affect reasoning quality.
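A rough sketch of that arithmetic, in Python. The multipliers come from the figures quoted above (1.71× for Chinese, and 3.0× as a rounded lower bound for Hindi’s “more than three times”); the price per million input tokens and the context window size are illustrative assumptions, not any provider’s actual numbers.

```python
def input_cost(baseline_tokens: int, multiplier: float, price_per_million: float) -> float:
    """Cost of a prompt that needs `baseline_tokens` at the 1.0x baseline."""
    return baseline_tokens * multiplier * price_per_million / 1_000_000


def effective_context(context_window: int, multiplier: float) -> float:
    """How much baseline-equivalent content fits in the window."""
    return context_window / multiplier


PRICE_PER_MILLION = 3.0    # assumption: USD per million input tokens
CONTEXT_WINDOW = 200_000   # assumption: window size in tokens

# Multipliers taken from the article's quoted figures.
multipliers = {"baseline": 1.0, "Chinese": 1.71, "Hindi (at least)": 3.0}

for label, m in multipliers.items():
    cost = input_cost(1_000_000, m, PRICE_PER_MILLION)
    room = effective_context(CONTEXT_WINDOW, m)
    print(f"{label:18s} cost per 1M baseline tokens: ${cost:.2f}, "
          f"effective context: {room:,.0f} baseline tokens")
```

The two effects scale in opposite directions: the bill is multiplied by the tax, while the usable context window is divided by it.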

Anthropic’s tokenizer is noticeably worse at this than OpenAI’s. In follow-up tests across more languages and models, Komatsuzaki found Anthropic consistently levies the highest non-English tax of any major provider.

Chinese models (Qwen, DeepSeek, Kimi) actually treat Chinese more efficiently than English. Gemini and Qwen performed best overall for non-English text. Claude stood alone at the top of the penalty list.

Why This Hits Harder Than It Looks

- A $20/month Claude subscription buys a lot less when every prompt uses 2–3× as many tokens.

- In regions where AI access is already a larger percentage of median income, the tax compounds the inequality.

- Developers building multilingual apps or agents get slammed with higher API bills and more frequent context overflows.

And the irony is thick: The Bitter Lesson itself argues that general methods that scale with compute eventually beat clever human-designed shortcuts. Yet here we are — tokenizers, the very first step in the pipeline, still carrying heavy human biases from training data distribution.

The Bitter Lesson for Tokenizers

Until tokenizers stop favoring English, the tax is real.

If you’re a non-English user and your Claude bills feel surprisingly high, you’re not imagining it. You’re just paying the price of speaking your own language to an AI that was never truly built for it.

Claude may be one of the smartest models on the planet.

But right now, it’s also one of the most expensive — especially if English isn’t your first language.