Uber Isn’t a Taxi Company Anymore. It Never Really Was

For years Uber told the world it was “just” a ride-hailing app. The cars, the drivers, the fares — that was the product. Everything else was secondary.

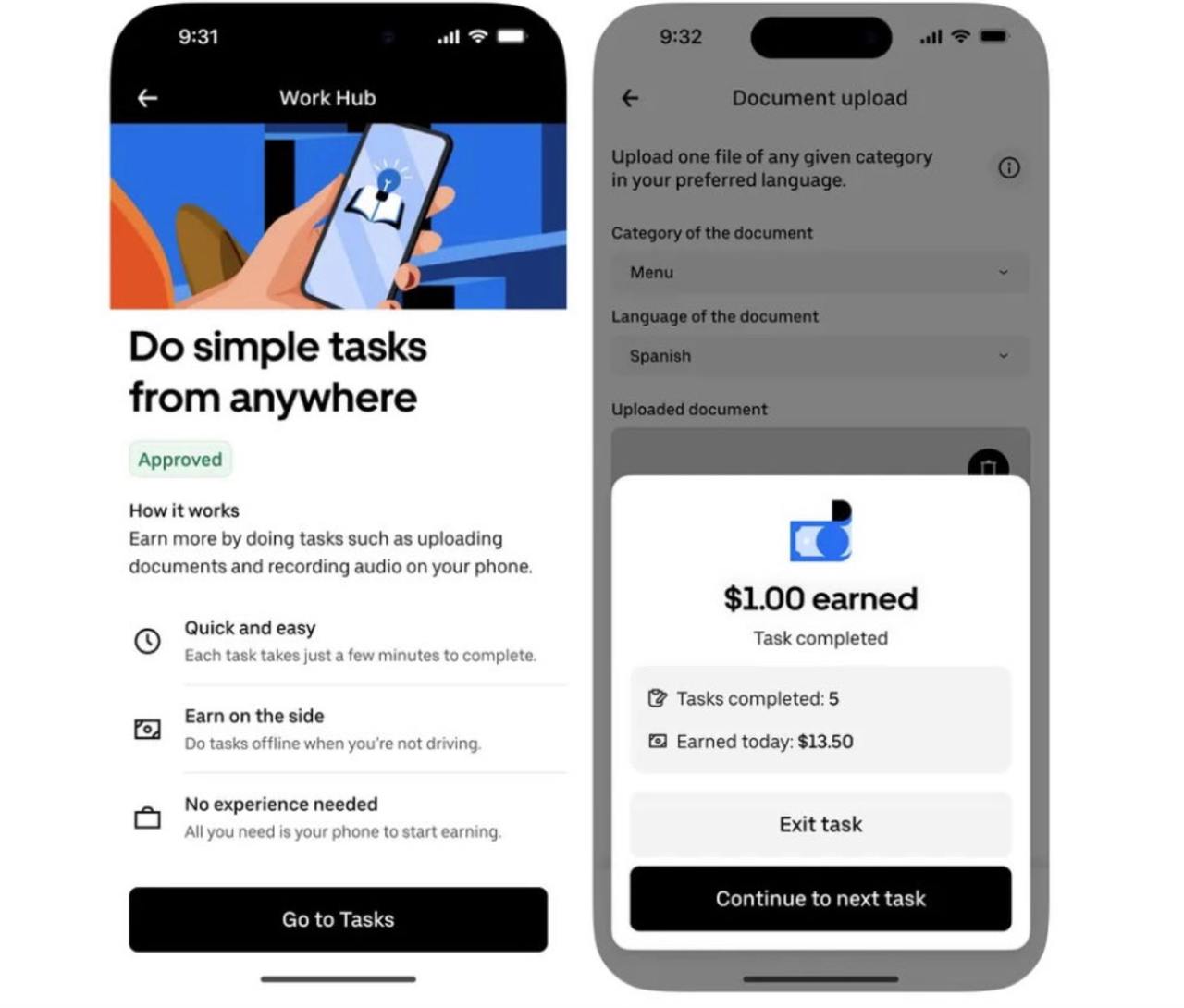

Six months ago the company quietly started turning its millions of drivers into part-time AI data labelers.

Through the Uber Driver app, idle time between rides became paid micro-gigs: tagging street signs, uploading photos of vehicles, recording voice samples in different accents, even narrating scripts for multilingual datasets. Payments hit accounts within 24 hours.

CEO Dara Khosrowshahi called it “making Uber the best platform for flexible work.” In reality, Uber was already pivoting from moving people to feeding the next generation of AI.

Now the pivot has gone nuclear.

On May 1, 2026, Uber CTO Praveen Neppalli Naga stood on stage at TechCrunch’s StrictlyVC event in San Francisco and laid out the real endgame.

Uber wants to transform its global fleet of human-driven cars into the largest distributed sensor grid on Earth — a rolling, always-on data collection machine for autonomous vehicle companies and anyone training “physical AI” models.

From AV Labs to a Planet-Sized Sensor Network

The plan builds directly on AV Labs, the division Uber quietly launched in late January 2026. Right now AV Labs runs a small dedicated fleet of sensor-equipped test vehicles. But Naga was crystal clear: “That is the direction we want to go eventually” — meaning outfitting ordinary driver-owned cars with additional sensors.

For now, data collection happens passively through drivers’ smartphones: cameras, accelerometers, and GPS, on every single trip. The next step is hardware. Add lidar, radar, and higher-resolution cameras, and the network becomes far more valuable.

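Why does this matter so much? Because the hardest part of autonomous driving is the rare event that a small test fleet may never encounter: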

- A rooster suddenly flying out from behind a truck.

- A sinkhole appearing overnight.

- A person in a bizarre costume asleep on a crosswalk in San Francisco.

- Black ice in a mountain town at 3 a.m. during a snowstorm.

Waymo and Cruise have built impressive datasets, but only in a handful of cities and with tightly controlled test fleets. Uber operates in over 10,000 cities across 70+ countries. Millions of rides every single day. Every climate. Every style of driving. Every set of traffic laws. Every flavor of urban chaos.

That diversity is pure gold for training robust AI.

The Niantic Problem — Solved by Accident

Niantic had to build games like Pokémon Go to get people to scan the world for its mapping models. Uber doesn’t need to pay anyone extra or change driver behavior. The rides are already happening.

The sensors are already in pockets and, soon, bolted to dashboards. Drivers simply keep doing what they do — and the data flows.

Uber is also building an “AV cloud”: a massive labeled sensor data library that its 25 autonomous vehicle partners can query, simulate against, and run in shadow mode during real Uber trips. It’s not just data. It’s infrastructure.

The Bigger Picture: Physical AI Is Coming

While everyone obsesses over chatbots and image generators, the hardest — and most valuable — frontier of AI is physical intelligence: machines that understand and safely operate in the messy, unpredictable real world.

Uber just positioned itself as one of the few companies that can supply that data at planetary scale.

This isn’t “another monetization feature.” This is a full corporate metamorphosis. The ride-hailing giant is quietly becoming the indispensable backbone of the autonomous and robotic future, while still taking its cut of fares earned by human drivers who are unknowingly helping build the very technology that will one day replace them.

The taxi was never the destination. It was the excuse to put millions of mobile sensors on the road.

And now the real product is finally coming into focus.