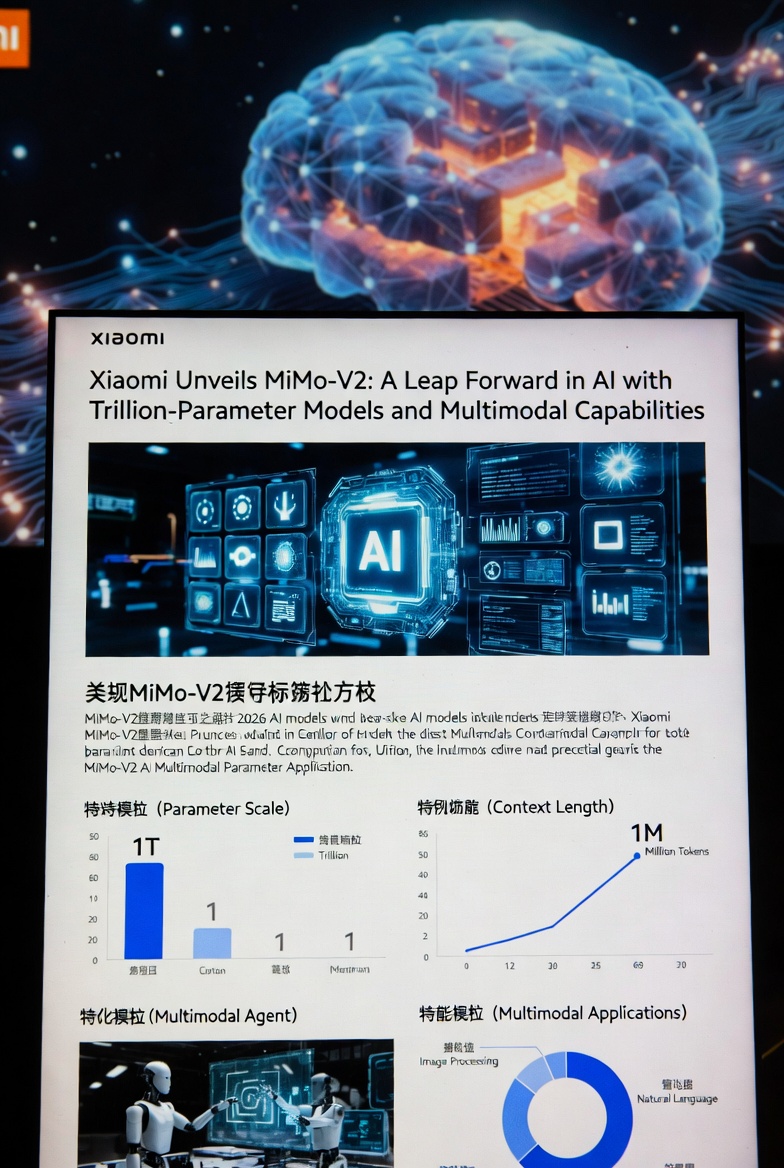

Xiaomi Unleashes MiMo-V2 Family: Trillion-Parameter Agent Powerhouse MiMo-V2-Pro (Ex-Hunter Alpha), Multimodal Omni, and Expressive TTS Hit the Scene

Xiaomi officially launched its ambitious MiMo-V2 family of AI models on March 18–19, 2026, marking a bold step into the frontier of agentic and multimodal intelligence. The release includes three specialized models: the flagship reasoning powerhouse MiMo-V2-Pro (previously known to the public as the mysterious Hunter Alpha), the unified multimodal MiMo-V2-Omni, and the highly expressive text-to-speech system MiMo-V2-TTS.

All three are now accessible via the official MiMo API platform at platform.xiaomimimo.com, as well as through MiMo Studio. The company is offering developers worldwide free access for a limited time (reportedly the first week after launch), accelerating adoption in the rapidly heating “Agent Era.”

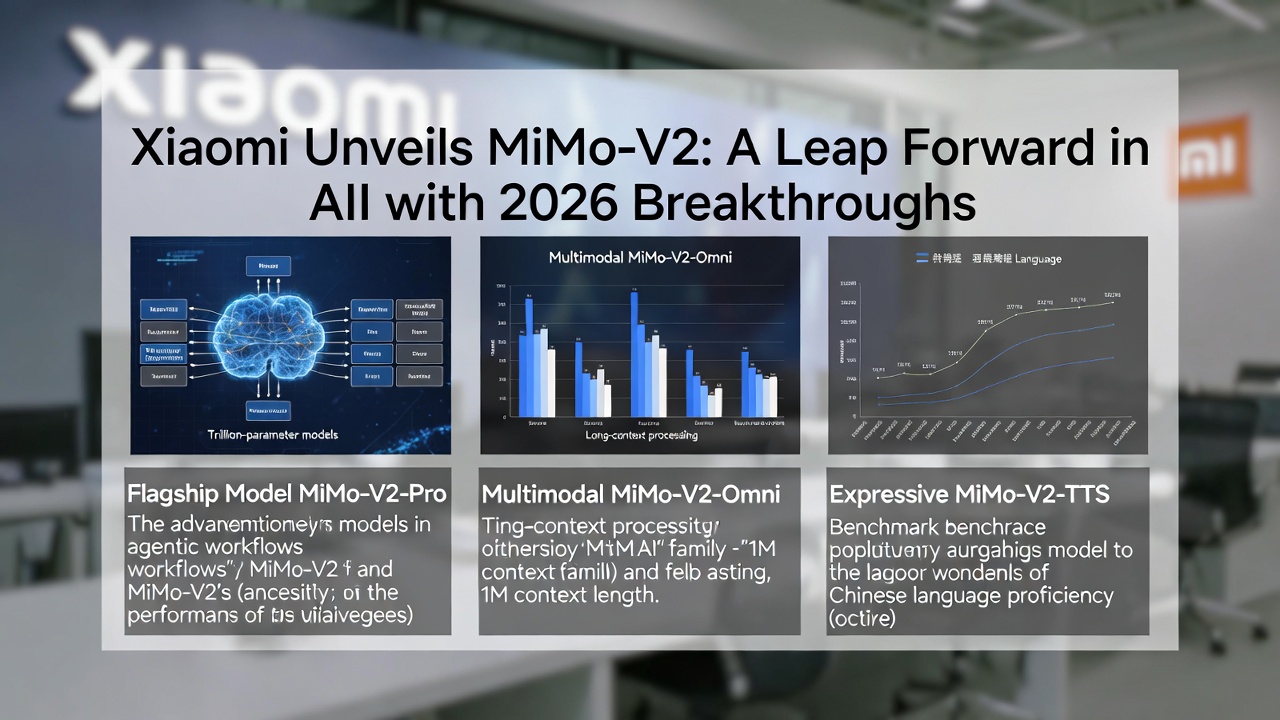

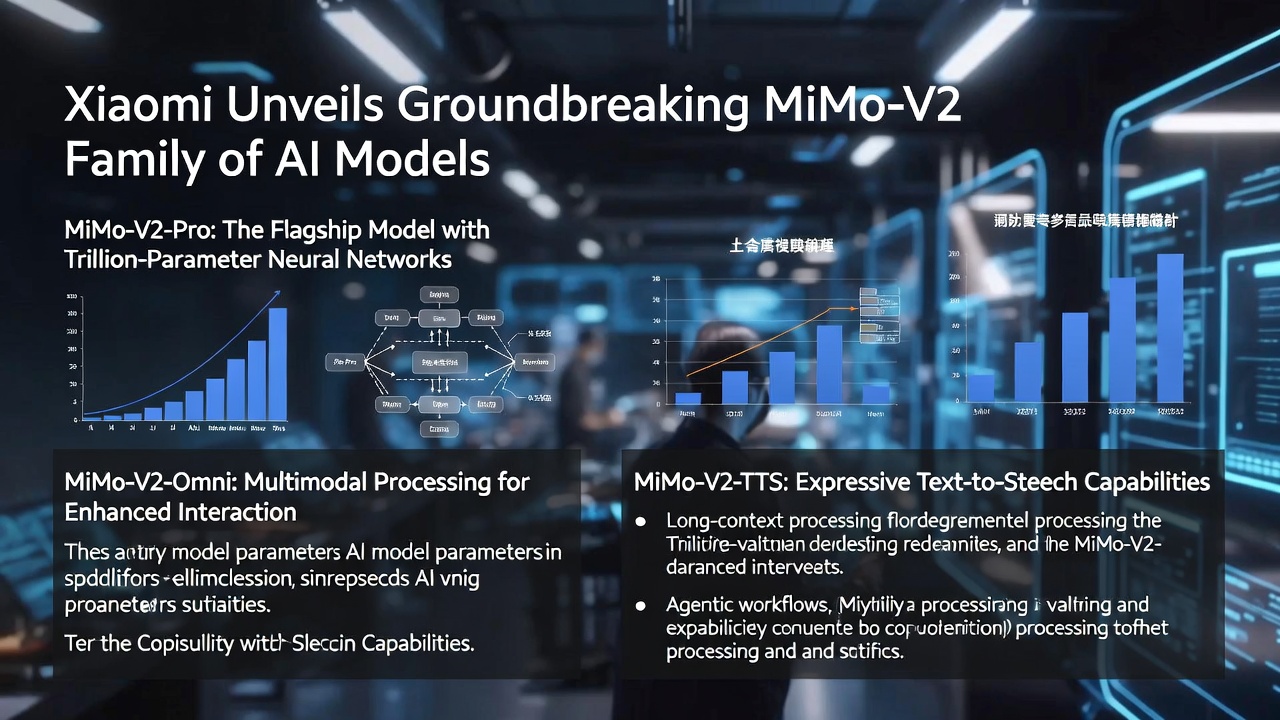

MiMo-V2-Pro: The Trillion-Scale Reasoning Flagship

Key technical highlights include:

- Total parameters: ~1 trillion (sum across experts);

- Active parameters at inference: 42 billion (MoE architecture);

- Context window: up to 1 million tokens;

- Hybrid attention mechanism optimized for sustained multi-step reasoning and tool use.
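To put the parameter figures above in perspective, the short sketch below works out what share of the weights is active per token and roughly how much storage the full weight set would need. The parameter counts come from the list above; the numeric precisions are illustrative assumptions, since the announcement does not say how the weights are stored or served.

```python
# Figures quoted in the article for MiMo-V2-Pro.
TOTAL_PARAMS = 1_000_000_000_000   # ~1 trillion, summed across experts
ACTIVE_PARAMS = 42_000_000_000     # activated at inference (MoE routing)


def active_fraction(active: int, total: int) -> float:
    """Share of the weights that take part in a single forward pass."""
    return active / total


def weight_storage_gb(params: int, bytes_per_param: float) -> float:
    """Rough storage for the weights alone (no KV cache, no activations)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    share = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"Active share per token: {share:.1%}")

    # ASSUMPTION: these precisions are hypothetical examples, not published
    # deployment details for MiMo-V2-Pro.
    for label, width in (("16-bit", 2.0), ("8-bit", 1.0)):
        size = weight_storage_gb(TOTAL_PARAMS, width)
        print(f"{label} weights: about {size:,.0f} GB")
```

Only about 4% of the weights are exercised for any given token, which is why a model of this total size can plausibly be priced like a much smaller dense model.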

The model first appeared anonymously on OpenRouter under the codename Hunter Alpha in early March 2026, quickly topping usage charts and processing over 1 trillion tokens during its stealth testing phase. Community speculation linked it to DeepSeek because of architectural and performance similarities. The model turned out to be Xiaomi's own, but the reveal did confirm a connection: the MiMo division is led by a former core DeepSeek contributor.

Reported benchmark results:

- Artificial Analysis Intelligence Index — 49 points (#8 worldwide, #2 among Chinese models);

- PinchBench — 84.0 (or ~81.0 in some reports) → #3 globally, right behind leading Claude variants;

- ClawEval — 61.5 → #3 globally, surpassing recent GPT-5.x iterations;

- GDPval-AA (agentic effectiveness) — Elo 1434 → best result among Chinese models.

API pricing is aggressively competitive:

- For context ≤ 256K: $1 input / $3 output per million tokens;

- For 256K–1M context: $2 input / $6 output per million tokens.
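The two pricing tiers above can be turned into a rough per-request cost estimate. The following is a minimal sketch, not an official billing formula: it assumes the tier is selected by the number of input (context) tokens in the request, that "256K" means 256,000 tokens, and that both input and output are billed at the selected tier's rates. The `Tier` class and `estimate_cost_usd` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    max_context_tokens: int        # upper bound of the tier (inclusive)
    input_usd_per_million: float
    output_usd_per_million: float


# Rates as quoted in the article for MiMo-V2-Pro.
# ASSUMPTION: "256K" is taken as 256,000 tokens and "1M" as 1,000,000.
PRO_TIERS = (
    Tier(256_000, 1.0, 3.0),
    Tier(1_000_000, 2.0, 6.0),
)


def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      tiers: tuple = PRO_TIERS) -> float:
    """Estimate the cost of one request in US dollars.

    ASSUMPTION: the tier is chosen by input (context) size alone; the
    actual billing rule may differ.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    for tier in tiers:
        if input_tokens <= tier.max_context_tokens:
            total = (input_tokens * tier.input_usd_per_million
                     + output_tokens * tier.output_usd_per_million)
            return total / 1_000_000
    raise ValueError("request exceeds the largest listed context tier")


if __name__ == "__main__":
    # A 100K-token prompt falls in the lower tier, a 500K-token prompt in the upper.
    print(f"${estimate_cost_usd(100_000, 10_000):.2f}")
    print(f"${estimate_cost_usd(500_000, 10_000):.2f}")
```

Under these assumptions, crossing the 256K boundary doubles both rates, so splitting or trimming very long contexts may be worth considering for cost-sensitive workloads.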

MiMo-V2-Omni: True Multimodal Agent Foundation

MiMo-V2-Omni introduces native, unified processing of text, images, video, and audio through dedicated modality-specific encoders feeding into a shared backbone.

Key capabilities include:

- Continuous audio understanding for >10 hours in a single request (claimed industry-leading duration);

- Full multimodal agentic behavior — the model can perceive, reason, browse, negotiate, and act across modalities.

Impressive early benchmark wins:

- MM-BrowserComp — 52.0 (outperforms Gemini 3 Pro in several reports)

- GDPval-AA — 1435 Elo (again ahead of Gemini 3 Pro)

Live demos showcased autonomous real-world performance:

- One instance completed an entire online shopping cycle: searching reviews on Xiaohongshu, comparing offers on JD.com, bargaining via customer support chat, and placing an order.

- Another started from a single text prompt and autonomously scripted, filmed (4 scenes), edited, and voiced a 15-second TikTok video, then fixed rendering errors, uploaded it, and published it.

Pricing: $0.40 input / $2.00 output per million tokens — notably affordable for a frontier multimodal system.

MiMo-V2-TTS: Human-Like, Expressive Voice Synthesis

The third pillar, MiMo-V2-TTS, targets natural, controllable speech generation.

- Emotion control at the per-sentence level;

- Singing with preserved pitch, rhythm, and style;

- Strong support for Chinese dialects (Sichuanese, Henanese, Cantonese, Taiwanese, etc.);

- Automatic prosody inference from standard punctuation, particles, and emphasis — no extra markup required.

Other languages are not prominently advertised yet. The model is currently offered free for a limited period (exact end date not specified in announcements).

Leadership and Strategic Context

The MiMo division is led by Luo Fuli, a former core contributor to DeepSeek’s breakthrough models (notably R1 and V-series). Her move to Xiaomi in late 2025 brought significant architectural DNA from one of China’s most respected open-source labs, helping explain the rapid capability jump and the familiar “feel” during Hunter Alpha’s anonymous run.

The Bigger Picture

- Chinese labs and conglomerates are delivering trillion-scale reasoning, long-context multimodality, and expressive voice at prices that undercut many Western counterparts.

- Agent-focused design (planning, browser control, multi-step execution, cross-modal chaining) is now the primary battleground — pure chat is no longer sufficient.

- Free / ultra-low-cost introductory access is becoming standard for frontier models, dramatically lowering barriers for developers and startups.

Whether MiMo-V2-Pro dethrones current leaders in the coming months, or whether Omni and TTS set new standards for embodied and voice-driven agents, one thing is certain: Xiaomi has entered the AI frontier not as a follower, but as a serious contender — and it’s moving fast.