Why “This New Model Fixed a Bug the Old One Couldn’t” Is Rarely the Story You Think It Is

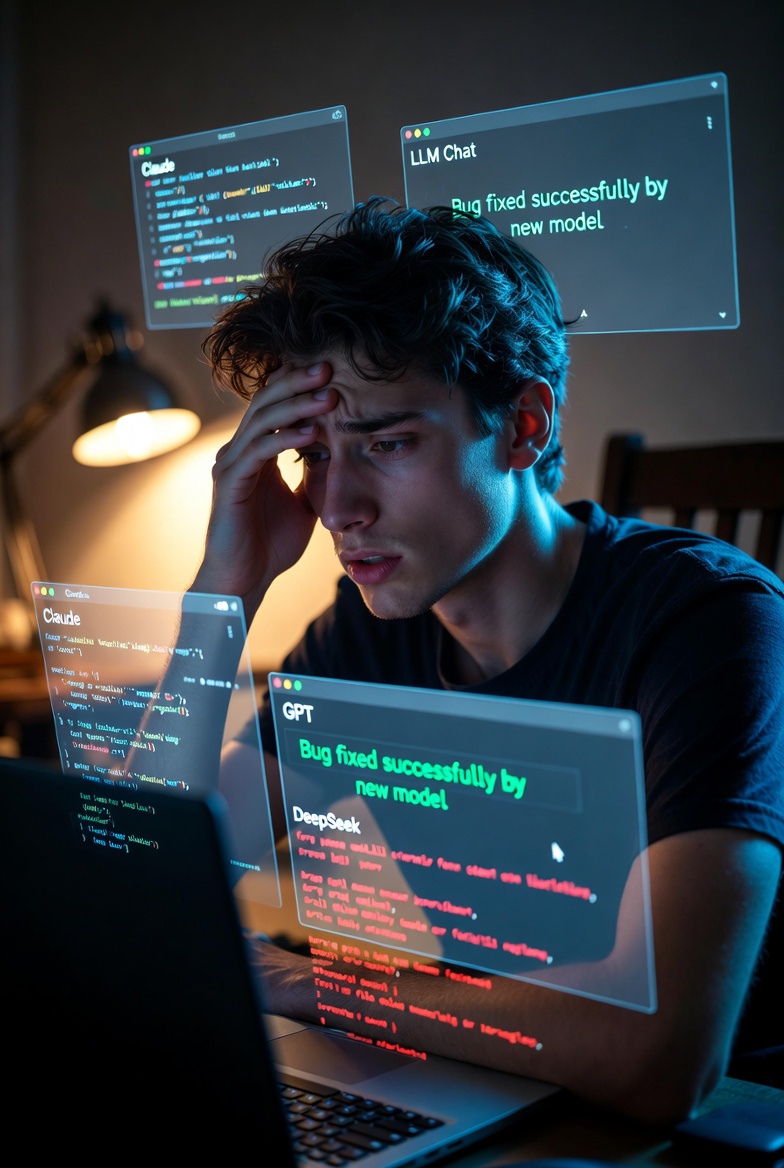

You’ve seen the tweet a hundred times:

“Claude 4.5 couldn’t fix this bug in 20 prompts. Switched to Grok 4 / DeepSeek-V4 / [new hotness] and it solved it in one shot. Time to switch permanently.”

The story spreads like wildfire. It feels like definitive proof that Model Y has surpassed Model X. But in reality, these isolated hero moments are much less meaningful than they appear — and chasing them can lead to worse results overall.

Here’s why such anecdotes are poor signals for deciding which LLM to use.

1. Specialization Beats “Overall Intelligence” in Narrow Domains

One team might have tuned its model around a bug-finding pipeline with a very particular system prompt and chain-of-thought structure. Another team trained a general-purpose model on a much larger and more diverse dataset, but that specific bug-hunting pattern simply wasn’t emphasized. The result? The “weaker” model looks magically better on that one problem.

This isn’t an exception. It’s the rule.

2. Non-Determinism + Sample Size of One

LLMs are stochastic. Temperature, seed, slight variations in prompt formatting, or even the phase of the moon can change the outcome.

What failed on the first try with Model X might have succeeded on the third, fifth, or tenth attempt. When we see Model Y nail it immediately, we rarely run the same test ten times on both models to compare success rates. We just celebrate the viral win.

A single lucky run is not a benchmark.
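
To make that concrete, here is a minimal sketch of how repeated runs could be compared. It assumes you have already run the same prompt several times on each model and counted the successes. The function name `fisher_exact_two_sided` and the example counts are illustrative, not taken from any real experiment. It uses Fisher's exact test, written with only the standard library, to ask how likely the observed gap would be if both models had the same true success rate.

```python
from math import comb


def fisher_exact_two_sided(a_success, a_trials, b_success, b_trials):
    """Two-sided Fisher exact p-value for success counts of two models."""
    if not (0 <= a_success <= a_trials and 0 <= b_success <= b_trials):
        raise ValueError("successes must be between 0 and the number of trials")

    total_success = a_success + b_success
    denom = comb(a_trials + b_trials, total_success)

    def prob(k):
        # Hypergeometric probability that model A accounts for exactly k
        # of the successes, given the totals are fixed.
        return comb(a_trials, k) * comb(b_trials, total_success - k) / denom

    observed = prob(a_success)
    lowest = max(0, total_success - b_trials)
    highest = min(a_trials, total_success)
    # Add up every outcome that is at least as unlikely as the observed one.
    return sum(
        prob(k)
        for k in range(lowest, highest + 1)
        if prob(k) <= observed * (1 + 1e-9)
    )


# The viral tweet: one failed attempt on Model X, one success on Model Y.
print(fisher_exact_two_sided(0, 1, 1, 1))  # 1.0, no evidence either way

# Hypothetical repeated test: 3 of 10 successes versus 9 of 10.
print(fisher_exact_two_sided(3, 10, 9, 10))
```

With one attempt per model, the p-value is 1.0: the result is fully consistent with both models being equally good. A gap only starts to carry weight once each model has been tried enough times for a success rate to emerge.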

3. The Perception Trap (and the Hidden Switching Cost)

When a new model solves something the old one couldn’t, our brains automatically assume:

“New model = strictly better at everything + this new thing.”

But that’s false.

Every model has its own “quirks” — preferred prompting style, tolerance for certain code patterns, way of formatting outputs, etc. Tasks that worked reliably on your old model may suddenly become flaky or require completely different prompting on the new one.

This is exactly why switching models feels painful even when the new one is “objectively better.” You lose muscle memory. You lose the mental model of “how to talk to this thing.” A simple task that used to take 30 seconds now takes five frustrating attempts, and your brain screams “this model is stupid.”

The viral story only shows the one thing that improved. It rarely shows the dozen small things that quietly got worse.

Even Open-Source vs Closed Models Show the Same Pattern

We regularly see cases where a much smaller or newer open-source model (DeepSeek-V4, for example) solves a problem that Opus 4.6 or GPT-5.4 struggled with. Does that mean DeepSeek is now the best model overall? Of course not. One or two cherry-picked examples prove almost nothing.

What Would Actually Be a Good Signal?

The only semi-reliable proxy would be aggregate statistics: how many unique “it finally worked!” stories appear for a new model compared to the previous one, normalized by the size of each model’s user base.

But almost nobody tracks this systematically. So we’re left with loud Twitter anecdotes and survivorship bias.
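
If such counts were ever collected, the normalization itself would be simple arithmetic. The sketch below uses made-up numbers purely for illustration; `stories_per_100k` is a hypothetical helper, and no real user-base or story counts are implied.

```python
def stories_per_100k(story_count, active_users):
    """Unique 'it finally worked!' stories per 100,000 active users."""
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return story_count * 100_000 / active_users


# Invented figures: the new model has more stories in absolute terms,
# but also a much larger user base generating them.
old_rate = stories_per_100k(story_count=400, active_users=2_000_000)
new_rate = stories_per_100k(story_count=900, active_users=6_000_000)

print(f"old model: {old_rate} stories per 100k users")
print(f"new model: {new_rate} stories per 100k users")
```

In this invented case the new model produces more than twice as many stories, yet fewer per user. Raw anecdote volume mostly tracks how many people are trying the model.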

The Only Test That Matters: Your Own Workflow

The question worth asking is this: in my stack (my programming language, my libraries, my preferred prompting style, my LLM client, my coding habits), does this new model consistently solve the kinds of problems I actually hit?

If the answer is “yes, and it happens often enough to be worth the switching cost,” then switching makes sense.

If it’s “sometimes, and I keep running into weird regressions on things that used to just work,” then you’re probably better off staying put and learning to prompt the current model more effectively.
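
One way to answer that question with something firmer than a feeling is to keep a small list of tasks from your own work and run each one several times against both models. The sketch below is only an outline under stated assumptions: a "solver" is a hypothetical function you would write yourself that sends a task to a model and returns True if the result passed your own check. The two stub solvers and the task names are placeholders so the example runs on its own.

```python
def success_rates(solver, tasks, attempts=5):
    """Fraction of attempts on which the solver passed, per task."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    return {
        task: sum(1 for _ in range(attempts) if solver(task)) / attempts
        for task in tasks
    }


def compare(old_rates, new_rates, min_delta=0.2):
    """Tasks whose success rate moved by at least min_delta in either direction."""
    improved = sorted(t for t in old_rates if new_rates[t] - old_rates[t] >= min_delta)
    regressed = sorted(t for t in old_rates if old_rates[t] - new_rates[t] >= min_delta)
    return improved, regressed


# Placeholder tasks and stub solvers; real ones would call your models
# and verify the output (for example, by running your test suite).
tasks = ["async race condition", "regex refactor", "sql migration"]


def old_model(task):
    return task != "async race condition"


def new_model(task):
    return task != "regex refactor"


improved, regressed = compare(
    success_rates(old_model, tasks),
    success_rates(new_model, tasks),
)
print("improved:", improved)
print("regressed:", regressed)
```

The `regressed` list is the part a viral screenshot never shows. If it is longer than `improved` on the tasks you care about, the switch is probably not worth it yet.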

Viral bug-fix stories are fun.

They make for great screenshots.

But they are almost never a good reason to abandon a model you already understand well.

The smartest move is almost always the boring one: keep using what works reliably for you, and only switch when the evidence becomes overwhelming in your own daily work — not because one shiny example went viral.
