Topaz Labs and NVIDIA Team Up: NeuroStream Slashes VRAM Needs by Up to 95% for Local AI Image Processing

Topaz Labs has announced one of the more practical developments in consumer AI imaging this year. Working closely with NVIDIA, the company introduced Topaz NeuroStream, a proprietary inference optimization technology that it says cuts VRAM requirements for large AI models by up to 95%, so they can run locally on everyday hardware instead of on high-end GPUs or cloud servers.

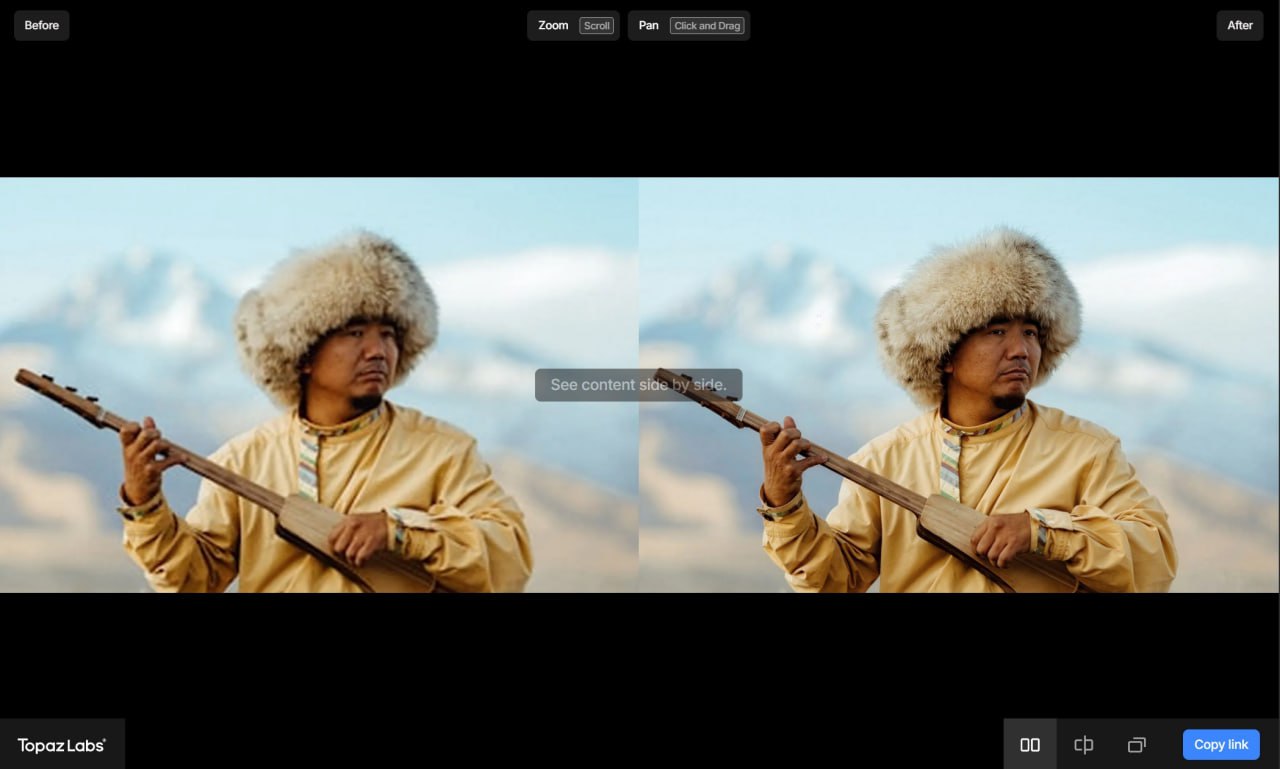

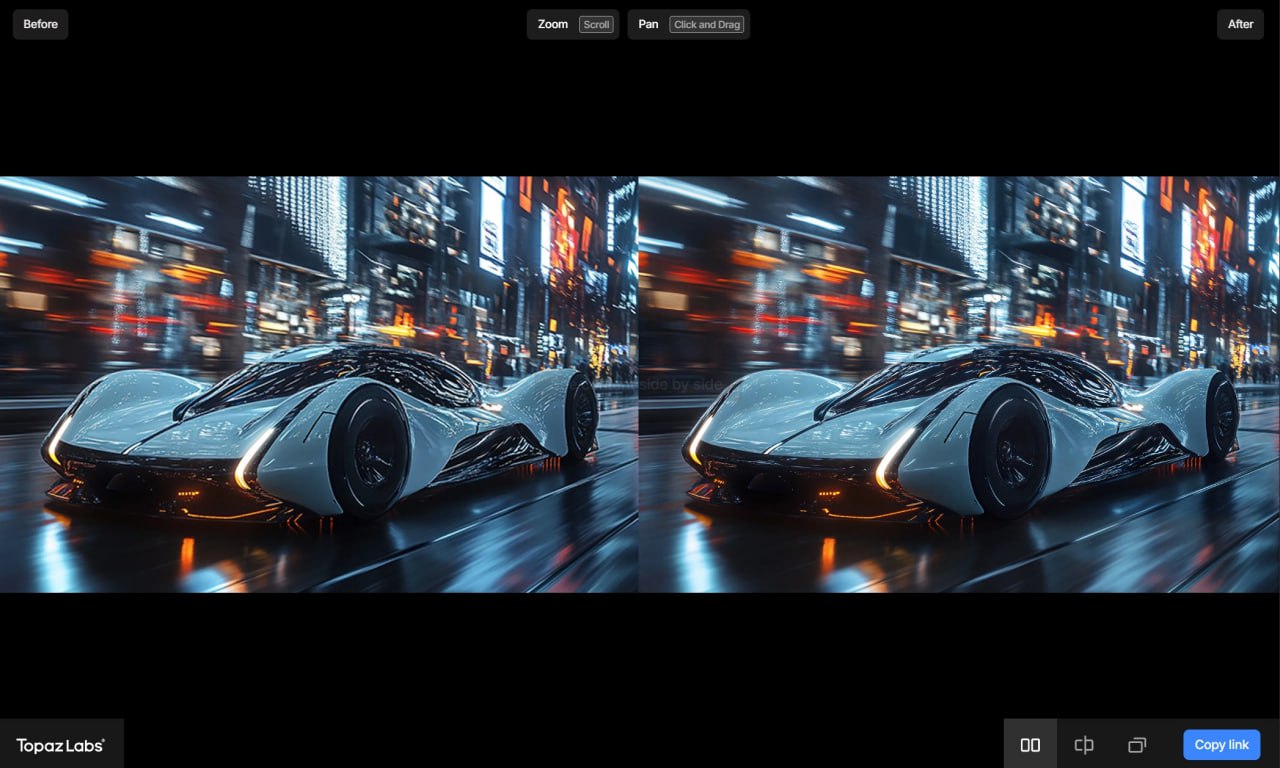

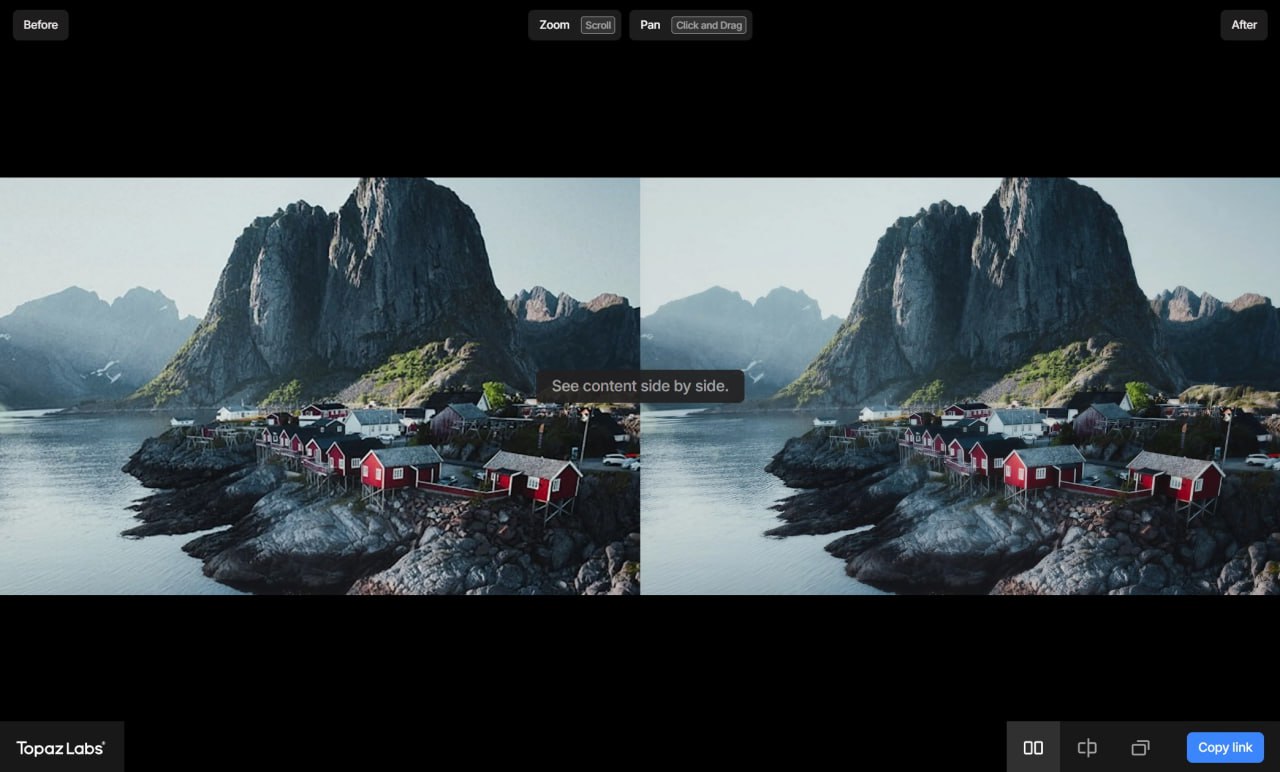

The first model to ship with full NeuroStream support is Wonder 2 (Local) — Topaz's latest flagship image enhancement model. Wonder 2 combines denoising, sharpening, and upscaling in a single pass while staying remarkably faithful to the original photo.

Unlike some generative tools that tend to "hallucinate" unwanted creative changes, Wonder 2 is tuned to preserve details, textures, and artistic intent — making it especially appealing to photographers who want clean, professional results without heavy post-processing artifacts.

Key Highlights from the Announcement (March 2026)

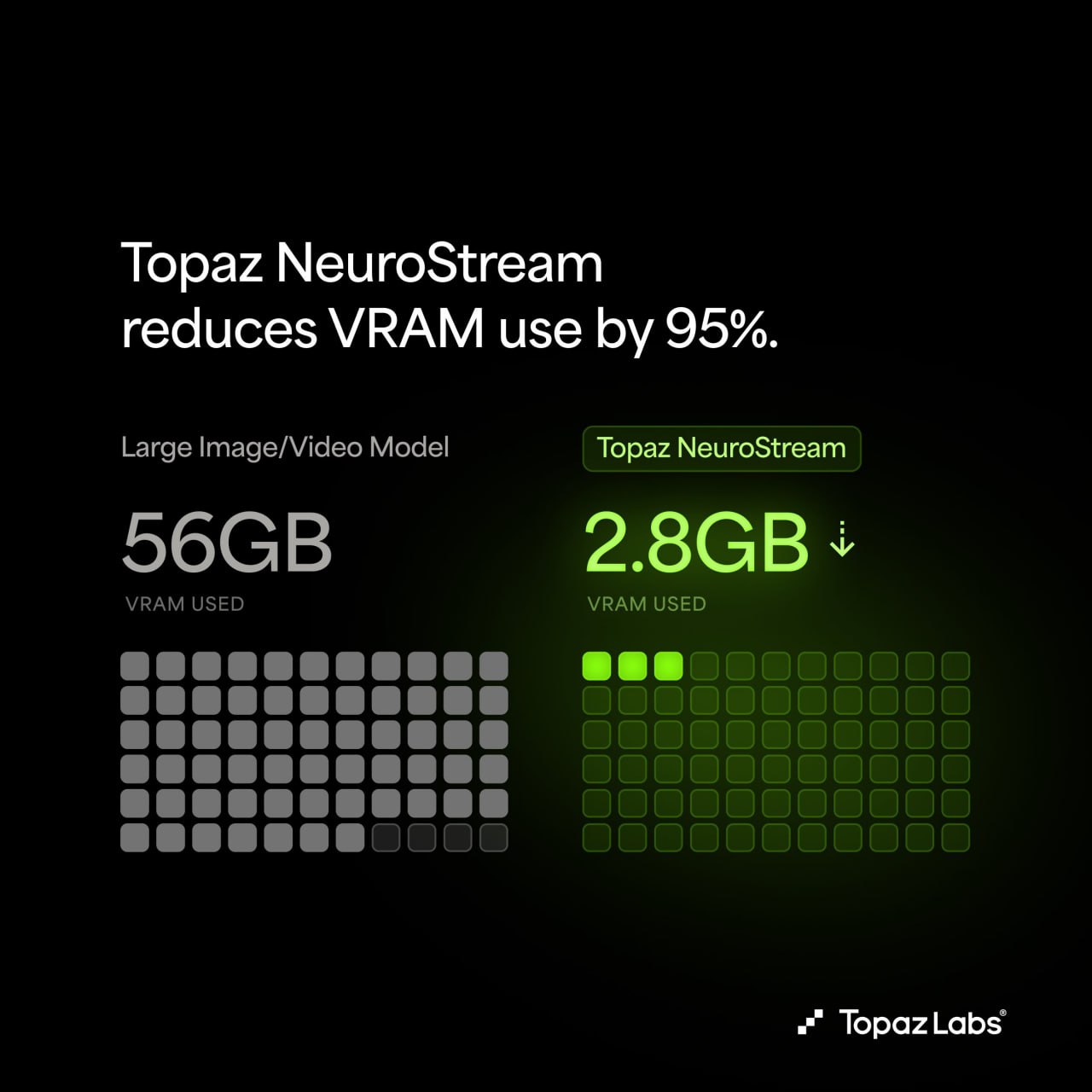

- VRAM reduction example: A large model that once required ~56 GB of VRAM now runs in ~2.8–3 GB, a reduction of roughly 95%.

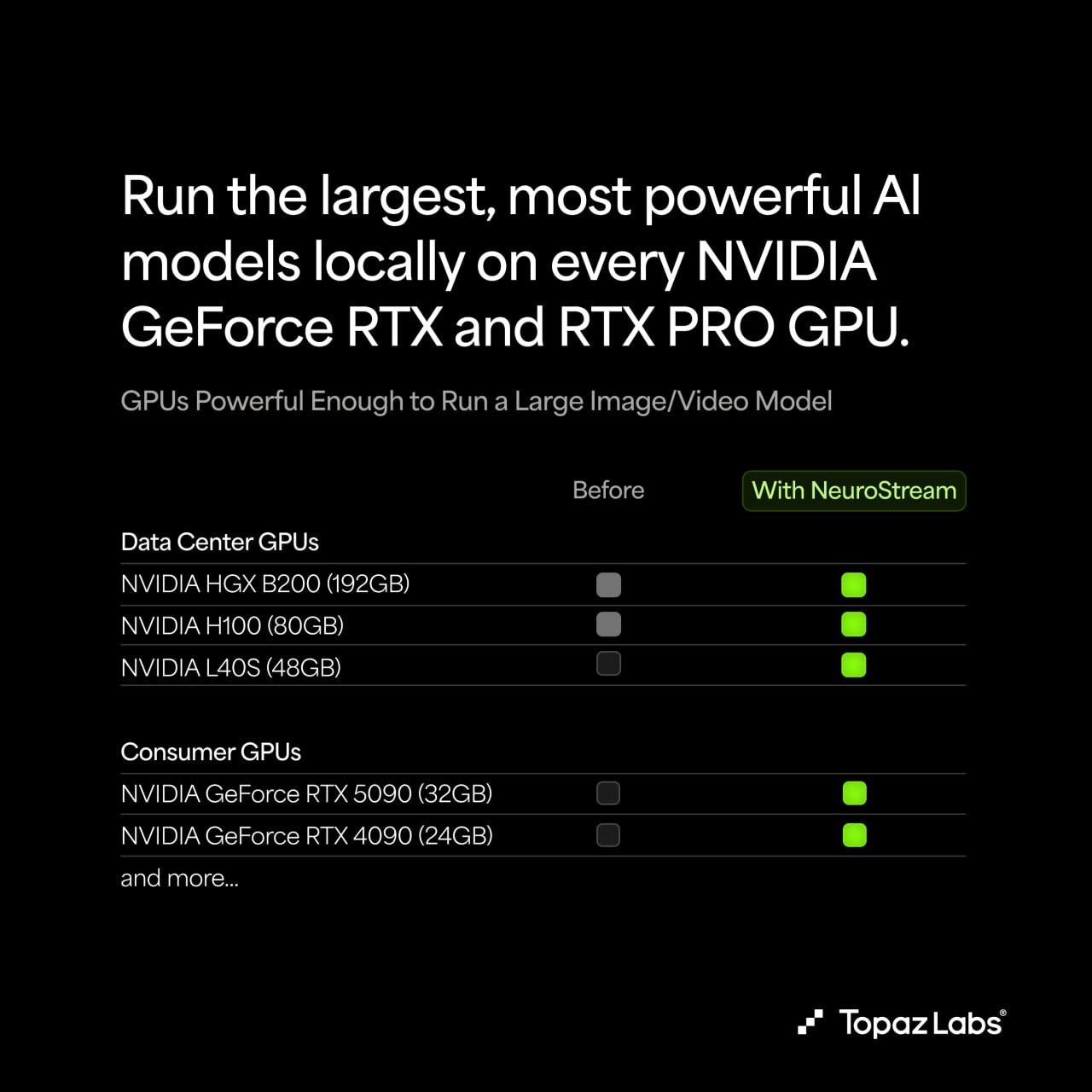

- Hardware compatibility: Works on nearly all NVIDIA RTX / RTX PRO GPUs (even mid-range cards with 6–8 GB VRAM). AMD GPUs need at least 8 GB dedicated VRAM for optimal performance; integrated graphics or CPU fallback is possible but slower and requires substantial system RAM (24 GB+ recommended).

- Apple M-series support: Topaz confirms that NeuroStream optimizations extend to Macs with M chips — a big win for creative professionals who live in the macOS ecosystem.

- Subscription gate: Full local access to Wonder 2 + NeuroStream requires an active Topaz subscription (no one-time purchase option for the newest local models at launch).
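As a quick sanity check on the headline number, the VRAM figures quoted in the highlights above can be turned into a percentage. This is only a minimal arithmetic sketch. The function name `vram_reduction_percent` is made up for illustration and has nothing to do with Topaz's software; the inputs are the approximate figures from the announcement.

```python
def vram_reduction_percent(before_gb: float, after_gb: float) -> float:
    """Return the share of VRAM saved, in percent, going from before_gb to after_gb."""
    if before_gb <= 0:
        raise ValueError("before_gb must be positive")
    return (before_gb - after_gb) / before_gb * 100.0


# Approximate figures quoted in the announcement: ~56 GB before, ~2.8-3 GB after.
best_case = vram_reduction_percent(56.0, 2.8)   # smallest quoted footprint
worst_case = vram_reduction_percent(56.0, 3.0)  # largest quoted footprint

print(f"{worst_case:.1f}% to {best_case:.1f}% less VRAM")
# prints "94.6% to 95.0% less VRAM"
```

So the "up to 95%" in the headline corresponds to the low end of the quoted 2.8–3 GB range.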

How It Compares to ComfyUI and Other Tools

Early user reports from forums and social media suggest the difference from ComfyUI and other local tools is noticeable:

- No more swapping to system RAM mid-process, which makes for a smoother experience.

- Significantly lower peak VRAM usage, even on 8 GB cards.

- Minimal quality degradation compared to the full cloud / high-VRAM version.

That said, because Wonder 2 Local is subscription-only, many enthusiasts are still waiting for community benchmarks and side-by-side comparisons before deciding whether to upgrade or renew.
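For anyone who wants to contribute to those community benchmarks, peak VRAM usage on an NVIDIA card can be logged with the stock `nvidia-smi` tool. The sketch below is one possible approach, not anything Topaz provides. It assumes `nvidia-smi` is on the PATH, and the helper names (`parse_used_mib`, `sample_used_mib`, `track_peak`) are hypothetical. It also measures total memory in use on each GPU, so other running applications count toward the number.

```python
import subprocess
import time

# nvidia-smi query that prints used memory in MiB, one line per GPU.
QUERY = ["nvidia-smi", "--query-gpu=memory.used", "--format=csv,noheader,nounits"]


def parse_used_mib(output: str) -> list[int]:
    """Parse the query output: one integer (MiB) per non-empty line.

    Raises ValueError if a GPU reports a non-numeric value such as "[N/A]".
    """
    return [int(line.strip()) for line in output.splitlines() if line.strip()]


def sample_used_mib() -> list[int]:
    """Take a single reading of used memory for every visible GPU."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_used_mib(result.stdout)


def track_peak(duration_s: float = 60.0, interval_s: float = 0.5) -> list[int]:
    """Poll for duration_s seconds and return the highest reading per GPU."""
    peaks = sample_used_mib()
    deadline = time.monotonic() + duration_s
    while time.monotonic() < deadline:
        time.sleep(interval_s)
        peaks = [max(p, u) for p, u in zip(peaks, sample_used_mib())]
    return peaks
```

Starting `track_peak(duration_s=120)` in a separate terminal just before kicking off an export, then comparing the result across tools on the same image, gives a rough side-by-side figure. Polling can miss very short spikes, so a smaller `interval_s` gives a tighter estimate.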

Bottom Line

For everyone else: it's another strong signal that 2026 is the year local AI imaging finally becomes practical on hardware most people actually own.

Have you tried the new local Wonder 2 model yet? If you're a subscriber, how does the NeuroStream-optimized version feel compared to previous Topaz releases or cloud alternatives? Drop your impressions below.