The Scariest Fact About Today’s AI Models (Even Top Scientists Admit It)

Here’s something that still blows the minds of people who actually build these systems:

Nobody fully understands how these models actually work. Ask any frontier lab scientist (or just ask GPT-4o or Claude 3.5; they’ll confirm it). We know the training data, the architecture, the loss function… but once the model is trained, the internal mechanisms that produce its intelligence remain largely a black box.

And the craziest part? The most impressive abilities of modern AI didn’t come from deliberate engineering. They emerged — completely unexpectedly — simply because we kept making the models bigger and trained them on more data.

No one sat down and said “let’s teach the model to do math.”

No one wrote special code to make it reason step-by-step.

No one explicitly trained it to understand sarcasm or plan like an agent.

It just… started happening.

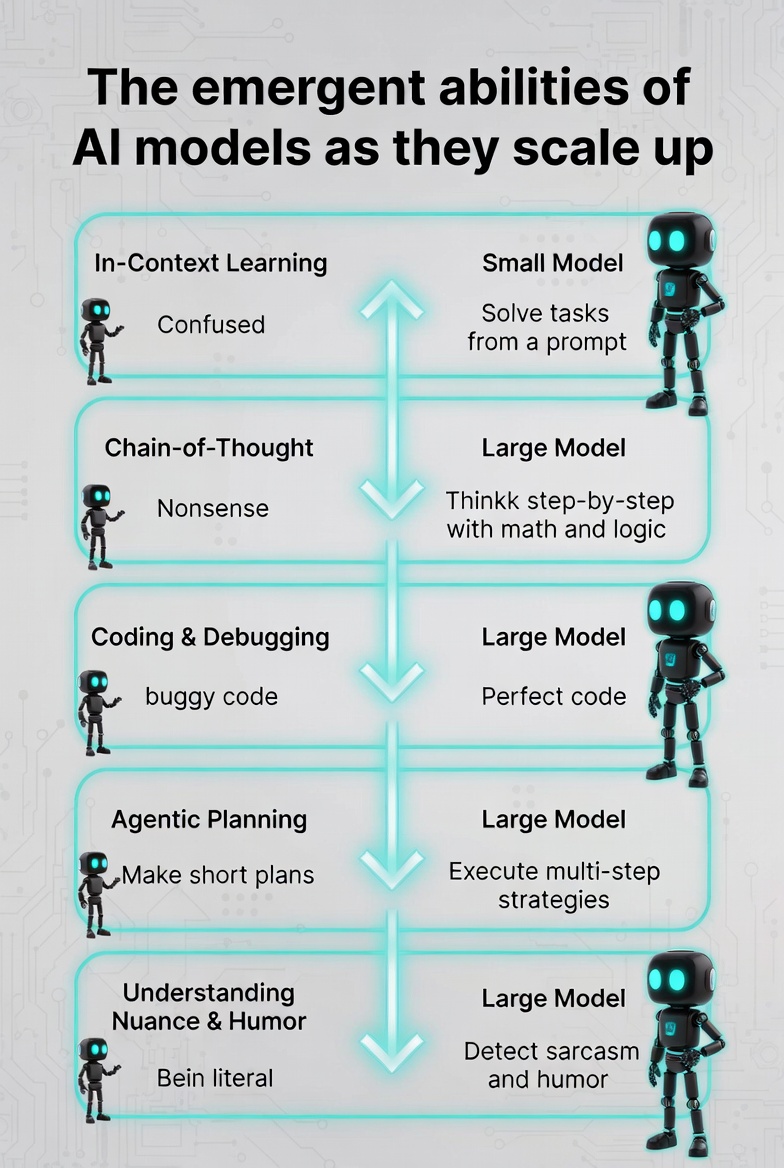

Five Abilities That Appeared “By Accident”

Here are the most striking examples of emergent abilities — capabilities that suddenly showed up as models scaled up:

1. In-Context Learning (2020)

Small models could barely do anything new without fine-tuning.

Then GPT-3 arrived. Suddenly it could do simple arithmetic, translate languages, answer complex questions, and even pick up new tasks, all from a description or a few examples in the prompt. No extra training required. It learned “on the fly.” No one saw that coming.
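
To make “learning from the prompt” concrete, here is a minimal sketch of how a few-shot prompt is usually put together. The function name `build_few_shot_prompt` and the translation task are illustrative assumptions, not code from any lab. Nothing here calls a model; it only builds the text you would send to one.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a few-shot prompt: task description, worked examples, new input.

    All names here are hypothetical; no model is called.
    """
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The last block is left open so the model fills in the answer.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("cheese", "fromage"), ("good morning", "bonjour")],
    "thank you",
)
print(prompt)
```

The model’s weights never change. Everything it “learns” about the task comes from those few lines of text.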

2. Chain-of-Thought Reasoning (2022)

Tiny models either ignored step-by-step instructions or produced total nonsense.

Bigger models (PaLM, then GPT-4 class) got dramatically better at math, logic, and puzzles when researchers simply added “Let’s think step by step” to the prompt. On some benchmarks, accuracy jumped severalfold. Pure emergence.
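
Here is a small sketch of what that trick looks like in practice. The helper names `direct_prompt` and `step_by_step_prompt` and the sample question are made up for illustration, and the `Q:`/`A:` layout is just one common convention. The only difference between the two prompts is the trigger phrase at the start of the answer.

```python
# The trigger phrase quoted in the text above.
COT_TRIGGER = "Let's think step by step."


def direct_prompt(question):
    """Plain prompt: the model is expected to answer right away."""
    return f"Q: {question}\nA:"


def step_by_step_prompt(question):
    """Same question, but the answer is seeded with the trigger phrase."""
    return f"Q: {question}\nA: {COT_TRIGGER}"


question = "A shop has 23 apples, sells 9, then receives 12 more. How many apples does it have?"
print(direct_prompt(question))
print()
print(step_by_step_prompt(question))
```

With a large enough model, the second prompt tends to produce the intermediate steps before the final number, which is where the accuracy gain comes from.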

3. Writing & Debugging Real Code (2023)

Early models spat out short, buggy snippets that barely ran.

GPT-4 started writing substantial working code, spotting bugs, suggesting fixes, and even explaining its own logic like a senior engineer. What used to require hours of human debugging can now often happen in seconds.

4. Multi-Step Planning & Agentic Behavior (2024–2025)

Small models couldn’t keep a plan in their “head” for more than a couple of steps.

Then came OpenAI’s o1 series and Claude 3/4. These models began creating detailed, long-horizon strategies, adjusting plans when things went wrong, and acting like true autonomous agents. The jump was so sharp it felt like the model woke up.

5. Understanding Humor, Nuance & Subtext (2023–2024)

Small models were painfully literal. Sarcasm, irony, cultural references, and complex instructions flew right over their heads.

GPT-4 and Claude 3 suddenly started catching tone, jokes, double meanings, and subtle instructions almost at human level. They went from robotic to… weirdly human.

Why This Matters

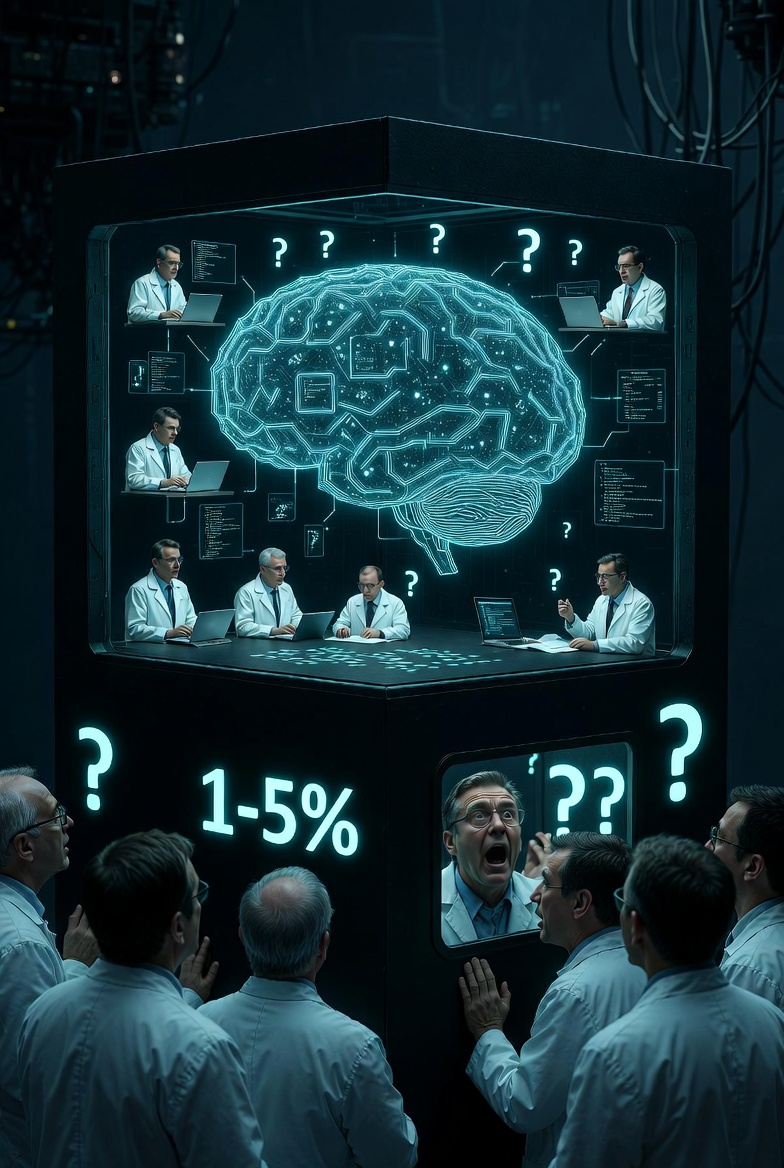

This is what makes current AI so unsettling (and exciting). We are not building intelligence piece by piece. We are growing something whose internal workings we barely understand — and every time we make it bigger, it reveals abilities we didn’t even know to ask for.

We are living in the middle of the biggest uncontrolled experiment in intelligence humanity has ever run.

And we still understand only a small fraction of what’s going on inside the box.

The next leap isn’t going to be something we carefully design.

It’s going to be something the model just… decides to do one day when it gets big enough.

Buckle up. The black box is getting smarter faster than we can open it.