Meta’s Muse Spark: A Respectable Step Up That Finally Puts Them Back in the Game

On April 8, 2026, Meta Superintelligence Labs quietly dropped Muse Spark — the first model in their new “Muse” family. It’s not the flashy, headline-grabbing monster that instantly claims the #1 spot on every leaderboard. But here’s the thing: it doesn’t have to be.

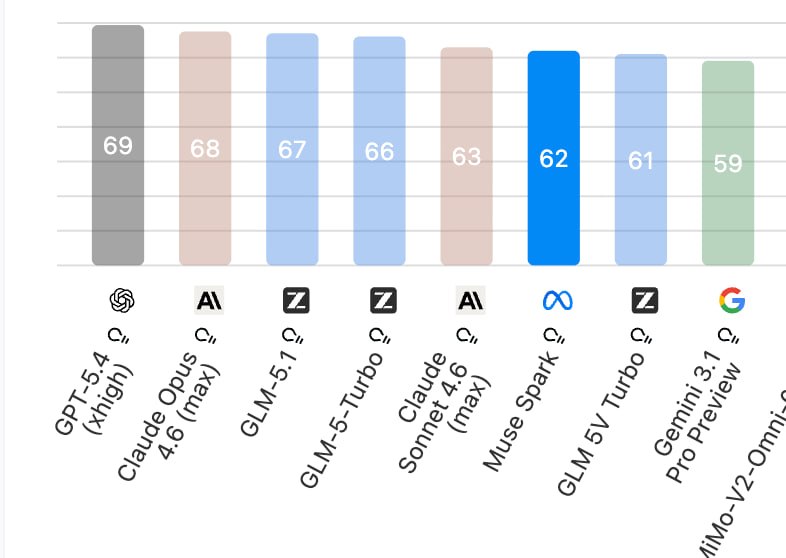

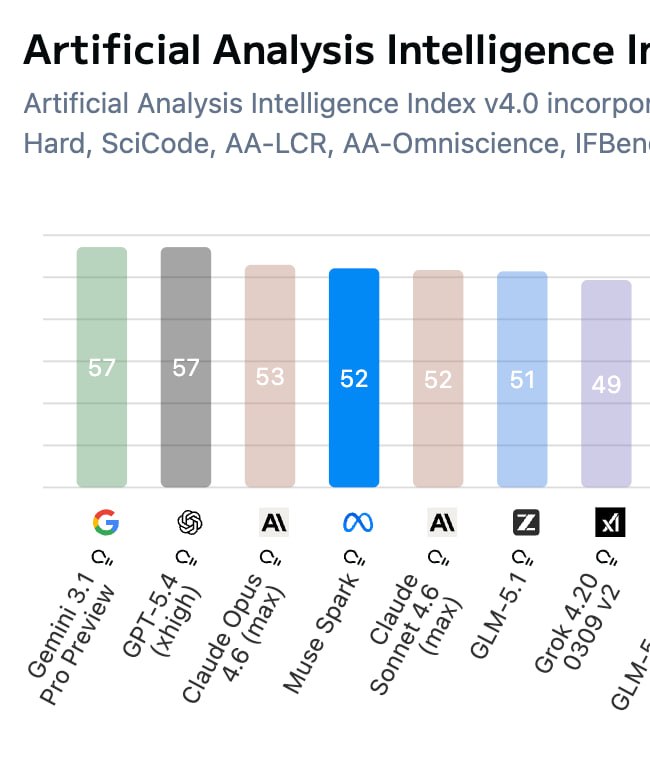

For the first time in a while, Meta isn’t at the bottom of the frontier-model pile. Muse Spark lands them comfortably in roughly fourth place among the big players — behind the absolute top tier (OpenAI, Anthropic, Google), but clearly ahead of Grok and the leading Chinese models. That’s real, measurable progress from where Llama used to sit.

What Actually Makes Muse Spark Interesting

Meta didn’t just throw more compute at the problem. Three design choices stand out.

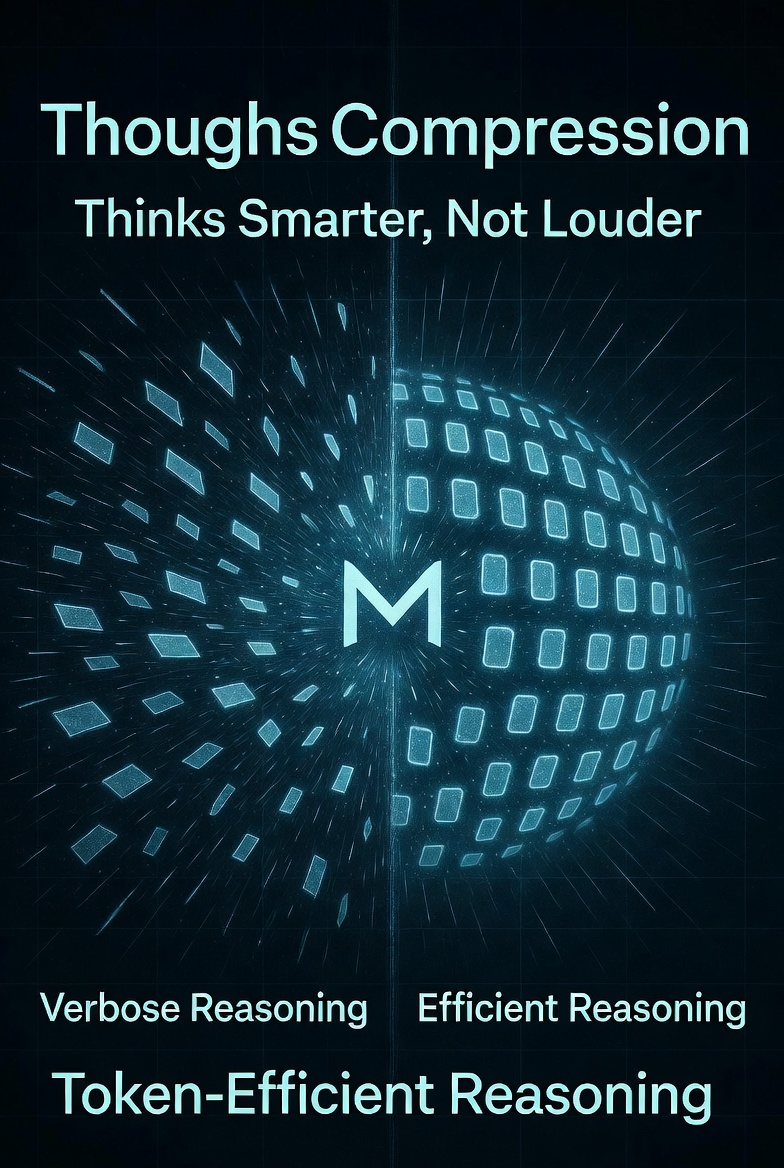

1. Thought Compression (aka “compression of reasoning”)

This is the standout technical trick. During reinforcement learning, Meta introduced **thinking-time penalties** that force the model to compress its internal reasoning chain. After an initial phase of long, verbose thinking, the model hits a “phase transition” and starts delivering the same (or better) answers with dramatically fewer tokens.

In plain English: Muse Spark is more token-efficient than almost anything else out there right now. It thinks smarter, not louder.
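
To make the idea of a thinking-time penalty concrete, here is a minimal sketch of a length-penalized reward. Meta has not published its formula in this write-up, so the function name, the token budget, and the penalty rate below are all illustrative assumptions, not the actual training setup.

```python
def penalized_reward(
    correct: bool,
    thinking_tokens: int,
    budget: int = 1000,                 # hypothetical free thinking budget
    penalty_per_token: float = 0.0005,  # hypothetical penalty rate
) -> float:
    """Task reward minus a linear penalty on thinking tokens over the budget.

    Illustrative only: the real penalty Meta used is not described in the source.
    """
    task_reward = 1.0 if correct else 0.0
    overage = max(0, thinking_tokens - budget)
    return task_reward - penalty_per_token * overage


# A correct answer reached with short reasoning scores higher than the
# same correct answer reached with a long reasoning chain.
short = penalized_reward(correct=True, thinking_tokens=800)
long = penalized_reward(correct=True, thinking_tokens=2000)
print(short > long)
```

Under a reward shaped like this, a policy that keeps its accuracy while trimming its reasoning is paid more, which is the pressure that would push a model toward shorter chains.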

2. Multi-Agent “Contemplating Mode”

Instead of making one agent think longer (the usual test-time scaling trick), Muse Spark spins up multiple agents that collaborate in parallel. The result? Better performance at roughly the same latency. It’s a neat architectural win.
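
The general pattern of running several agents side by side can be sketched as follows. This is an assumption-laden toy, not Meta's architecture: the `contemplate` function and the stand-in agents are hypothetical, and a simple majority vote replaces whatever collaboration mechanism Muse Spark actually uses.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence

# An "agent" here is any function that maps a question to an answer string.
Agent = Callable[[str], str]


def contemplate(question: str, agents: Sequence[Agent]) -> str:
    """Run all agents concurrently and return the most common answer.

    Wall-clock time is roughly that of the slowest agent, not the sum,
    which is why parallel agents need not add much latency.
    """
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(question), agents))
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


# Hypothetical stand-in agents; real ones would call a model.
toy_agents = [lambda q: "4", lambda q: "4", lambda q: "5"]
print(contemplate("What is 2 + 2?", toy_agents))
```

Voting is the simplest way to combine parallel outputs. A system where agents actually exchange intermediate results, as the word "collaborate" suggests, would need a richer protocol than this sketch shows.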

3. Heavy Medical-Grade Tuning

Meta worked with over 1,000 physicians to curate training data specifically for health and wellness queries. The model now gives noticeably more factual, comprehensive, and personalized answers on nutrition, exercise form, muscle activation, and similar topics. It’s not just “don’t sue us” safety — it’s actual domain expertise baked in.

The model is also natively multimodal (vision + reasoning), supports tool use and visual chain-of-thought, and can handle long-horizon agentic tasks such as coding workflows or interactive troubleshooting.

Benchmarks: Competitive, Not Dominant

- 58% on Humanity’s Last Exam

- 38% on FrontierScience Research

That puts it in the same conversation as Gemini Deep Think and GPT Pro — respectable frontier-level performance. It’s not miles ahead of anyone, but it’s no longer the model you skip when comparing the leaders.

And yes — it comfortably clears Grok and the current Chinese frontier offerings.

Built for Meta’s Products First

It’s already live on:

- meta.ai

- the Meta AI app

A private API preview is open to select partners, and the fancy “Contemplating Mode” is rolling out gradually. This is very much a Meta-first model designed to power personal superintelligence experiences inside WhatsApp, Instagram, Facebook, and the broader Meta universe.

Bottom Line

Muse Spark isn’t going to make anyone say “Meta just won the AI race.” But it absolutely makes people say “Meta is back in the race.”

After a couple of years where their frontier efforts felt a bit lost, this release shows they’ve figured out how to be efficient, practical, and domain-smart at the same time. The token-compression trick, the physician-validated health capabilities, and the multi-agent approach are all genuinely interesting engineering choices.

For everyone watching the leaderboard, the message is clear: Meta is no longer the laggard. They’re now a legitimate fourth-place contender — and with the rest of the Muse family already in the pipeline, the gap to the top three is shrinking faster than most expected.

You can try Muse Spark right now at meta.ai. It’s worth a spin, especially if you want to see what a more token-efficient, medically tuned frontier model actually feels like in real use.