‘Made by Human’ — The New Trend in Content Labeling, and Why It’s Raising More Questions Than Answers

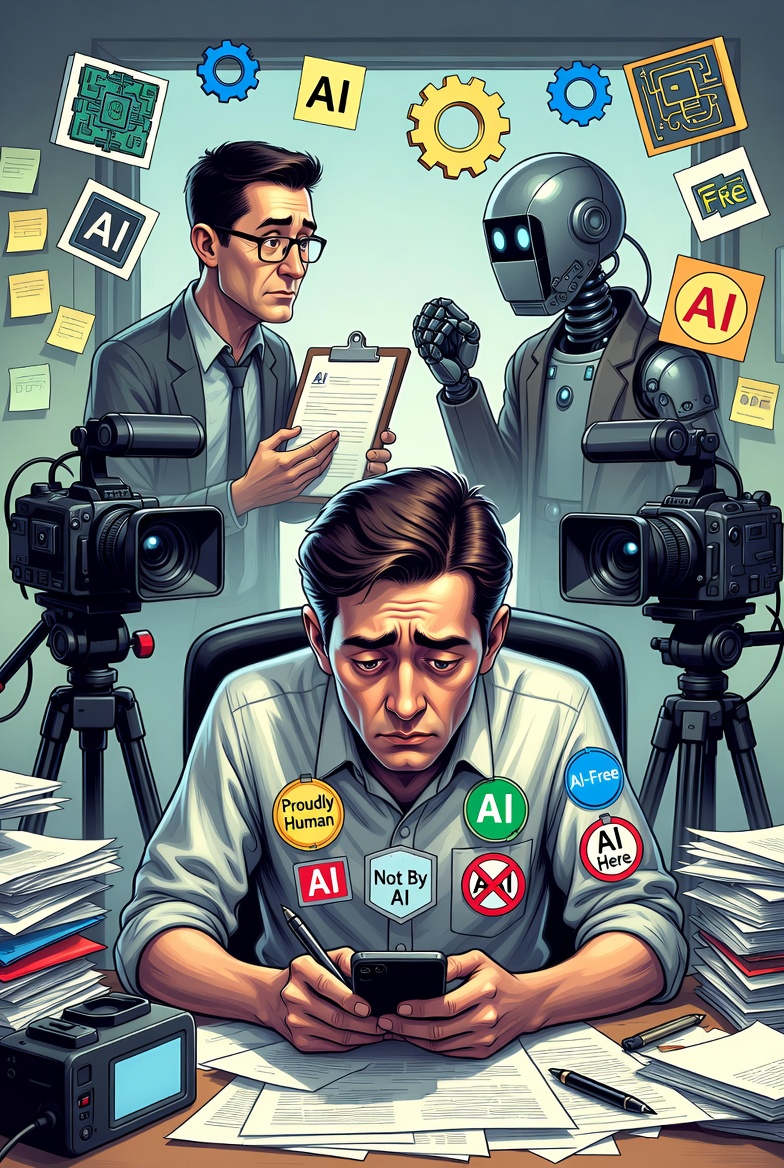

The “dead internet” theory has never felt more prescient. Just a few years ago, major platforms and companies were debating how to clearly label AI-generated content to protect consumers from synthetic slop. Now the script has flipped: in 2026, it’s often easier — and more commercially valuable — to certify that something was *not* made by AI. A growing number of organizations, unions, activists, and businesses are racing to create “human-made,” “AI-free,” or “Proudly Human” badges and certifications for creative work.

What was supposed to be a simple shield for human creativity has quickly become a fragmented, confusing, and sometimes absurd system: part premium marketing signal, part bureaucratic nightmare.

From Labeling AI to Proving You’re Human

The shift happened fast. As generative AI tools flooded the internet with convincing text, images, music, and video, platforms largely failed to enforce consistent AI disclosure. Instagram head Adam Mosseri even suggested it might soon be “more practical to fingerprint real media than fake media.”

At least a dozen competing initiatives have emerged, including:

- Proudly Human — offers verification and legal action against fraudulent use across text, art, video, and music.

- Not by AI — provides simple badges for websites, books, podcasts, and films if creators self-certify that at least 90% of the work is human-created.

- Authors Guild’s “Human Authored” certification — targeted at books and written works.

- Proof I Did It — uses blockchain to create permanent, unforgeable certificates.

- Made by Human, No-AI-Icon, VerifiedHuman, and others — ranging from honor-system badges to manual audits.

The Price of “Premium” Authenticity

Some creators welcome the extra step, seeing it as a way to stand out in an AI-saturated market. Others call it exhausting and invasive.

The bigger problem? Inconsistency. Some certification programs hand out badges with almost no verification: just a click and a promise. Others subject applicants to what feels like “several circles of hell,” scrutinizing every tool used and debating edge cases: Does using Grammarly count as AI? What about chatting with an LLM for brainstorming? Where exactly does “human-made” end and “AI-assisted” begin?

A Fragmented Wild West

Blockchain solutions and human auditors offer more credibility but come with higher costs and slower processes. Meanwhile, the C2PA content credentials standard, backed by major tech companies including Meta, Adobe, and Microsoft, was designed to authenticate media but has struggled with adoption, partly because many creators and platforms still have incentives to hide AI use.

What Comes Next?

The “Made by Human” movement reflects a deeper cultural backlash: people are craving authenticity in an era where everything can be faked. Labels are already appearing on books, films, marketing campaigns, and even physical products. Some brands are turning “AI-free” into a selling point, much like “organic” or “Fair Trade” certifications.

Yet without coordination among creators, platforms, regulators, and industry groups, the trend risks becoming just another layer of noise. The very system meant to separate human creativity from synthetic content is itself turning into a new kind of digital Wild West.

In the end, the biggest question isn’t whether we can prove something was made by a human — it’s whether consumers will still care enough to look for the badge once the next wave of hyper-realistic AI arrives. For now, the race is on, and the rules are still being written.