Anthropic Unveils Claude Code Security: The AI Auditor Transforming Code Safety

In a significant leap for cybersecurity, Anthropic announced Claude Code Security on February 20, 2026 — a groundbreaking AI tool integrated into Claude Code that acts as an automated security auditor for codebases.

This innovation marks a shift from AI merely generating code to deeply analyzing it like a seasoned security researcher, identifying logical vulnerabilities, explaining issues, and proposing fixes without automatic implementation. As software development accelerates alongside cyber threats, this tool promises to democratize high-level security reviews, making them accessible and scalable for developers worldwide.

Beyond Bug Hunting: How Claude Code Security Works

Powered by the Claude Opus 4.6 model, Claude Code Security traces data flows, examines component interactions, and reasons about code holistically, mimicking a manual security audit.

For instance, it can spot business logic flaws or broken access controls that might allow unauthorized actions.
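To make "broken access control" concrete, here is a minimal, hypothetical sketch of the kind of logic flaw described above. The `Invoice` type, the in-memory `INVOICES` store, and both function names are illustrative assumptions; they do not come from Anthropic's announcement or from any real codebase the tool has reviewed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a database table.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=100, amount_cents=5_000),
    2: Invoice(invoice_id=2, owner_id=200, amount_cents=12_500),
}


def get_invoice_insecure(current_user_id: int, invoice_id: int) -> Invoice:
    """Flawed: any authenticated user can read any invoice by guessing its ID."""
    # current_user_id is accepted but never compared with the invoice owner.
    return INVOICES[invoice_id]


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    """Fixed: verify ownership before returning the record."""
    invoice = INVOICES[invoice_id]
    if invoice.owner_id != current_user_id:
        raise PermissionError("user does not own this invoice")
    return invoice
```

Nothing in the insecure version looks syntactically wrong, which is why pattern-based checks tend to miss it. Spotting the flaw requires noticing that the caller's identity never reaches the authorization decision.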

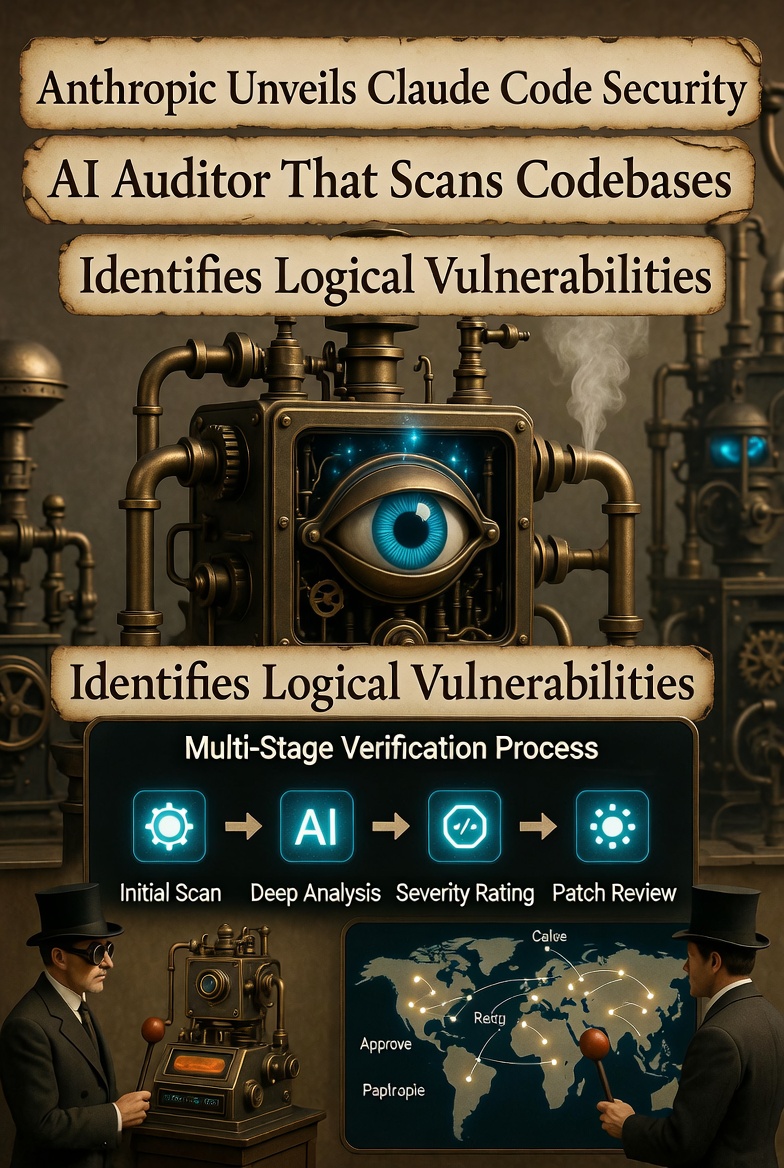

Key features include:

- Multi-stage Verification: Findings undergo rigorous self-examination to validate or debunk issues, minimizing false positives.

- Severity and Confidence Ratings: Each vulnerability is prioritized with a severity score and confidence level, helping teams focus on critical risks.

- Dashboard for Review: Users access a dedicated interface to inspect explanations, suggested patches, and approve changes—ensuring human oversight remains central.

- Patch Suggestions: The AI proposes targeted code fixes, but nothing is committed without developer confirmation, balancing automation with control.
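As a rough illustration of how severity and confidence ratings could drive prioritization, the sketch below filters and orders a list of findings. The `Finding` structure, the four-level `Severity` scale, the 0.0 to 1.0 confidence range, and the `triage` function are all assumptions made for this example; the announcement does not describe the tool's actual data format or scoring scale.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical scale; the real tool's levels are not specified in the article.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    title: str
    severity: Severity
    confidence: float  # assumed range: 0.0 (unsure) to 1.0 (certain)


def triage(findings, min_confidence=0.5):
    """Drop low-confidence findings, then sort by severity, breaking ties by confidence."""
    kept = [f for f in findings if f.confidence >= min_confidence]
    return sorted(kept, key=lambda f: (f.severity, f.confidence), reverse=True)


if __name__ == "__main__":
    sample = [
        Finding("Missing ownership check on invoice lookup", Severity.HIGH, 0.9),
        Finding("Possible race in session refresh", Severity.CRITICAL, 0.3),
        Finding("Admin route lacks role check", Severity.CRITICAL, 0.8),
    ]
    for finding in triage(sample):
        print(finding.severity.name, finding.confidence, finding.title)
```

A team would still review every surfaced item by hand. The ordering only decides what gets looked at first, in keeping with the human-approval step described above.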

Anthropic demonstrates this process in a short announcement video, which highlights the tool's ability to reason like a human expert and its role in catching complex issues that rule-based systems overlook.

Standing Apart from Traditional Tools

Where rule-based scanners match code against predefined patterns, Claude performs what Anthropic describes as a "scalable manual review," adapting to unique project architectures without rigid templates.

This distinction addresses a core industry pain point: the overwhelming volume of potential vulnerabilities versus limited expert resources. As AI-driven attacks grow more sophisticated, tools like this shift the balance toward defenders by enabling proactive, intelligent scanning.

Proven in the Real World: Impressive Test Results

Anthropic's internal testing underscores the tool's efficacy. Leveraging over a year of research, including participation in competitive Capture-the-Flag events and collaborations with organizations like the Pacific Northwest National Laboratory, Claude Opus 4.6 identified over 500 real vulnerabilities in production open-source projects. Remarkably, many of these bugs had persisted undetected for years — or even decades — despite prior expert scrutiny.

These findings weren't hypothetical; they stemmed from analyzing live codebases, demonstrating the AI's ability to uncover long-standing weaknesses. Internally, Anthropic has used the tool to bolster its own systems, further validating its practical value in securing critical infrastructure.

The Broader Impact: Redefining DevOps and Security Norms

The release of Claude Code Security signals a paradigm shift in software development. What was once an expensive, expertise-intensive process, hiring specialized security researchers for audits, can now be integrated into everyday workflows. With development speeds increasing and cyber threats evolving rapidly (often amplified by AI itself), AI-powered security becomes an essential layer. Anthropic envisions a future where AI scans a substantial portion of global codebases, elevating the overall security baseline and reducing exploitation risks.

In practical terms, this means DevOps pipelines without AI checks could soon seem as outdated as running servers without HTTPS encryption. By empowering open-source maintainers and enterprise teams alike, the tool fosters a more resilient digital ecosystem, where vulnerabilities are patched swiftly before attackers can exploit them.

Availability and Next Steps

Currently available in a limited research preview for Claude's Enterprise and Team customers, Claude Code Security is accessible via the web interface. Open-source repository owners can apply for free, expedited access through Anthropic's contact form, reflecting the company's commitment to supporting the broader developer community.

For those interested, more details are available on Anthropic's solutions page, and the announcement video provides a quick overview of its capabilities. As Anthropic continues to refine this technology, it stands as a testament to AI's potential not just as a creator, but as a vigilant guardian of code integrity.

This launch is an exciting milestone, positioning Anthropic at the forefront of AI-driven cybersecurity and paving the way for safer, more efficient software development.