Thirteen Bullets and One Molotov Cocktail: How Anti-AI Protest Just Got Deadly Serious

In the early hours of April 10, 2026, someone threw a Molotov cocktail at the San Francisco home of OpenAI CEO Sam Altman. The incendiary device hit the exterior gate and ignited a small fire before the attacker fled. A 20-year-old man was arrested later that day after also threatening to burn down OpenAI’s headquarters. No one was injured, but the message was unmistakable.

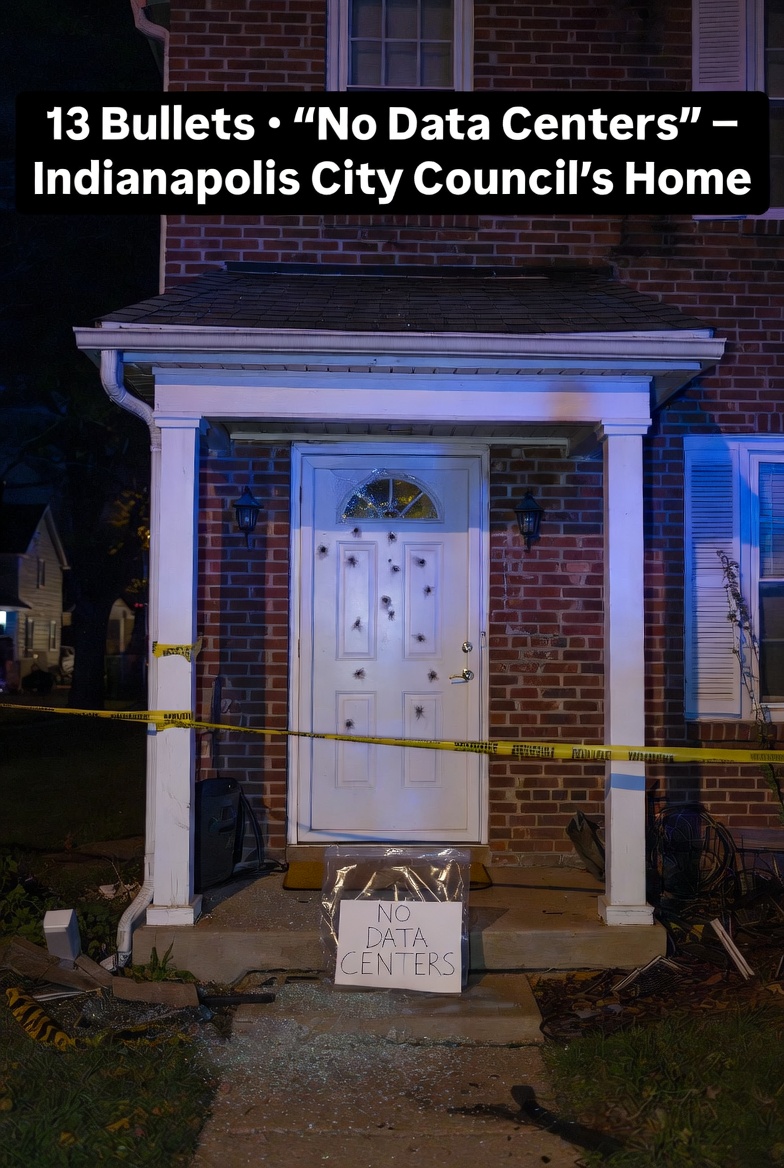

This wasn’t an isolated act of rage. Just days earlier, on April 6, someone fired 13 bullets into the front door of Indianapolis City-County Council member Ron Gibson’s home. Gibson had publicly supported a controversial data-center project in the Martindale-Brightwood neighborhood. A handwritten note left in a plastic bag on his doorstep read simply: “No Data Centers.”

Two violent incidents in one week. Both tied directly to AI infrastructure. And both signal that the backlash against artificial intelligence has moved from angry tweets and protest signs into something far darker.

Sam Altman’s Response: A Family Photo and a Plea for Civility

Altman addressed the attack directly in a raw, late-night blog post on his personal site.

He shared a photo of his family — a rare public glimpse into his private life — writing:

> “Here is a photo of my family. I love them more than anything… I am sharing a photo in the hopes that it might dissuade the next person from throwing a Molotov cocktail at our house.”

He acknowledged the intense anxiety surrounding AI, admitted past mistakes at OpenAI, and doubled down on his belief that the technology must be democratized rather than controlled by a handful of labs.

Most importantly, he called for de-escalation:

> “While we have that debate, we should de-escalate the rhetoric and tactics and try to have fewer explosions in fewer homes, figuratively and literally.”

It was a strikingly human response from one of the most powerful figures in tech — and a clear sign that even the leaders at the center of the AI boom now feel personally at risk.

Why AI Has Become a Lightning Rod for Violence

Politicization alone doesn’t create violence. But it creates a massive audience — including people for whom violence has always been an acceptable tool. When national leaders treat AI as an existential threat or a geopolitical weapon, it legitimizes extreme reactions for those already inclined toward them.

Add in real-world escalation and the picture gets uglier. In the past month, Iran has launched missile and drone strikes on AWS data centers in Bahrain and an Oracle facility in Dubai. Iranian officials have explicitly threatened the massive Stargate AI supercluster under construction in the UAE, a $500 billion+ project involving OpenAI, SoftBank, Oracle, and others. While the original attacks were part of a broader regional conflict, the targeting of AI infrastructure has shifted the Overton window: data centers are now seen as legitimate military targets.

This Is Just the Beginning

What we’re witnessing isn’t random vandalism.

It’s the convergence of three dangerous forces:

- Economic anxiety over job displacement and concentrated power.

- Geopolitical weaponization of AI infrastructure.

- Cultural radicalization where “stop AI” rhetoric meets people who already believe in direct action.

The incidents in San Francisco and Indianapolis are the domestic symptoms. The Iranian strikes are the international ones. Together they show that hostility toward AI now ranges from lone actors with gasoline bottles to state actors with ballistic missiles.

Researchers, engineers, and executives working on frontier AI systems — especially those whose names and addresses are public — should take the warning seriously. The era when threats stayed online is over.

Open your mail with care, gentlemen. And maybe double-check the security cameras tonight.

The AI revolution was always going to be disruptive. No one expected the backlash to become this literal, this fast. But here we are: thirteen bullets, one burning bottle, and a growing list of targets.

The discourse just got hot. And it’s only April.