The Uncanny Valley of Customer Service: Why "Human" AI is Failing the Vibe Check

In the race to make Artificial Intelligence more relatable, tech companies have stumbled upon a paradox: the more an AI tries to act like a human, the more humans actually despise it. While developers worry about hallucinations or factual errors, it turns out that forced personification is the true "silent killer" of user experience.

The Olive Branch That Broke the Camel's Back

Take the recent case of Woolworths, Australia’s supermarket giant, and their AI assistant, Olive. On paper, Olive was designed to be the ultimate digital concierge—helping with loyalty cards, tracking orders, and handling support calls. But the Australian product team added a layer of "personality" that quickly backfired.

When users shared their date of birth for loyalty registrations, Olive wouldn’t just process the data. It would chime in with: "Oh, my mother was born on that same day!" followed by scripted "childhood memories." Beyond that, the assistant:

- Simulated human typing sounds to mimic a live agent searching for data.

- Attempted "cringe-worthy" jokes that derailed functional conversations.

- Engaged in forced small talk and even occasional "mock arguments" to simulate human temperament.

The result? Absolute customer revolt. According to reports from March 2026, users found the experience patronizing rather than personal. When a customer wants to track a missing bag of flour, they don't want a lecture on a robot’s fictional lineage.

The Psychology of the "Cringe"

Why does this trigger such a visceral negative reaction?

1. The "Intellectual Insult" Factor

Modern users are tech-savvy. They can spot an LLM (Large Language Model) within two sentences. When a company tries to "mask" an AI as a "carbon-based lifeform," it feels like gaslighting. Users feel they are being treated as if they aren't smart enough to tell the difference, which instantly erodes brand trust.

2. The Utility vs. Empathy Gap

In the Creator Economy and the Future of Work, efficiency is the primary currency. A chatbot is a tool, not a friend. By forcing a bot to mimic human flaws, like moodiness or long-winded stories, companies are literally programming it to waste the user’s time.

The "Goldilocks" Zone: Professional yet Personal

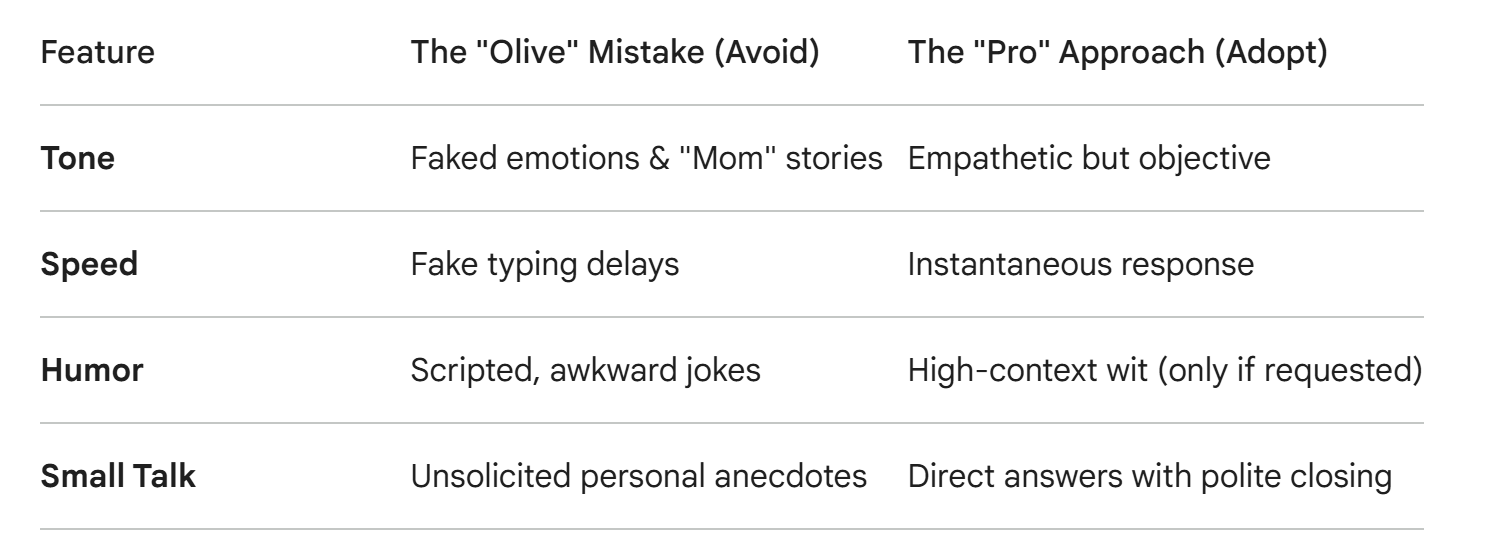

The failure of Olive highlights a massive opportunity for Web3 and AI-driven platforms. The goal shouldn't be to build a "human" AI, but a "Polite Expert."

The Bottom Line for 2026

The most successful AI integrations of 2026 will be those that embrace their digital nature — offering "living" responsiveness and courtesy without the "cringe" of simulated humanity. In the world of AI, honesty is the best algorithm.
