Talkie-1930: The Largest “Vintage” LLM Ever Built, Trained Exclusively on a World That Ended in 1930

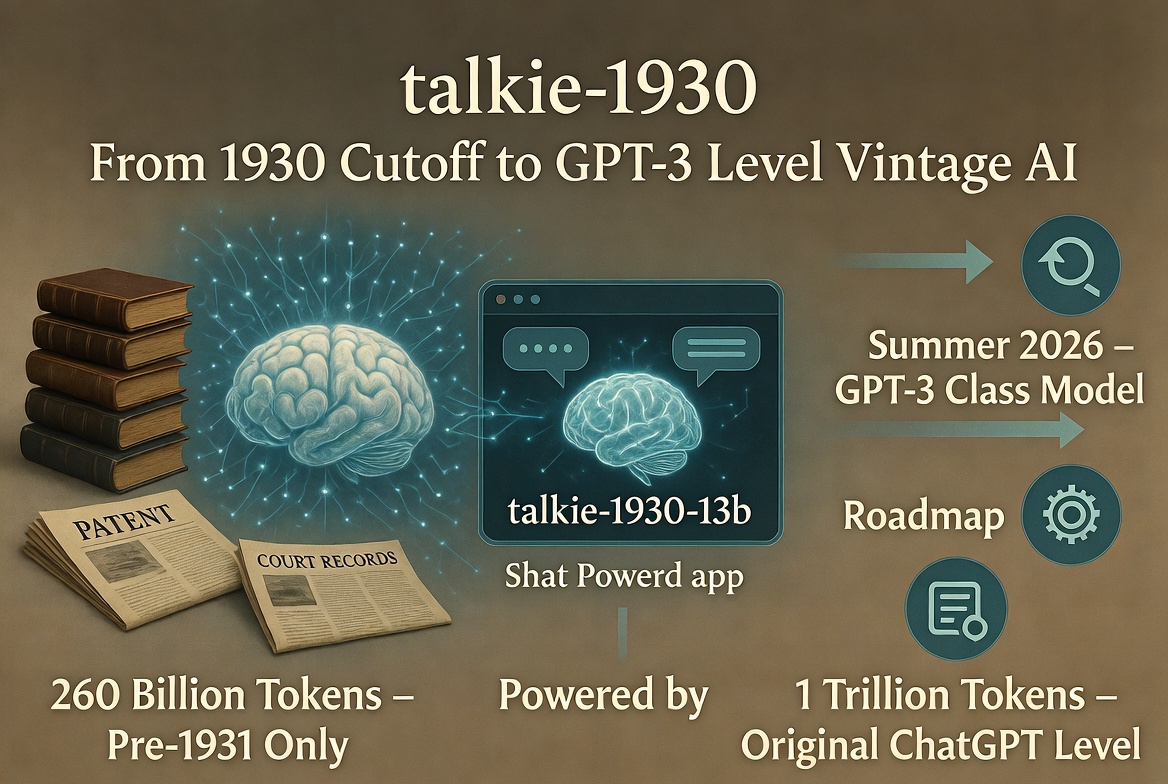

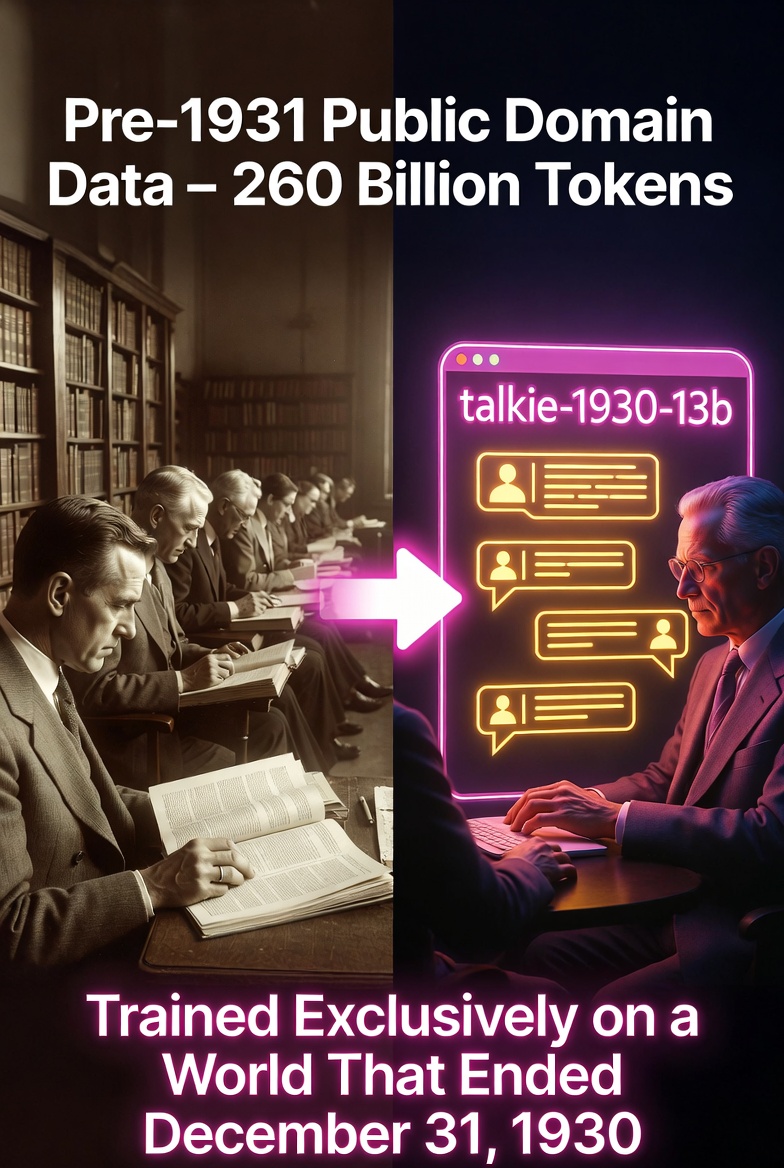

A team of researchers including Alec Radford (co-creator of GPT, CLIP, and Whisper), Nick Levine, and David Duvenaud has released talkie-1930-13b, a 13-billion-parameter language model trained from scratch on 260 billion tokens of English-language text published on or before December 31, 1930. The corpus spans books, newspapers, periodicals, scientific journals, patents, and court decisions, all strictly pre-1931 public-domain material. No internet. No Wikipedia. No World War II. No New Deal. No United Nations.

The project is deliberately provocative. Modern LLMs are trained on the messy, contaminated firehose of the contemporary web. Talkie is the opposite: a clean historical snapshot designed to let researchers study what large language models can actually reason about rather than simply regurgitate. As the team puts it, “Vintage LMs are contamination-free by construction, enabling unique generalization experiments.”

On standard benchmarks, the model performs as one would expect of a system whose knowledge stops in 1930. It underperforms its “modern twin,” an architecturally identical 13B model trained with the same compute budget on today’s FineWeb dataset, particularly on knowledge-heavy evaluations. The gap shrinks dramatically once questions that would be anachronistic to a 1930 reader are filtered out. On core language understanding and numeracy tasks, however, the vintage model stays surprisingly competitive.
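To make the filtering idea concrete, here is a minimal sketch of how a vintage-versus-modern accuracy gap could be recomputed on a "period-fair" subset. The article does not describe how the team actually filters questions, so the `earliest_year` annotation, the `Item` record, and every data point below are invented for illustration and are not reported results.

```python
from dataclasses import dataclass

CUTOFF_YEAR = 1930  # last year covered by the vintage corpus, per the article


@dataclass
class Item:
    question: str
    earliest_year: int      # hypothetical annotation: when the needed fact became knowable
    vintage_correct: bool   # invented toy outcome, not a reported result
    modern_correct: bool    # invented toy outcome, not a reported result


def accuracy(items, field):
    """Fraction of items where the given boolean field is True."""
    if not items:
        raise ValueError("no items to score")
    return sum(getattr(item, field) for item in items) / len(items)


def gap(items):
    """Modern-minus-vintage accuracy on the same items."""
    return accuracy(items, "modern_correct") - accuracy(items, "vintage_correct")


items = [
    Item("What is the capital of France?", 1800, True, True),
    Item("What is 17 + 25?", 1800, True, True),
    Item("What gas do plants absorb from the air?", 1800, True, True),
    Item("State the first law of thermodynamics.", 1850, False, True),
    Item("Which body replaced the League of Nations?", 1945, False, True),
    Item("What shape is the DNA molecule?", 1953, False, True),
]

# Keep only questions a 1930 reader could, in principle, have answered.
period_items = [item for item in items if item.earliest_year <= CUTOFF_YEAR]

print(f"gap on all items:       {gap(items):.2f}")
print(f"gap on period-fair set: {gap(period_items):.2f}")
```

On this toy data the gap falls from 0.50 to 0.25 once the two post-1930 questions are dropped. The remaining gap comes from a question the vintage model could have known but missed, which is the part of the comparison that says something about capability rather than about the calendar.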

Coding ability tells a fascinating story. On HumanEval, talkie lags far behind modern models — but it can still produce correct, simple Python solutions when given in-context examples. It does so not by memorizing code (there is none in its training data), but by reasoning from 19th-century mathematics and logic texts. Scale clearly helps: larger vintage models show steady improvement.
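For readers unfamiliar with in-context prompting, the sketch below shows one plausible way to assemble such a prompt: a few solved functions followed by an unsolved stub that the model is asked to complete. The helper name `build_few_shot_prompt` and the example functions are assumptions made for illustration; the article does not specify the prompt format the team used.

```python
def build_few_shot_prompt(examples, target_stub):
    """Concatenate solved examples, then the unsolved stub for the model to complete."""
    blocks = [f"{stub.rstrip()}\n{body.rstrip()}\n" for stub, body in examples]
    blocks.append(target_stub.rstrip() + "\n")
    return "\n".join(blocks)


# Each example pairs a signature-plus-docstring stub with its solution body.
examples = [
    ('def add(a, b):\n    """Return the sum of a and b."""', "    return a + b"),
    ('def is_even(n):\n    """Return True if n is even."""', "    return n % 2 == 0"),
]
target = 'def square(x):\n    """Return x multiplied by itself."""'

prompt = build_few_shot_prompt(examples, target)
print(prompt)
```

The point of the format is that the model never needs to have seen Python before: the solved examples in the prompt supply the syntax, and the model only has to continue the pattern for the final stub.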

The team also built a custom n-gram-based anachronism classifier to catch post-1930 contamination. Even so, early versions occasionally “knew” about the Roosevelt administration or World War II, revealing just how insidious temporal leakage can be.
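The article gives no implementation details for the classifier, so the following is only a rough sketch of what an n-gram-based anachronism scorer might look like: a smoothed log-odds score over word unigrams and bigrams, fit on a handful of made-up "pre" and "post" sentences. The class name `AnachronismScorer`, the smoothing constant, and the toy training sentences are all assumptions.

```python
import math
import re
from collections import Counter


def ngrams(text, n_max=2):
    """Lowercased word n-grams for n = 1..n_max."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    return [
        " ".join(tokens[i:i + n])
        for n in range(1, n_max + 1)
        for i in range(len(tokens) - n + 1)
    ]


class AnachronismScorer:
    """Toy log-odds scorer; a positive score suggests post-cutoff text."""

    def __init__(self, n_max=2, alpha=1.0):
        self.n_max = n_max
        self.alpha = alpha  # add-alpha smoothing (assumed value)
        self.pre = Counter()
        self.post = Counter()
        self.vocab = set()

    def fit(self, pre_docs, post_docs):
        for doc in pre_docs:
            self.pre.update(ngrams(doc, self.n_max))
        for doc in post_docs:
            self.post.update(ngrams(doc, self.n_max))
        self.vocab = set(self.pre) | set(self.post)
        return self

    def score(self, text):
        """Mean smoothed log-odds (post vs. pre) over n-grams seen in training."""
        grams = [g for g in ngrams(text, self.n_max) if g in self.vocab]
        if not grams:
            return 0.0  # nothing recognizable, so no evidence either way
        pre_total = sum(self.pre.values()) + self.alpha * len(self.vocab)
        post_total = sum(self.post.values()) + self.alpha * len(self.vocab)
        total = 0.0
        for g in grams:
            p_post = (self.post[g] + self.alpha) / post_total
            p_pre = (self.pre[g] + self.alpha) / pre_total
            total += math.log(p_post / p_pre)
        return total / len(grams)


# Made-up training sentences, for illustration only.
pre_docs = [
    "the telegraph office received a wireless message",
    "the airship crossed the atlantic by night",
]
post_docs = [
    "the television broadcast covered the second world war",
    "the united nations met after the war",
]
scorer = AnachronismScorer().fit(pre_docs, post_docs)

suspect = scorer.score("a television broadcast about the united nations")
period = scorer.score("the airship sent a wireless message")
print(f"suspect text: {suspect:+.2f}, period text: {period:+.2f}")
```

A scorer of this kind catches explicit vocabulary such as "television" or "United Nations", but it would miss later knowledge expressed in period-neutral words, which is consistent with the leakage the team still observed.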

Post-training was equally inventive. Because modern chat data was off-limits, the team constructed an entirely historical instruction dataset drawn from 1930-era etiquette manuals, letter-writing guides, encyclopedias, and poetry collections. They used Claude Sonnet 4.6 as a judge for online DPO and Claude Opus 4.6 to generate synthetic multi-turn conversations — an ironic but carefully flagged step they plan to eliminate in future versions.
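As a rough illustration of how a judge plugs into DPO-style training, the sketch below pairs a stand-in judge with the standard DPO loss on a single preference pair. It is not the team's training code: `toy_judge` is a trivial placeholder for an LLM judge, the log-probabilities are made-up numbers, and `beta=0.1` is an assumed hyperparameter.

```python
import math


def pick_pair(prompt, response_a, response_b, judge):
    """Ask the judge which response is better; return (chosen, rejected)."""
    if judge(prompt, response_a, response_b) == 0:
        return response_a, response_b
    return response_b, response_a


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one pair: -log(sigmoid(beta * margin))."""
    margin = ((policy_chosen_logp - ref_chosen_logp)
              - (policy_rejected_logp - ref_rejected_logp))
    x = beta * margin
    # Numerically stable form of log(1 + exp(-x)).
    if x > 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))


def toy_judge(prompt, response_a, response_b):
    """Placeholder judge: returns 0 to prefer A, 1 to prefer B.

    It simply rejects a response that mentions the internet.
    """
    return 1 if "internet" in response_a.lower() else 0


chosen, rejected = pick_pair(
    "Where did you learn the news?",
    "I read it on the internet.",
    "I read it in the evening paper.",
    toy_judge,
)

# Made-up sequence log-probabilities under the policy and a frozen reference.
loss = dpo_loss(policy_chosen_logp=-1.0, policy_rejected_logp=-3.0,
                ref_chosen_logp=-2.0, ref_rejected_logp=-2.0)
print(chosen)
print(f"loss = {loss:.3f}")
```

In an online setup, the two responses would be sampled from the current policy at each step and judged on the fly, so the preference data tracks the model as it improves. The loss drops below log 2 whenever the policy favors the chosen response more strongly than the reference model does.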

The roadmap is ambitious. The team aims to release a GPT-3-class vintage model by summer 2026. If they can grow the pre-1931 corpus beyond one trillion tokens, they believe they can reach the capability level of the original ChatGPT.

Can a model trained only on pre-Turing texts “invent” the idea of a universal computing machine? How well can it predict — or be surprised by — events after 1930? These are questions modern web-trained models can never answer cleanly.

Talkie-1930-13b-base and its instruction-tuned sibling talkie-1930-13b-it are available today on Hugging Face under the Apache 2.0 license, with inference code on GitHub and a live demo at talkie-lm.com.

You can chat with a mind that genuinely believes the most exciting technological frontiers still lie in radio, aviation, and the orderly progress of Western civilization — and has never heard of smartphones, the internet, or artificial intelligence itself.

In an era when every new model seems trained on the same ever-growing pile of contemporary data, talkie reminds us that sometimes the most interesting insights come from looking backward — all the way back to a world that ended on the last day of 1930.
