Stop Projecting Human Qualities onto AI — How to Actually Build Effective AI Systems

There’s a quiet but fundamental mistake that almost everyone outside of machine learning keeps making when they try to adopt AI:

They project their own human expectations onto the model.

Because the machine can now generate fluent text, many assume it must think, remember, learn, and behave like a person. It doesn’t. And it won’t — at least not with current LLM architecture.

The Core Problem: We Treat LLMs Like Digital Humans

We expect them to:

- Understand context the way a human does,

- Remember everything important forever,

- Learn permanently from a single example,

- Know instinctively what matters and what doesn’t.

None of these qualities exist in today’s large language models.

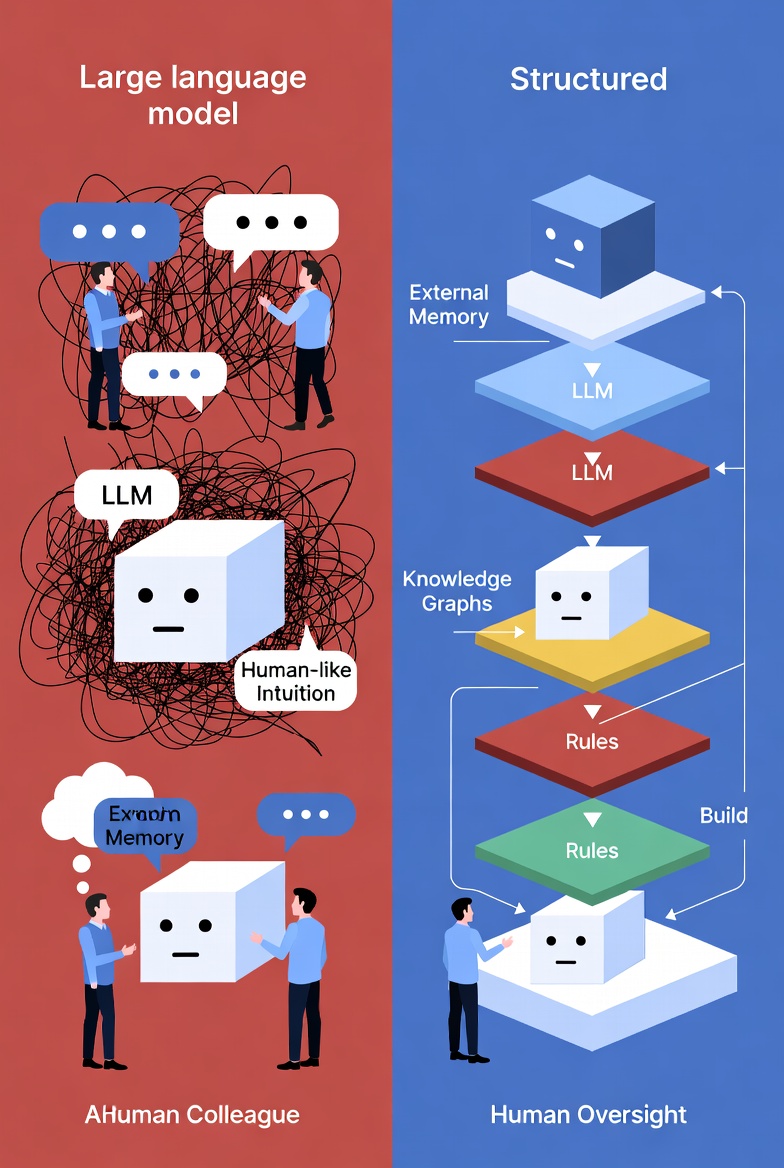

An LLM is not a brain. It is an extremely sophisticated probabilistic token predictor running on fixed weights. It doesn’t “think” and then decide. It generates the next token based on patterns it learned during training and the text currently in its context. That’s it.
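To make "token predictor on fixed weights" concrete, here is a deliberately tiny sketch. It is not how a real LLM is implemented: the hand-written `BIGRAM_PROBS` table is an assumption that stands in for trained weights, and the greedy choice stands in for sampling. The point is only that generation is a loop of lookups against numbers that never change.

```python
from typing import List, Optional

# Toy stand-in for "fixed weights": a hand-written table of next-token probabilities.
# A real model computes these probabilities with a neural network; this table is hypothetical.
BIGRAM_PROBS = {
    "the": {"model": 0.6, "cat": 0.4},
    "model": {"predicts": 0.7, "thinks": 0.3},
    "predicts": {"tokens": 0.9, "the": 0.1},
}


def next_token(prev: str) -> Optional[str]:
    """Return the most probable next token, or None if the table has no entry."""
    options = BIGRAM_PROBS.get(prev)
    if not options:
        return None
    return max(options, key=options.get)


def generate(start: str, max_tokens: int = 5) -> List[str]:
    """Repeatedly append the most probable next token (greedy decoding)."""
    tokens = [start]
    for _ in range(max_tokens):
        tok = next_token(tokens[-1])
        if tok is None:
            break
        tokens.append(tok)
    return tokens


print(generate("the"))
```

Nothing in this loop updates the table. Calling `generate` a thousand times leaves the "weights" exactly as they were, which is the property the rest of this article builds on.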

Humans, thanks to millions of years of biological evolution, are extraordinarily sample-efficient. We can learn a new rule, habit, or intuition from just a few examples and keep it for life. Modern neural networks are the opposite: they require billions of tokens, massive compute, and often synthetic datasets. Even then, because their weights are fixed once training ends, they still lack real-time plasticity and stable long-term learning.

This architectural gap creates a constant mismatch between what we expect and what the model can actually deliver.

Why Projection Is So Dangerous

When we project, we assume that:

- It will remember everything we told it last month,

- It will understand which details are important without being explicitly told,

- It will adapt instantly when something changes.

Reality: it loses whatever no longer fits in the context window, it has no innate sense of importance, and it doesn’t truly learn. It only adapts within the current conversation, and nothing carries over once that conversation ends.
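A rough sketch of why context gets lost, under simplifying assumptions: many chat applications trim old messages so the conversation fits a fixed token budget. The function name `fit_to_window` and the word-count "tokenizer" below are illustrative stand-ins, not any vendor's actual behavior.

```python
from typing import List


def fit_to_window(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: List[str] = []
    used = 0
    for msg in reversed(messages):
        cost = len(msg.split())  # crude stand-in for a real tokenizer
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(msg)
        used += cost
    return list(reversed(kept))


history = [
    "my name is Ada",
    "I prefer metric units",
    "what is 5 miles in km",
]
visible = fit_to_window(history, max_tokens=10)
print(visible)
```

With a budget of 10 "tokens", the oldest message (the user's name) no longer reaches the model at all. The model has not forgotten it in any human sense; it simply never sees it again.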

The result? Fragile, over-promising systems that break in production, require constant human babysitting, and deliver disappointing ROI.

The Right Way to Build AI Solutions

Stop trying to grow a “brain in a jar.”

Instead, treat the LLM as what it actually is: an incredibly powerful but very specific computational tool with clear strengths and glaring limitations.

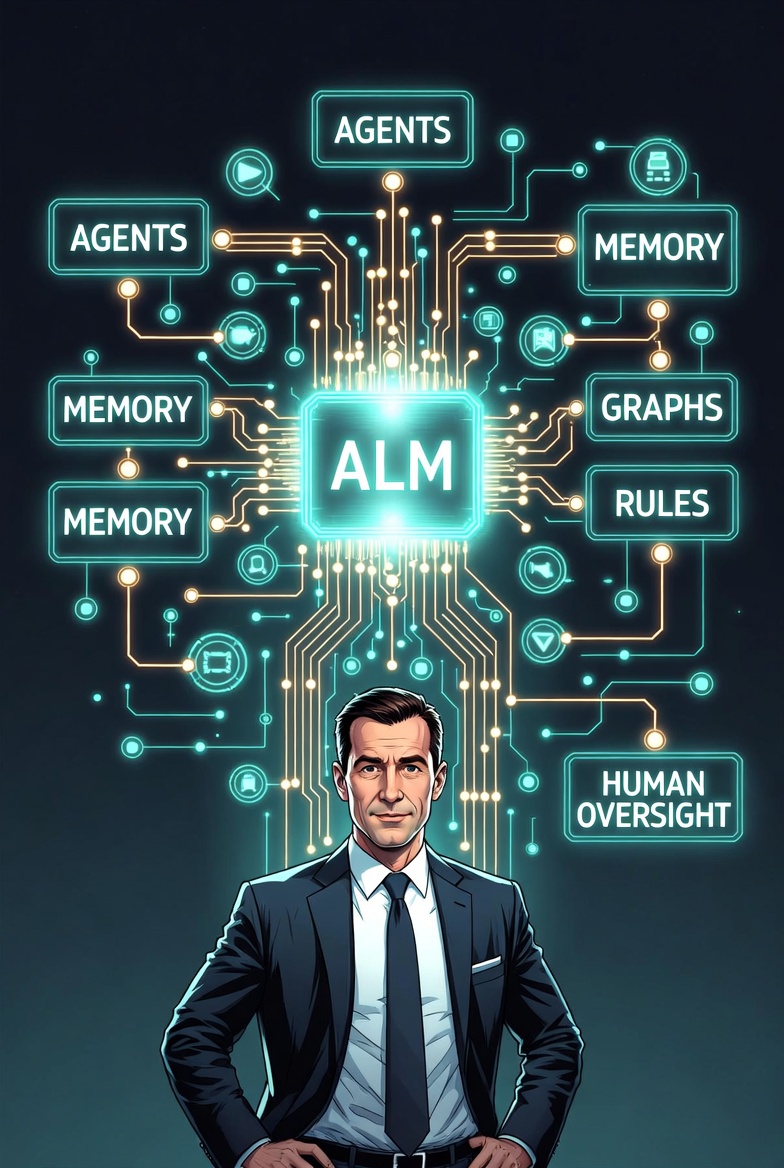

1. Design systems around the model, not inside it

Build external architecture — memory layers, knowledge graphs, rule engines, retrieval systems, human-in-the-loop checkpoints — that compensate for the LLM’s weaknesses.
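As one possible shape for such a layer, here is a minimal sketch of an external memory with retrieval. Everything in it is an assumption made for illustration: `MemoryStore`, the keyword-overlap scoring, and the `llm` callable (any function that takes a prompt string and returns text). A production system would more likely use embeddings or a search index.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class MemoryStore:
    """Hypothetical long-term memory that lives outside the model."""
    facts: List[str] = field(default_factory=list)

    def remember(self, fact: str) -> None:
        self.facts.append(fact)

    def retrieve(self, query: str, k: int = 2) -> List[str]:
        """Return up to k facts sharing at least one word with the query."""
        q = set(query.lower().split())
        scored = [(len(q & set(f.lower().split())), f) for f in self.facts]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [f for _, f in scored[:k]]


def answer(question: str, memory: MemoryStore, llm: Callable[[str], str]) -> str:
    """Put retrieved facts into the prompt, because the model will not recall them itself."""
    context = "\n".join(memory.retrieve(question))
    prompt = f"Known facts:\n{context}\n\nQuestion: {question}"
    return llm(prompt)


memory = MemoryStore()
memory.remember("Customer Acme renews in March")
memory.remember("Office closed on Fridays")

# Stand-in for a real model call: it just echoes the prompt it received.
echo_llm = lambda prompt: prompt
result = answer("When does Acme renew?", memory, echo_llm)
print(result)
```

The design choice worth noticing is that remembering is the system's job, not the model's: the model only ever sees what the retrieval step decides to hand it.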

2. Think in terms of tools, not people

Define exactly what the model is good at and what it is bad at. Then design processes that play to its strengths and route everything else to humans or other tools.
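One way to make that split explicit is a routing table that lives in code rather than in anyone's head. The task names below are hypothetical examples; in practice the lists would come from your own evaluation of where the model performs reliably.

```python
# Hypothetical task categories, chosen only for illustration.
MODEL_TASKS = {"summarize", "classify", "draft"}
TOOL_TASKS = {"calculate", "lookup_order"}


def route(task_type: str) -> str:
    """Send a task to the model, a deterministic tool, or a human."""
    if task_type in MODEL_TASKS:
        return "model"
    if task_type in TOOL_TASKS:
        return "tool"
    # Anything not explicitly assigned defaults to a person.
    return "human"


for task in ("summarize", "calculate", "approve_refund"):
    print(task, "->", route(task))
```

Defaulting unknown tasks to a human is a deliberate choice in this sketch: the model has to earn each task it handles, rather than receiving everything nobody thought to exclude.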

3. Focus on organizational and product design, not prompt engineering

The real leverage is in deciding:

- Where exactly in your workflow the model should be inserted,

- Which decisions it is allowed to make autonomously,

- How you will monitor quality, catch hallucinations, and handle context drift.
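These decisions can be written down as a small review gate that every model output passes through. The sketch below is an assumption-laden illustration: `Proposal`, `AUTONOMOUS_ACTIONS`, and the rule "reject anything that cites an unknown source" are hypothetical, and a citation check is only one crude signal for catching hallucinations.

```python
from dataclasses import dataclass
from typing import List, Set

# Hypothetical list of actions the model may take without sign-off.
AUTONOMOUS_ACTIONS = {"tag_ticket", "draft_reply"}


@dataclass
class Proposal:
    """What the model wants to do, plus the source IDs it claims to rely on."""
    action: str
    cited_ids: List[str]


def review(proposal: Proposal, known_ids: Set[str]) -> str:
    """Decide whether a model proposal is rejected, auto-approved, or escalated."""
    # A citation to a source that does not exist is treated as a possible hallucination.
    if any(c not in known_ids for c in proposal.cited_ids):
        return "reject"
    if proposal.action in AUTONOMOUS_ACTIONS:
        return "auto_approve"
    return "needs_human"


known = {"doc-1", "doc-2"}
print(review(Proposal("tag_ticket", ["doc-1"]), known))
print(review(Proposal("issue_refund", ["doc-2"]), known))
print(review(Proposal("draft_reply", ["doc-9"]), known))
```

Counting how often each outcome occurs over time gives a simple quality monitor: a rising share of rejections suggests the model's inputs or the task itself has drifted.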

4. Accept that the model will never “think like a human”

Stop waiting for the next model to magically become more human-like. Build around the model you have today.

The Bottom Line

The biggest mistake you can make in 2026 is trying to turn an LLM into a digital employee.

The winning approach is to stop projecting human qualities onto it and start engineering complete systems that use the LLM as a powerful, narrow, non-human component.

Build the scaffolding first.

Accept its limitations as design constraints.

Then let the model do what it does best — statistical pattern matching at superhuman scale.

That’s how you create AI systems that are actually reliable, scalable, and valuable — instead of expensive toys that disappoint the moment they leave the demo.

Stop projecting.

Start engineering.