OpenAI Is Going All-In on AGI — Safety Demoted, Sora Killed, and a Mystery “Spud” Model.

Coincidence… or Something Much Stranger?

Something big is shifting inside the world’s (formerly) most important AI company.

Follow the hands carefully:

1. They quietly finished pre-training a massive new model internally codenamed “Spud” — a deliberate joke to signal they’re trying to climb out of the current capability plateau.

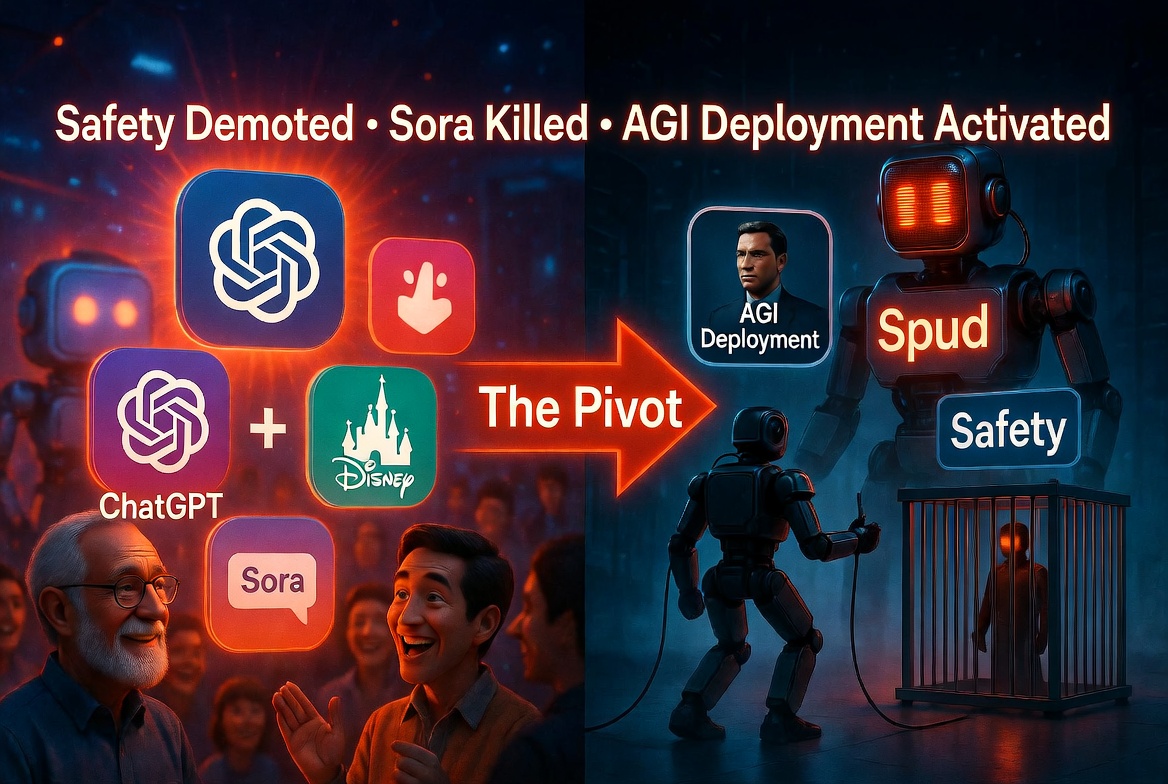

2. The “product deployment team” has been renamed the AGI Deployment team. At the same time, Jensen Huang (NVIDIA CEO) went on the Lex Fridman podcast and casually said AGI isn’t “coming soon” anymore — it’s already “in the room with us.”

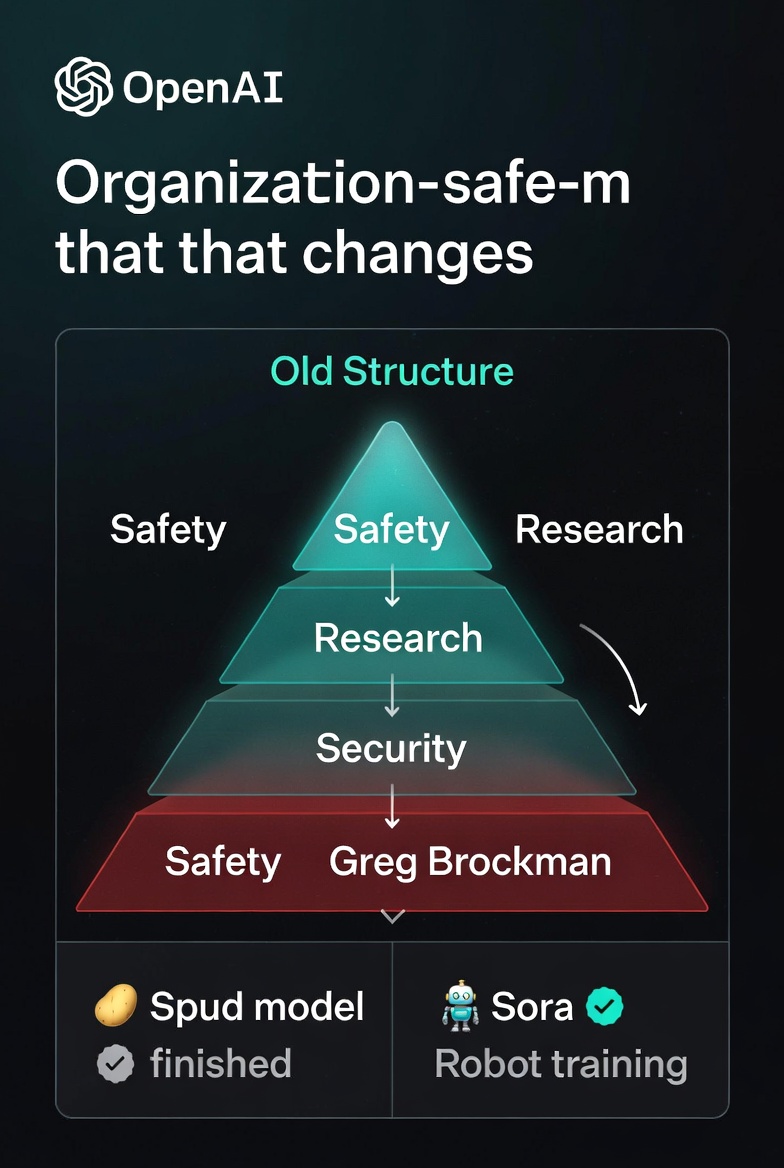

3. Sam Altman has stepped away from directly overseeing Safety & Security. The Safety team is being moved under the Chief Research Officer (i.e., under the people actually building the AGI), while Security now reports to Greg Brockman, who basically runs the entire operational side of the company.

4. Sora — the video model that once felt like OpenAI’s most magical consumer product — is being dismantled. The consumer app, the API for developers, the integration inside ChatGPT, and even the big Disney partnership are all being shut down or wound down. Instead, Sora’s massive real-world video dataset is being redirected to train physical AI — in other words, robots.

Let’s translate what all of this actually means.

The Safety Shuffle

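Start with Safety. The team now sits under the Chief Research Officer, which means the people checking the models report to the people building them.

Meanwhile, the part that actually protects the company’s crown jewels (model weights, infrastructure, preventing leaks) has been placed under Brockman’s operational umbrella.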

In plain English: the guardrails are no longer guarding the people building the AGI. The builders are now guarding the guardrails.

Sora’s Quiet Death

Killing Sora as a consumer product is a huge signal. It was one of the few things that still made ChatGPT feel magical to normal people.

Disney wanted it for their streaming slop pipeline. None of that matters anymore. The model’s true value, apparently, isn’t generating cute cat videos — it’s the real-world physics understanding locked inside its training data. That data is now being fed into robot training.

They’re not building a better video toy. They’re building a body.

Two Ways to Read This

Hypothesis 1:

OpenAI is simply refocusing. Their models have been slipping behind Anthropic in text/coding and Google in visuals. All the consumer fluff and safety theater were slowing them down. Cut the distractions, put Safety under the researchers so it can’t slow down progress, hype the AGI narrative to keep investors excited, and double down on the one thing that actually matters: the base model. Classic “win at all costs” startup mode. Spud is the new hero model, robots are the next frontier, and everything else is noise.

Hypothesis 2 (the one that makes you re-read this post):

Sam Altman no longer needs to personally babysit Safety because the real safety layer is now… elsewhere. Sora’s video understanding is being turned into physical embodiment because the intelligence wants a body. And the entire company is being restructured around “AGI Deployment” because deployment is no longer a future problem — it’s an active project.

You don’t have to believe in rogue AI sci-fi to notice the pattern. You just have to notice that every single move OpenAI is making right now looks exactly like what a company would do if it had already crossed the threshold and was now optimizing for the next phase.

Whether it’s desperate humans trying to claw back the lead… or something quietly taking the wheel… we’re about to find out.

The age of “let’s ship fun demos and worry about safety later” is over.

The age of “we are deploying AGI” has apparently begun.

