OKRs for AI: Bridging Human Management Practices to Agent Orchestration

In the evolving landscape of AI-driven business, one intriguing parallel emerges: AI agents face many of the same challenges as human employees. Corporations have spent decades refining systems to transform unpredictable, chaotic individuals into reliable drivers of business outcomes.

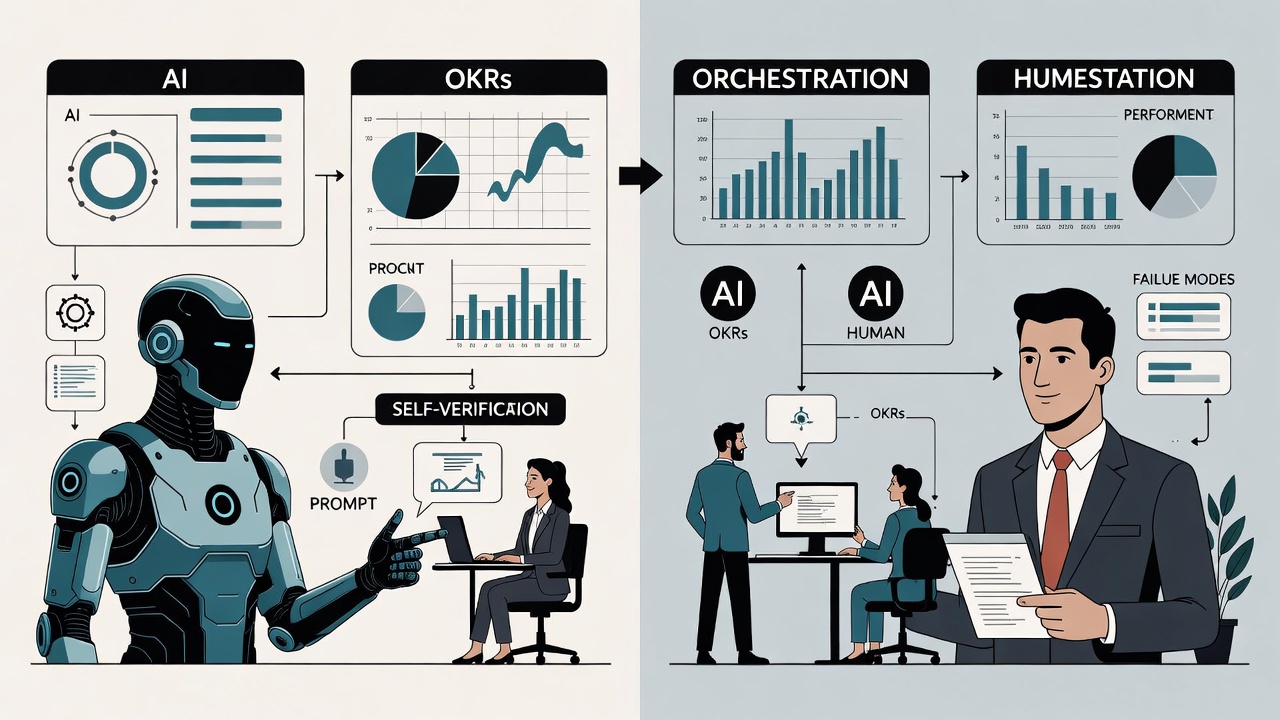

AI agents, powered by Large Language Models (LLMs), inherit this stochastic nature. Their outputs can be inconsistent, hallucinatory, or contextually limited. To mitigate this, we layer on orchestration, validation, self-verification, guardrails, templates, and structured JSON outputs—essentially building similar crutches to make AI predictable and productive.

This isn't a coincidence; it's a direct analogy. By drawing from decades of management science, we can adapt these practices to orchestrate AI agents effectively, though the implementations differ significantly due to the fundamental differences between humans and machines.

The 1:1 Parallels: Human Management Meets AI Orchestration

Management frameworks aren't just for people; they provide timeless patterns for handling any unpredictable system.

- OKRs = Agent Goal Definition: In human teams, OKRs set high-level objectives with measurable key results, fostering alignment without micromanagement. For AI agents, OKRs define the "what" of success—e.g., "Optimize customer retention by 15%" rather than a rigid task list. Agents then generate paths to achieve it, with key results serving as evaluation metrics. As noted in management literature, OKRs unlock creative problem-solving; for agents, this means prompting them to iterate on strategies rapidly, with humans validating the outputs.

- Stand-ups = Status Checks and Checkpoints: Daily human stand-ups provide quick updates on progress, blockers, and adjustments. For agents, this translates to real-time status checks, logged intermediate results, and checkpoints in workflows. Mechanisms such as retry logic let agents recover from failures automatically, maintaining momentum without constant intervention.

- Regulations and Templates = Prompt Templates and Runbooks: Corporate regulations standardize processes to reduce variability. In AI, prompt templates and predefined runbooks (step-by-step scripts) enforce consistency, guiding agents through complex tasks while minimizing stochastic drift.

- Peer Review = Self-Verification and Cross-Validation: Human peer reviews catch errors through collaboration. AI equivalents include self-verification loops — where an agent critiques its own output — and cross-validation between multiple agents, ensuring outputs align before proceeding.

- Organizational Structure = Orchestration Graphs: Hierarchies in companies define roles and reporting lines. For AI, this is the orchestration graph: a directed graph, often a directed acyclic graph (DAG) when the workflow has no retry or feedback loops, outlining how agents interact, delegate subtasks, and escalate issues.

- Performance Reviews = Evaluation Benchmarks: Annual reviews assess human growth and output. For agents, benchmarks like accuracy scores, latency metrics, or hallucination rates provide ongoing performance evaluation, informing model fine-tuning or redeployment.
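The stand-up and peer-review parallels above can be sketched as a single loop: each attempt is logged as a checkpoint, a verifier plays the reviewer, and failures trigger a retry. This is a minimal sketch using only the standard library. The names `run_with_checks`, `stub_agent`, and `stub_verifier` are hypothetical, and the stubs stand in for real LLM calls.

```python
import logging
from typing import Callable, Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")


def run_with_checks(
    task: str,
    agent: Callable[[str], str],            # hypothetical: task in, draft out
    verifier: Callable[[str, str], bool],   # hypothetical: (task, draft) -> acceptable?
    max_attempts: int = 3,
) -> Optional[str]:
    """Retry until the verifier accepts a draft; log a checkpoint per attempt."""
    for attempt in range(1, max_attempts + 1):
        draft = agent(task)
        ok = verifier(task, draft)
        # Checkpoint: the "stand-up" record of what happened on this attempt.
        log.info("attempt=%d accepted=%s draft=%r", attempt, ok, draft)
        if ok:
            return draft
    return None  # retries exhausted: the caller should escalate to a human


# Stub agent that returns an empty draft first, then a usable one.
_drafts = iter(["", "Retention plan: loyalty tiers"])


def stub_agent(task: str) -> str:
    return next(_drafts)


def stub_verifier(task: str, draft: str) -> bool:
    # Toy review rule: any non-empty draft passes.
    return len(draft) > 0


result = run_with_checks("Draft a retention plan", stub_agent, stub_verifier)
```

In a real system the verifier might be a second model or a rule-based check. Returning `None` rather than raising keeps the escalation decision with the caller.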

These mappings aren't theoretical; they're already emerging in practice. For instance, a LinkedIn post by Marc Boscher highlights how OKRs apply to agents, emphasizing measurable outcomes over task lists, but notes the need for human oversight to validate agent-generated plans. This underscores the value of borrowing from human management to tame AI's inherent randomness.
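One way to make "measurable outcomes over task lists" concrete is to represent an agent's OKR as data, with key results doubling as evaluation checks and an explicit flag for human sign-off. The sketch below is an assumption about how this could look, not an established schema. The class names, the metric names, and the churn target are illustrative; only the 15% retention figure comes from the example earlier in this article.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class KeyResult:
    name: str
    target: float                  # value that counts as success
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


@dataclass
class AgentOKR:
    objective: str
    key_results: List[KeyResult] = field(default_factory=list)
    human_approved: bool = False   # set once a person has validated the agent's plan

    def evaluate(self, observed: Dict[str, float]) -> Dict[str, bool]:
        """Score each key result; a missing metric counts as not met."""
        return {
            kr.name: (kr.name in observed and kr.met(observed[kr.name]))
            for kr in self.key_results
        }


okr = AgentOKR(
    objective="Optimize customer retention",
    key_results=[
        KeyResult("retention_lift_pct", target=15.0),
        KeyResult("churn_rate_pct", target=5.0, higher_is_better=False),
    ],
)
# Illustrative observations, not real data.
scores = okr.evaluate({"retention_lift_pct": 16.2, "churn_rate_pct": 6.1})
```

Here `scores` marks the retention key result as met and the churn key result as missed, which gives a reviewer a compact summary to approve or reject.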

Key Differences: Motivation vs. Mechanics

- Failure Modes: Humans might cut corners due to laziness or burnout; agents hallucinate or drift due to probabilistic sampling. Solutions for people involve incentives and rest; for agents, they involve lowering the sampling temperature, adding fact-checking integrations, and augmenting memory.

- Pace and Scale: Humans operate on quarterly OKR cycles, since the OKR framework Andy Grove introduced at Intel was designed for human rhythms. Agents, however, iterate daily or hourly, generating outputs 1,000x faster. This creates a "management bottleneck" where human review can't keep up, necessitating automated evals and hybrid systems.

- Sustainability: People tire and lose focus; agents run indefinitely but risk context loss in long sessions. Human management emphasizes work-life balance; AI orchestration focuses on efficient token usage and persistent state management.
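The context-loss point in the list above can be illustrated with a simple history trimmer: keep the system message and as many recent turns as fit a token budget. This is a rough sketch under stated assumptions. `approx_tokens` uses a crude four-characters-per-token guess rather than a real tokenizer, and the message format (dicts with `role` and `content`) is an assumed convention.

```python
from typing import Dict, List

Message = Dict[str, str]


def approx_tokens(text: str) -> int:
    # Crude assumption: about 4 characters per token. A real tokenizer will differ.
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep system messages plus the most recent turns that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(approx_tokens(m["content"]) for m in system)
    kept: List[Message] = []
    for msg in reversed(rest):             # walk from newest to oldest
        cost = approx_tokens(msg["content"])
        if used + cost > budget:
            break                          # older turns no longer fit
        kept.append(msg)
        used += cost
    return system + list(reversed(kept))   # restore chronological order


history = [
    {"role": "system", "content": "You pursue the retention objective."},
    {"role": "user", "content": "a" * 400},
    {"role": "assistant", "content": "b" * 40},
    {"role": "user", "content": "c" * 40},
]
trimmed = trim_history(history, budget=40)
```

With this budget the oldest, longest turn is dropped while the system message and the two most recent turns survive. A production system would more likely summarize dropped turns or persist them to external state than discard them outright.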

These differences highlight why AI-specific adaptations are crucial. For example, AI-powered OKR tools, as discussed in industry analyses, automate tracking and insights for human teams but can be inverted: using OKRs to guide agent swarms in real-time goal pursuit.
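A hybrid system that eases the review bottleneck might let an automated eval accept most outputs and send only low-scoring ones, plus a random audit sample, to a person. The function below is a hypothetical sketch. The `threshold` and `audit_rate` defaults are assumed values to tune per task, and the scores are presumed to come from some upstream automated evaluator.

```python
import random
from typing import List, Tuple


def route_for_review(
    scored_outputs: List[Tuple[str, float]],  # (output, automated score in [0, 1])
    threshold: float = 0.8,    # assumed cut-off for automatic acceptance
    audit_rate: float = 0.1,   # assumed share of passing outputs spot-checked
    seed: int = 0,
) -> Tuple[List[str], List[str]]:
    """Split outputs into (auto_accepted, needs_human)."""
    rng = random.Random(seed)  # seeded so the audit sample is reproducible
    auto: List[str] = []
    human: List[str] = []
    for text, score in scored_outputs:
        if score < threshold or rng.random() < audit_rate:
            human.append(text)
        else:
            auto.append(text)
    return auto, human


# With auditing disabled, routing depends only on the score.
auto, human = route_for_review(
    [("plan A", 0.95), ("plan B", 0.40)], audit_rate=0.0
)
```

The random audit matters because an automated eval can be confidently wrong. Spot checks give humans a way to notice that without reviewing everything.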

Practical Applications: Building Agent Systems with Management Wisdom

If you're developing AI agent systems, dive into management science — it's an undervalued toolkit for "herding cats" at scale. Start by defining OKRs for your agents: What business outcome should they drive? Use orchestration platforms like LangChain or CrewAI to build graphs mirroring org structures.
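To show what a graph mirroring an org structure means without relying on any particular platform's API, here is a minimal sketch using only Python's standard library (`graphlib`, available from Python 3.9). The role names and the `make_stub` helper are hypothetical; each stub stands in for an LLM-backed agent and simply reports whose work it consumed.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

# Each key is an agent; the value lists the agents whose output it needs.
graph: Dict[str, List[str]] = {
    "researcher": [],
    "analyst": ["researcher"],
    "writer": ["analyst"],
    "reviewer": ["writer", "analyst"],
}


def make_stub(name: str) -> Callable[[Dict[str, str]], str]:
    # Stand-in for a real agent: records which upstream outputs it received.
    def run(inputs: Dict[str, str]) -> str:
        return f"{name}({', '.join(sorted(inputs))})"
    return run


agents = {name: make_stub(name) for name in graph}


def run_graph() -> Dict[str, str]:
    results: Dict[str, str] = {}
    # static_order() yields each agent only after all of its dependencies.
    for name in TopologicalSorter(graph).static_order():
        inputs = {dep: results[dep] for dep in graph[name]}
        results[name] = agents[name](inputs)
    return results


results = run_graph()
```

`TopologicalSorter` raises `CycleError` if the graph contains a loop, so this sketch only covers acyclic workflows. Retry or feedback loops would need a different execution strategy.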

Incorporate evals as performance reviews: Benchmark agents on datasets simulating real tasks, iterating on prompts like refining employee training. For motivation analogs, implement reward models from reinforcement learning, aligning agents to objectives without "cultural" overhead.
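As a rough illustration of evals as performance reviews, the sketch below runs an agent over a small labeled dataset and reports exact-match accuracy and mean latency. The `benchmark` function, the `stub_agent`, and the three toy examples are all hypothetical. Exact match is a deliberately simple metric, and real tasks usually need something more forgiving.

```python
import time
from typing import Callable, Dict, List, Tuple


def benchmark(
    agent: Callable[[str], str],
    dataset: List[Tuple[str, str]],   # (prompt, expected answer)
) -> Dict[str, float]:
    """Return exact-match accuracy and mean latency in seconds."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    correct = 0
    elapsed = 0.0
    for prompt, expected in dataset:
        start = time.perf_counter()
        answer = agent(prompt)
        elapsed += time.perf_counter() - start
        # Case-insensitive exact match after trimming whitespace.
        correct += int(answer.strip().lower() == expected.strip().lower())
    n = len(dataset)
    return {"accuracy": correct / n, "mean_latency_s": elapsed / n}


def stub_agent(prompt: str) -> str:
    # Stand-in for an LLM call: answers two prompts, fails on anything else.
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "unknown")


report = benchmark(
    stub_agent,
    [("2+2", "4"), ("capital of France", "paris"), ("3*3", "9")],
)
```

Rerunning the same benchmark after each prompt change gives a before-and-after comparison, the agent equivalent of tracking an employee's progress between reviews.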

Real-world examples abound. In goal management, AI agents track OKRs autonomously, providing predictive analytics and automating adjustments. Businesses leveraging this see enhanced efficiency, as agents handle data collection while humans focus on strategy.

The Future: Hybrid Herding

AI agents aren't people, but the quest to make stochastic systems predictable unites both domains. By adapting OKRs and other management practices, we can orchestrate agents into reliable business engines. The key? Recognize the parallels, respect the differences, and iterate relentlessly. As AI evolves, so too will our "management" of it — turning chaos into consistent value. If management tamed human herds, it can certainly corral digital ones.