NVIDIA Lyra 2.0 Solves Spatial Forgetting and Temporal Drift in Generative Video

NVIDIA has unveiled Lyra 2.0, a new framework that generates persistent, explorable 3D worlds from a single image. Developed by NVIDIA Research, it tackles one of the biggest headaches in generative video AI: the inability of models to maintain coherent, long-horizon scenes when the virtual camera moves freely, especially when it revisits previously seen areas or makes sharp viewpoint changes.

The Persistent Problem with Generative Video Models

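Generative video models tend to fail in two related ways. First, they forget: when the camera returns to a previously seen area, the model has to reinvent it rather than reproduce it. Second, they drift: over longer sequences, small errors compound, so colors shift, object shapes warp, geometry slides, and the entire scene gradually falls apart. Together, these failures make it nearly impossible to create believable, navigable environments for anything beyond simple TikTok-style videos.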

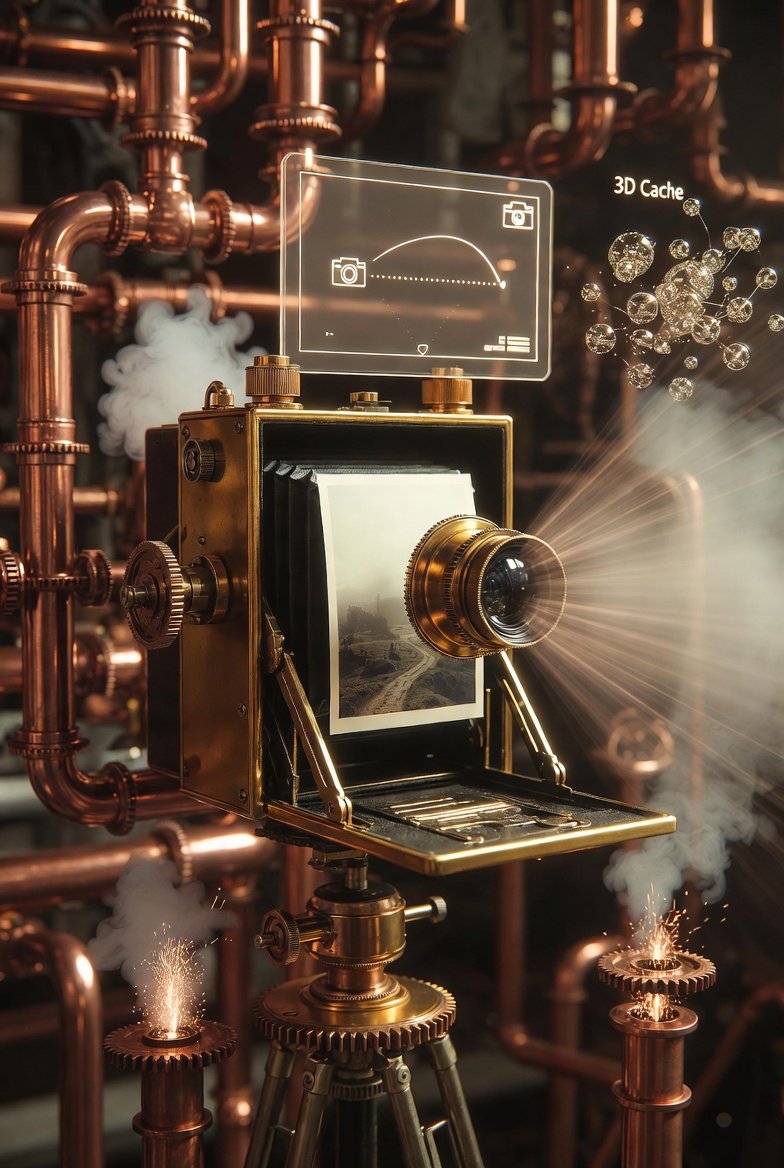

NVIDIA's engineers claim to have cracked this issue with a surprisingly practical approach. Instead of forcing the model to remember everything internally, they bolted on an explicit 3D cache that acts as an external spatial memory.

How Lyra 2.0 Works: 3D Cache + Smart Retrieval

The pipeline starts with a single input image (and an optional text prompt). Users define a camera trajectory through an interactive 3D explorer interface.

- For every generated frame, Lyra 2.0 estimates depth and stores camera parameters along with a downsampled point cloud in the growing 3D cache.

- When generating a new frame (especially after a camera turn or revisit), the system retrieves the most relevant past frames based on visibility from the target viewpoint.

- It warps these historical frames into the current coordinate system using the cached 3D geometry, establishing dense correspondences.

- These correspondences, along with compressed temporal history, are injected into the Diffusion Transformer (DiT) via attention mechanisms. The model still relies on its strong generative prior for appearance synthesis, but the geometry acts as a reliable "scaffold" to prevent hallucination in already-explored regions.

This geometry-aware retrieval effectively solves spatial forgetting — the model no longer has to reinvent the world from scratch when the camera looks back.
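The cache-and-retrieve steps above can be sketched in a few lines. This is a toy illustration under stated assumptions, not NVIDIA's implementation: the `Camera`, `CachedFrame`, `unproject`, `project`, `visibility`, and `retrieve` names are hypothetical, the camera is a simple pinhole that only rotates about the vertical axis, and visibility is scored as the fraction of a frame's cached points that land inside the target image, with no occlusion handling.

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    x: float
    y: float
    z: float
    yaw: float      # rotation about the vertical axis in radians (0 = looking down +Z)
    focal: float    # focal length in pixels
    width: int
    height: int


@dataclass
class CachedFrame:
    frame_id: int
    camera: Camera
    points: list    # downsampled world-space points [(x, y, z), ...]


def unproject(depth, cam, stride=2):
    """Lift every `stride`-th pixel of a depth map to a world-space point."""
    cx, cy = cam.width / 2, cam.height / 2
    c, s = math.cos(cam.yaw), math.sin(cam.yaw)
    points = []
    for v in range(0, cam.height, stride):
        for u in range(0, cam.width, stride):
            d = depth[v][u]
            # Pixel centre -> camera-space coordinates at depth d.
            xc = (u + 0.5 - cx) * d / cam.focal
            yc = (v + 0.5 - cy) * d / cam.focal
            # Camera space -> world space (yaw rotation, then translation).
            points.append((cam.x + c * xc + s * d, cam.y + yc, cam.z - s * xc + c * d))
    return points


def project(point, cam):
    """Project a world point into `cam`; return (u, v), or None if it is not in view."""
    dx, dy, dz = point[0] - cam.x, point[1] - cam.y, point[2] - cam.z
    c, s = math.cos(cam.yaw), math.sin(cam.yaw)
    xc, zc = c * dx - s * dz, s * dx + c * dz
    if zc <= 1e-6:  # behind the camera
        return None
    u = cam.focal * xc / zc + cam.width / 2
    v = cam.focal * dy / zc + cam.height / 2
    if 0 <= u < cam.width and 0 <= v < cam.height:
        return (u, v)
    return None


def visibility(frame, target):
    """Fraction of a cached frame's points that fall inside the target view."""
    if not frame.points:
        return 0.0
    hits = sum(project(p, target) is not None for p in frame.points)
    return hits / len(frame.points)


def retrieve(cache, target, k=2):
    """Return the k cached frames most visible from the target viewpoint."""
    return sorted(cache, key=lambda f: visibility(f, target), reverse=True)[:k]


# Usage: three past views of a flat wall 2 units away, then a query from the origin.
views = [
    Camera(0.0, 0.0, 0.0, 0.0, 8.0, 8, 8),          # looking forward
    Camera(0.0, 0.0, 0.0, math.pi / 2, 8.0, 8, 8),  # turned 90 degrees
    Camera(0.5, 0.0, 0.0, 0.0, 8.0, 8, 8),          # shifted sideways
]
cache = []
for i, cam in enumerate(views):
    depth = [[2.0] * cam.width for _ in range(cam.height)]
    cache.append(CachedFrame(i, cam, unproject(depth, cam)))

target = Camera(0.0, 0.0, 0.0, 0.0, 8.0, 8, 8)
print([f.frame_id for f in retrieve(cache, target)])
```

The same `project` function doubles as a crude warp: the pixel coordinates it returns for each cached point are the correspondences between a past frame and the target view. A real system would also need full 6-DoF poses and an occlusion test.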

Fixing Temporal Drift with Self-Augmented Training

During training, NVIDIA researchers deliberately feed the model its own slightly degraded predictions as part of the history. This self-augmented approach teaches the network to correct and clean up its own mistakes rather than propagating and amplifying them frame by frame.

Combined with context compression for longer histories, this self-augmented training yields significantly more stable long-range video generation.
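The core of the self-augmented idea, conditioning on imperfect history while supervising against a clean target, can be shown with a minimal sketch. Everything here is an assumption for illustration: `make_training_example`, `p_self`, and the `predict` callable are hypothetical names, frames are flat lists of floats, and the "model" is simulated by adding small Gaussian noise rather than by running a real network.

```python
import random


def make_training_example(clip, predict, p_self=0.5, rng=None):
    """Split a clip into (history, target), swapping some history frames
    for the model's own imperfect versions.

    clip    : list of ground-truth frames; the last one is the target
    predict : callable returning the model's (degraded) version of a frame
    p_self  : probability that a history frame is replaced by a prediction
    """
    rng = rng or random.Random()
    *history, target = clip
    conditioned = [predict(f) if rng.random() < p_self else f for f in history]
    # The target stays clean, so the loss rewards correcting the degraded history.
    return conditioned, target


def make_noisy_model(sigma=0.05, seed=0):
    """Stand-in for a real generator: returns the frame plus small noise."""
    noise_rng = random.Random(seed)
    return lambda frame: [px + noise_rng.gauss(0.0, sigma) for px in frame]


# Usage: a three-frame clip of two-pixel "frames".
clip = [[0.0, 1.0], [0.5, 0.5], [1.0, 0.0]]
history, target = make_training_example(
    clip, make_noisy_model(), p_self=0.5, rng=random.Random(42)
)
print(history, target)
```

Because the supervision signal is always the clean frame, a model trained this way sees the kind of errors it will encounter at inference time and is penalized for passing them along.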

From Video to Interactive 3D Worlds

The output can be exported as:

- 3D Gaussian Splatting scenes for high-quality, real-time rendering;

- Point clouds or meshes;

- Fully navigable environments suitable for VR experiences.

The scenes are coherent enough that users can freely explore them, revisit locations, and even extend the world into previously unseen areas while maintaining consistency with what came before.
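To make the point cloud export option concrete, here is a small sketch that serializes colored points to ASCII PLY, a common interchange format that most 3D viewers can open. The article does not say which file formats Lyra 2.0 actually writes, so the choice of PLY and the `to_ascii_ply` helper are assumptions for illustration only.

```python
def to_ascii_ply(points, colors):
    """Serialize (x, y, z) points and (r, g, b) 0-255 colors as ASCII PLY text."""
    if len(points) != len(colors):
        raise ValueError("points and colors must have the same length")
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ]
    rows = [
        f"{x:.6f} {y:.6f} {z:.6f} {r} {g} {b}"
        for (x, y, z), (r, g, b) in zip(points, colors)
    ]
    return "\n".join(header + rows) + "\n"


# Usage: two colored points, printed as PLY text.
print(to_ascii_ply([(0.0, 0.0, 2.0), (1.0, -1.0, 2.5)], [(255, 0, 0), (0, 128, 255)]))
```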

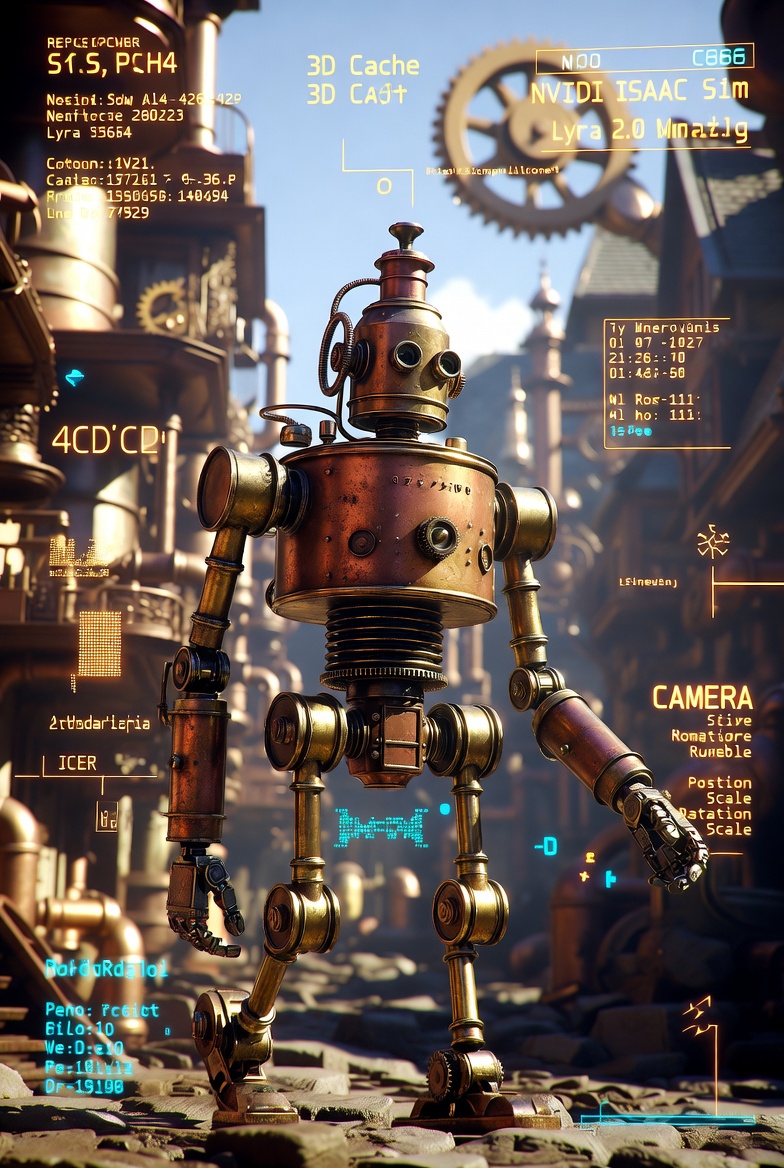

Beyond entertainment, the system supports practical downstream use cases. Generated scenes can be exported directly into physics-based simulators such as NVIDIA Isaac Sim, enabling physically grounded robot navigation, interaction, and training for embodied AI. This makes Lyra 2.0 particularly relevant for simulation, robotics, and scalable world model development.

Implications for Creators and Developers

For 3D artists, level designers, and game developers, this doesn't mean the end of traditional tools just yet — but it signals a shift. Generating large, coherent environments from a single image and a camera path could dramatically speed up prototyping and world-building. The ability to drop a robot into a physically plausible version of the generated scene opens new doors for AI training and simulation.

Lyra 2.0 is detailed in a new arXiv paper (arXiv:2604.13036), with interactive demos, video examples, and a gallery available on the official NVIDIA Research project page. The full model weights and code details are hosted on Hugging Face under NVIDIA's organization. Taken together, the framework represents a meaningful step toward truly persistent generative 3D worlds.

In short, NVIDIA has shown that combining video diffusion models with explicit 3D memory and clever self-correction can turn fleeting generative clips into explorable, expandable realities. The era of AI-built virtual worlds you can actually walk through — and come back to without everything falling apart — is getting closer.