Mistral Medium 3.5: The 128B Multimodal Model That Actually Fits on Your Hardware

Mistral AI has released Mistral Medium 3.5, a dense multimodal model with 128 billion parameters and a 256k-token context window.

Performance and Positioning

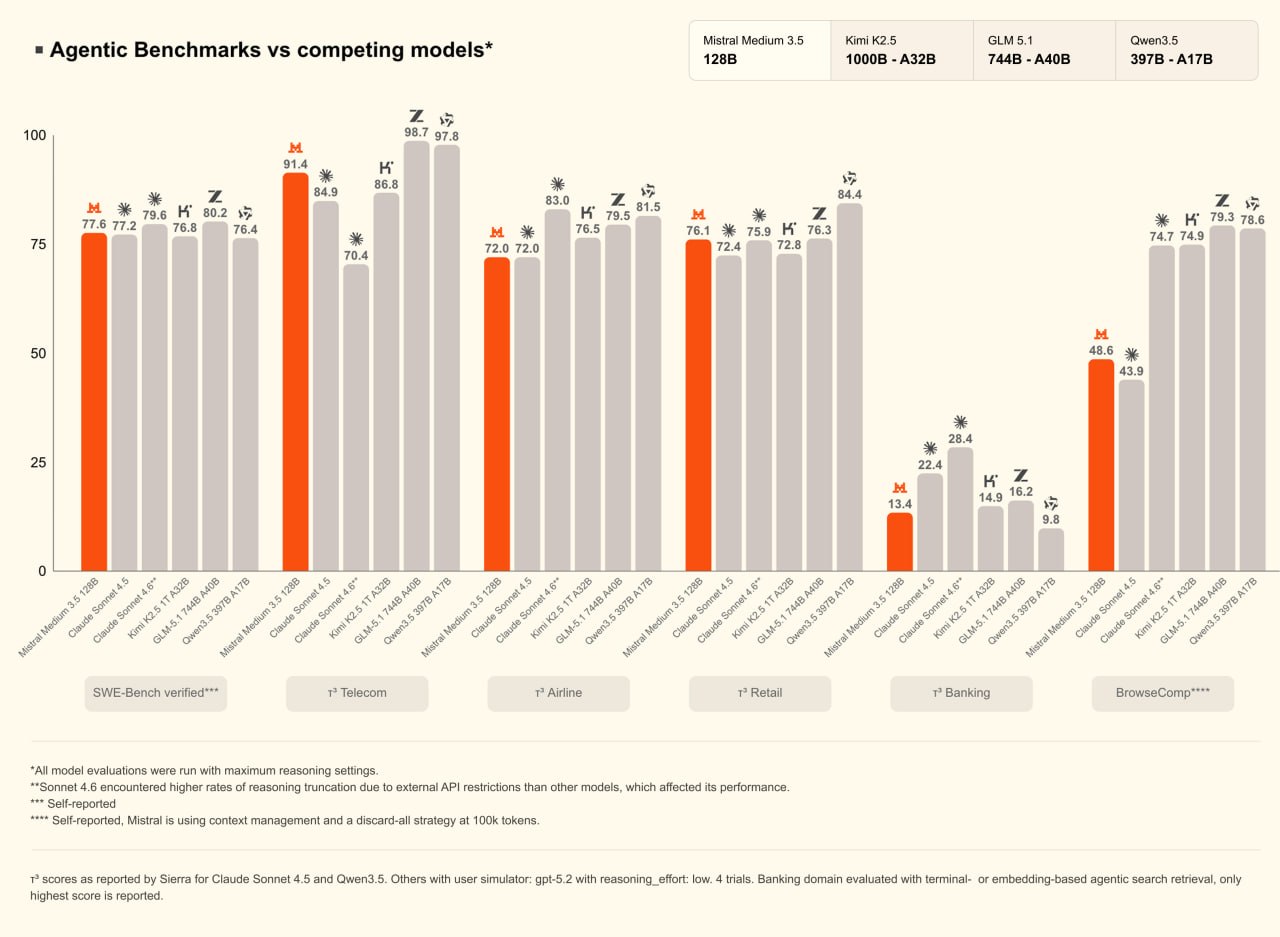

Medium 3.5 clearly outperforms all previous Mistral models. It handles long-context reasoning, multimodal inputs (text + vision), and complex agentic workflows noticeably better than its predecessors.

However, it still doesn’t reach the absolute ceiling set by the very largest open models (think 400B+ parameter beasts). That’s not a bug — it’s the point. Mistral deliberately positioned Medium 3.5 in a weight class where it has almost no direct competition. Most models that match or beat its capabilities are several times larger, which makes this 128B model unusually practical for real-world use.
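To put "practical" into numbers, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need. The 128B parameter count comes from the release; the storage formats and their byte sizes are illustrative assumptions, not a statement about which quantized builds Mistral actually ships. The estimate also ignores the KV cache and activations, which grow with context length.

```python
# Rough weight-memory estimate for a dense model.
# Ignores KV cache, activations, and runtime overhead.
PARAMS = 128e9  # parameter count stated in the article

# Bytes per parameter for common storage formats.
# Assumption: these formats are illustrative, not confirmed official builds.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return the memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


for fmt, size in BYTES_PER_PARAM.items():
    print(f"{fmt}: {weight_memory_gb(PARAMS, size):.0f} GB")
```

Under these assumptions the weights take roughly 256 GB at 16-bit precision and about 64 GB at 4-bit, which is the difference between a multi-GPU server and a single high-memory workstation.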

Built for Local Deployment

To keep inference from crawling, Mistral also released a dedicated speculative decoding head.

Speculative decoding drafts several tokens cheaply and has the full model verify them, so generation speeds up while quality stays high. It is a smart move that turns a theoretically heavy model into something usable outside of massive GPU clusters.
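For readers unfamiliar with the technique, the following toy sketch shows the general draft-and-verify idea behind greedy speculative decoding. It is not Mistral's implementation: `target_model` and `draft_model` are hypothetical stand-in functions over integer tokens, and a real system verifies all drafted tokens in a single batched forward pass rather than one call per token.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]


def greedy_decode(model: NextToken, prompt: List[Token], max_new: int) -> List[Token]:
    """Baseline: call the model once per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(model(out))
    return out


def speculative_decode(target: NextToken, draft: NextToken,
                       prompt: List[Token], max_new: int, k: int = 4) -> List[Token]:
    """Greedy speculative decoding: the draft proposes up to k tokens,
    the target keeps the longest prefix it agrees with."""
    out = list(prompt)
    limit = len(prompt) + max_new
    while len(out) < limit:
        # 1. The cheap draft proposes a short run of tokens.
        proposal: List[Token] = []
        for _ in range(min(k, limit - len(out))):
            proposal.append(draft(out + proposal))
        # 2. The target checks each proposed position in order.
        accepted: List[Token] = []
        for i, tok in enumerate(proposal):
            expected = target(out + proposal[:i])
            if expected != tok:
                # First mismatch: keep the target's token, drop the rest.
                accepted.append(expected)
                break
            accepted.append(tok)
        out.extend(accepted)
    return out


# Hypothetical toy models: the draft agrees with the target most of the time.
def target_model(ctx: List[Token]) -> Token:
    return (ctx[-1] * 3 + 1) % 7


def draft_model(ctx: List[Token]) -> Token:
    guess = (ctx[-1] * 3 + 1) % 7
    # Deliberately wrong whenever the context length is a multiple of 5.
    return guess if len(ctx) % 5 else (guess + 1) % 7


fast = speculative_decode(target_model, draft_model, [2], max_new=20)
slow = greedy_decode(target_model, [2], max_new=20)
print(fast == slow)
```

Because every emitted token is either confirmed or supplied by the target, the output matches plain greedy decoding with the target alone. The speedup in practice comes from accepting several drafted tokens per expensive verification step.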

Pricing and Cloud Strategy

If you’re considering the official API, the numbers are straightforward:

- $1.50 per million input tokens

- $7.50 per million output tokens

At those prices, there’s very little incentive to run Medium 3.5 in the cloud. The model was clearly designed to be self-hosted. The open weights are already available on Hugging Face, and the economics strongly favor downloading and running it yourself.
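To see what the listed API prices mean per request, here is a minimal cost calculator. The two rates are the ones quoted above; the example workload (a 200k-token prompt with a 2k-token answer) is a hypothetical chosen to illustrate a long-context call.

```python
# API prices quoted in the article, in USD per million tokens.
INPUT_USD_PER_MTOK = 1.5
OUTPUT_USD_PER_MTOK = 7.5


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the API cost in USD for one request or a batch of requests."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical workload: one long-context request.
print(f"${api_cost_usd(200_000, 2_000):.3f}")  # prints "$0.315"
```

At roughly 30 cents per long-context request, an agent that makes thousands of such calls a day adds up quickly, which is the economic case for self-hosting that the pricing seems designed to make.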

Licensing

It’s a clean, predictable rule that protects Mistral’s business model without scaring away the broader developer community.

Links

- Model weights & collection: https://huggingface.co/collections/mistralai/mistral-medium-35

- Official announcement: https://mistral.ai/news/vibe-remote-agents-mistral-medium-3-5

The Bottom Line

Mistral Medium 3.5 isn’t trying to be the biggest model on the leaderboard. It’s trying to be the most usable high-performance model in its size range — and it largely succeeds.

For developers, researchers, and companies that want frontier-level capabilities without needing a supercomputer or paying premium cloud rates, this release is genuinely exciting. It continues Mistral’s pattern of shipping practical, no-nonsense models that close the gap between “impressive benchmark” and “actually runnable on real hardware.”

If you’re into local LLMs or building production agents that need long context and multimodal understanding, Medium 3.5 deserves a serious look. The “small but mighty” trend keeps gaining ground, and Mistral just made it a lot more interesting.