Mistral AI Teaches Small Models to Think Like Giants Using Cascade Distillation

In a significant advancement for efficient AI deployment, Mistral AI has unveiled the Ministral 3 family of models, leveraging an innovative technique called cascade distillation to create compact yet powerful vision-language models. This approach allows smaller models to inherit the "thinking" capabilities of larger ones, enabling high performance in resource-constrained environments like edge devices and local setups.

By distilling knowledge from a robust "teacher" model through multiple stages, Mistral is bridging the gap between heavyweight AI training and lightweight production inference.

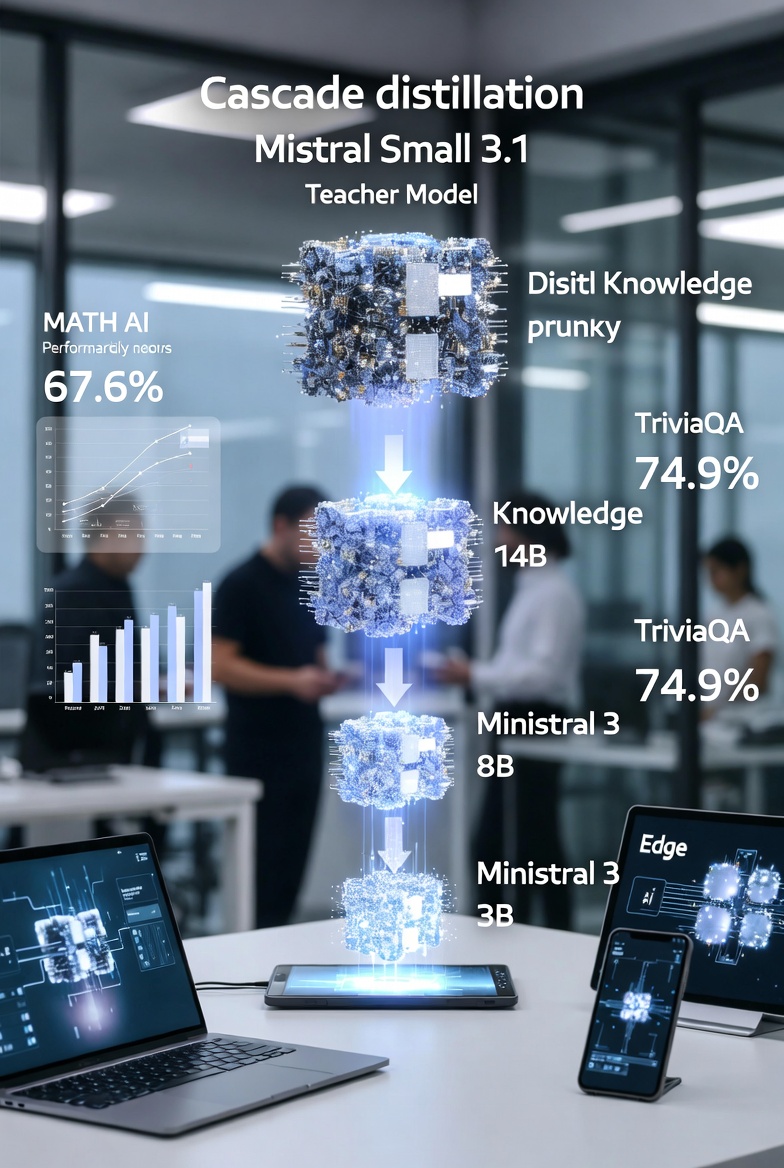

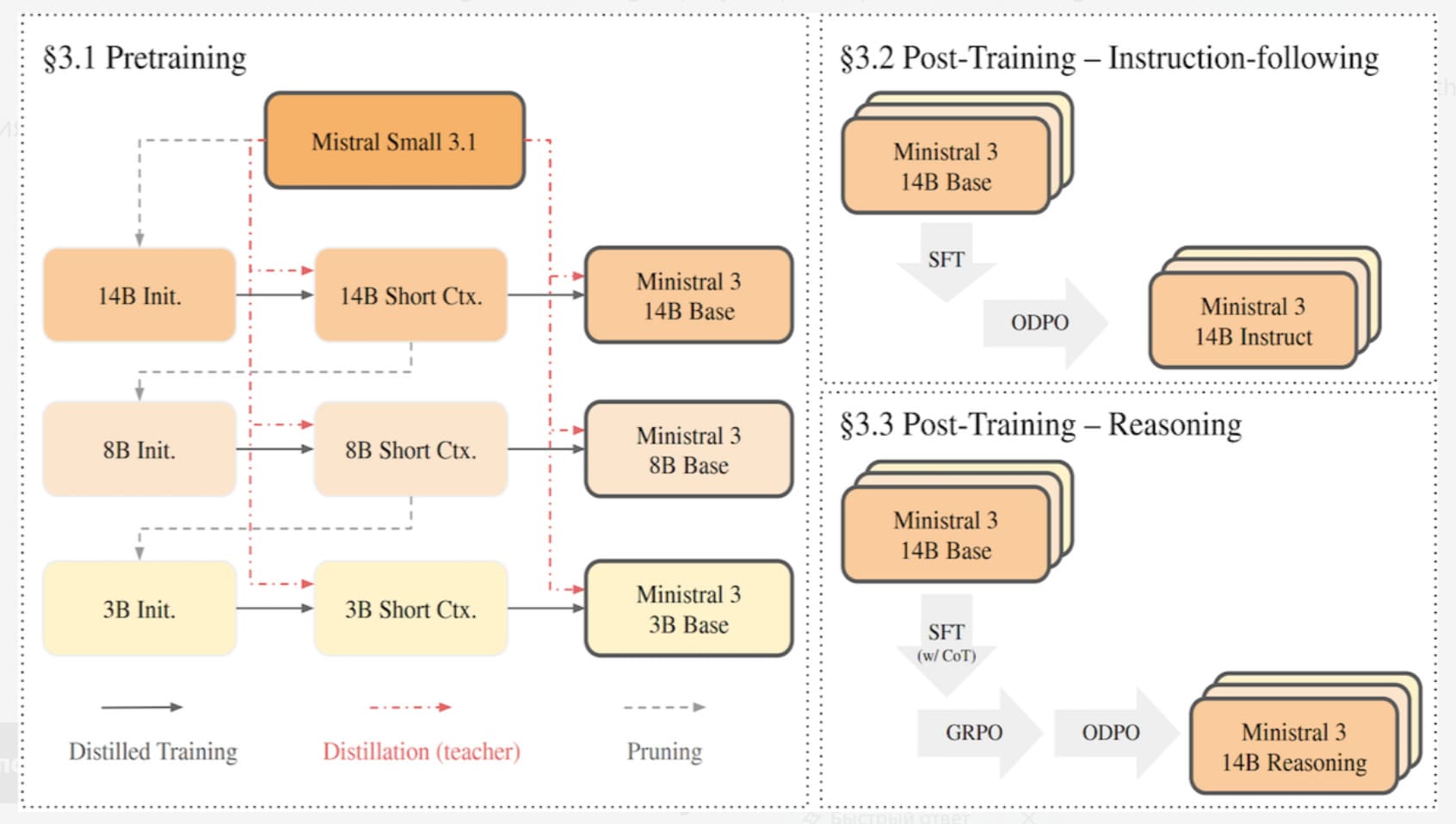

Understanding Cascade Distillation

Cascade distillation alternates two steps, pruning and distillation, starting from a larger teacher model. Pruning strategically removes the layers that change their inputs the least, reduces the size of the internal representations, and narrows the fully connected layers. This yields an initial smaller model, such as the 14B variant, which then serves as the starting point for the next size down.
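
One way to picture the layer-removal step is to score each layer by how much it changes its input and drop the layers that change it least. The sketch below is illustrative only: the article does not give Mistral's exact criterion, so the use of cosine similarity between a layer's input and output, the toy vectors, and the function names are all assumptions.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def select_layers_to_prune(layer_io, num_to_remove):
    """Return indices of the layers whose output is most similar to their input.

    layer_io: list of (input_vector, output_vector) pairs, one per layer,
              e.g. hidden states averaged over a calibration batch (assumed).
    """
    scores = [cosine_similarity(inp, out) for inp, out in layer_io]
    # Highest similarity means the layer changes its input the least.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:num_to_remove])

# Toy data (hypothetical): layer 1 barely changes its input.
layer_io = [
    ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]),   # output orthogonal to input
    ([1.0, 2.0, 3.0], [1.1, 2.0, 2.9]),   # output nearly identical to input
    ([1.0, 1.0, 0.0], [1.0, -1.0, 0.5]),  # output orthogonal to input
]
print(select_layers_to_prune(layer_io, num_to_remove=1))
```

In a real model the vectors would come from running calibration data through the network, and width reduction (smaller hidden and feed-forward dimensions) would need its own importance scores.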

Unlike traditional single-step distillation, the cascade method proceeds iteratively: each successive model builds on the previous, larger one. During pretraining, each pruned model is distilled by training it to mimic the teacher's outputs. Using Mistral Small 3.1 as the teacher gave better results than using the even larger Mistral Medium 3.
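
To make "mimicking the teacher's outputs" concrete, here is a minimal sketch of a distillation loss for a single token position. The article does not state which loss Mistral uses; the forward KL divergence between teacher and student next-token distributions shown here is a common choice and is an assumption, as are the toy logits and function names.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, with an optional temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=1.0):
    """Forward KL divergence KL(teacher || student) over one token's vocabulary."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Toy logits over a 4-token vocabulary (hypothetical values).
teacher = [2.0, 1.0, 0.1, -1.0]
close_student = [1.9, 1.1, 0.0, -1.0]
far_student = [-1.0, 0.1, 1.0, 2.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge, which is the signal the student is trained to minimize.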

Fine-tuning adds further techniques: ODPO (Online Direct Preference Optimization) for the instruction-following variants and GRPO (Group Relative Policy Optimization) for the reasoning variants, using step-by-step examples in math, coding, and other domains. Mistral Medium 3 assists during the fine-tuning stages to improve quality.
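
The "group relative" part of GRPO can be sketched in a few lines: several answers are sampled for the same prompt, each is scored, and every answer's advantage is its reward measured against the group's mean and spread. This is a sketch of the commonly published formulation, not Mistral's training code; the reward values, the `eps` constant, and the function name are assumptions, and some variants skip the division by the standard deviation.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and std of its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    # eps avoids division by zero when every answer gets the same reward.
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled answers to one math prompt: 1.0 = correct, 0.0 = incorrect.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Answers that beat the group average receive a positive advantage and are reinforced; answers below it are discouraged. No separate value model is needed, because the group itself provides the baseline.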

This multi-stage process ensures that knowledge is transferred efficiently, resulting in models that "think" like their larger counterparts but with reduced computational demands.
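
The overall schedule can be written down as a small plan: each student is pruned from the model one size up and then distilled from the teacher. The sketch below only builds that plan as data. The model labels follow the article, while the assumption that the first pruning step starts from Mistral Small 3.1 and the function name `cascade_plan` are illustrative.

```python
def cascade_plan(parent, student_sizes, teacher):
    """List the prune-then-distill steps, largest student first."""
    steps = []
    for size in student_sizes:
        student = f"Ministral 3 {size}"
        steps.append({"prune_from": parent, "student": student, "distill_from": teacher})
        parent = student  # the new model seeds the next, smaller one
    return steps

plan = cascade_plan(
    parent="Mistral Small 3.1",  # assumed starting point for the first pruning step
    student_sizes=["14B", "8B", "3B"],
    teacher="Mistral Small 3.1",
)
for step in plan:
    print(f"{step['prune_from']} -> prune -> {step['student']} (distill from {step['distill_from']})")
```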

Here is a visualization of the cascade distillation process:

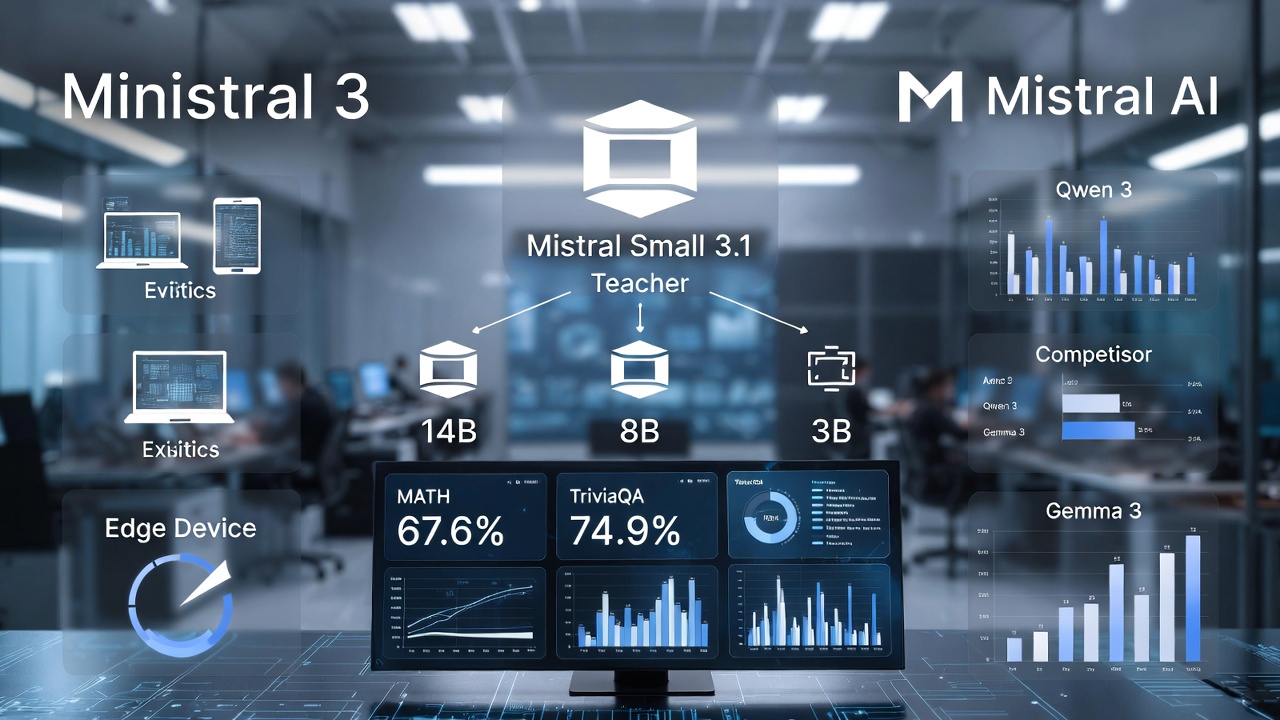

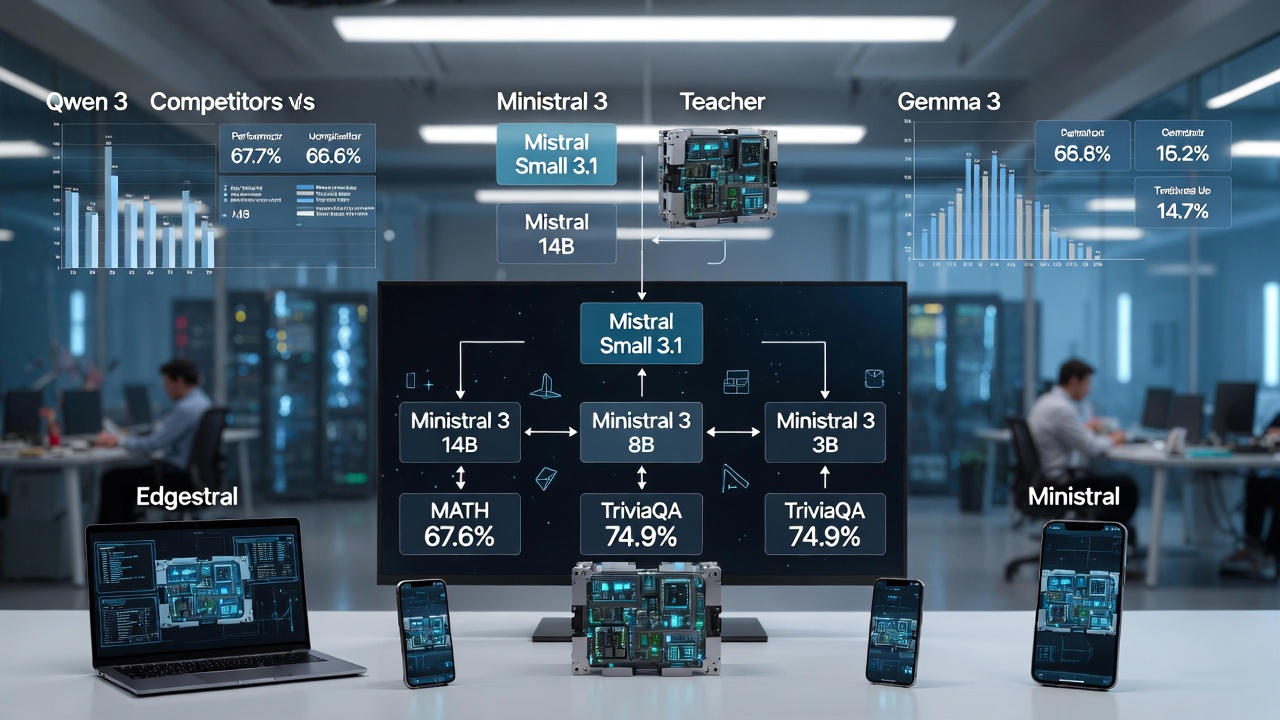

The Ministral 3 Family

The Ministral 3 lineup includes models with 14 billion, 8 billion, and 3 billion parameters, each available in base, instruction-tuned, and reasoning variants. These are open-weights vision-language models released under the Apache 2.0 license. They accept text and image inputs (up to 256,000 tokens for base, 128,000 for reasoning) and generate text outputs. Built on a decoder-only transformer architecture, they support 11 languages and tool use.

API pricing reflects their efficiency: $0.20 per million tokens for the 14B, $0.15 for the 8B, and $0.10 for the 3B. Training used just 1 to 3 trillion tokens—far fewer than competitors like Qwen 3 or Llama 3, which require 15 to 36 trillion—making the process quicker and less resource-intensive.
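
As a quick worked example of what these prices mean, the sketch below computes the API cost of a workload at each model size. The per-million-token prices are the ones quoted above; the 50-million-token workload is hypothetical, and applying one flat rate to all tokens is a simplifying assumption.

```python
# Prices quoted in the article, in USD per million tokens.
PRICE_PER_MILLION_TOKENS_USD = {"14B": 0.20, "8B": 0.15, "3B": 0.10}

def api_cost_usd(model_size, tokens):
    """Cost of processing `tokens` tokens at a flat per-million-token price."""
    return PRICE_PER_MILLION_TOKENS_USD[model_size] * tokens / 1_000_000

monthly_tokens = 50_000_000  # hypothetical workload
for size in PRICE_PER_MILLION_TOKENS_USD:
    print(f"Ministral 3 {size}: ${api_cost_usd(size, monthly_tokens):.2f}")
```

At these rates the 3B model costs half as much as the 14B for the same token volume, so the choice of size is a direct trade-off between benchmark accuracy and running cost.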

Performance Highlights

The 14B Base matches or exceeds Mistral Small 3.1 on benchmarks such as MATH (67.6%), TriviaQA (74.9%), and GPQA Diamond. It also ranks ahead of Mistral Small 3.1 and 3.2 on the Artificial Analysis Intelligence Index.

Comparisons show:

- Ministral 3 14B outperforms Qwen 3 14B on MATH (67.6% vs. 62%) and TriviaQA (74.9% vs. 70.3%), though it trails Gemma 3 12B slightly on some benchmarks.

- The 8B Base beats the larger Gemma 3 12B on most tests except TriviaQA.

- The 3B Base competes strongly with Gemma 3 4B and Qwen 3 4B, excelling on MATH.

- Reasoning variants shine on AIME 2025 (85% accuracy for 14B vs. 73.7% for Qwen 3 14B Thinking).

These metrics demonstrate that cascade distillation preserves high accuracy while shrinking model size.

Benefits for Real-World Deployment

The Ministral family's key advantages lie in efficiency: faster inference times, lower production costs, and suitability for edge devices like laptops and smartphones. By using large models primarily for training and deploying small, distilled versions in production, organizations can scale AI services without prohibitive expenses. This approach reduces energy consumption and enables local, on-device AI, expanding accessibility for applications in mobile, IoT, and resource-limited settings.
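
A rough way to see why parameter count matters for laptops and phones is to estimate the memory needed just to hold the weights. The sketch below is back-of-the-envelope arithmetic, not a measurement: it uses the nominal parameter counts from the article, ignores activations and the attention cache, and the 16-bit and 4-bit precisions are illustrative assumptions rather than formats the article mentions.

```python
# Nominal parameter counts from the article.
PARAMS = {"14B": 14e9, "8B": 8e9, "3B": 3e9}

def weight_memory_gb(num_params, bits_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

for size, count in PARAMS.items():
    gb_16 = weight_memory_gb(count, 16)  # assumed 16-bit weights
    gb_4 = weight_memory_gb(count, 4)    # assumed 4-bit quantized weights
    print(f"Ministral 3 {size}: {gb_16:.1f} GB at 16-bit, {gb_4:.1f} GB at 4-bit")
```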

Conclusion

Mistral AI's cascade distillation represents a smart evolution in model development, empowering small models to perform like giants. The Ministral 3 family not only achieves high accuracy with fewer parameters but also paves the way for more sustainable and scalable AI. As the field moves toward edge computing, techniques like this could democratize advanced AI, making powerful tools available beyond data centers. The models are freely downloadable, so developers and businesses can start exploring and integrating them now.