Martian Releases Largest Open-Source Benchmark for AI Code Review Agents

Martian, a leader in AI-driven code review tools, has launched Code Review Bench, touted as the largest benchmark for evaluating AI agents that review code.

By combining real-world data with a two-layer evaluation design, Code Review Bench aims to measure genuine review capability rather than memorization of test cases.

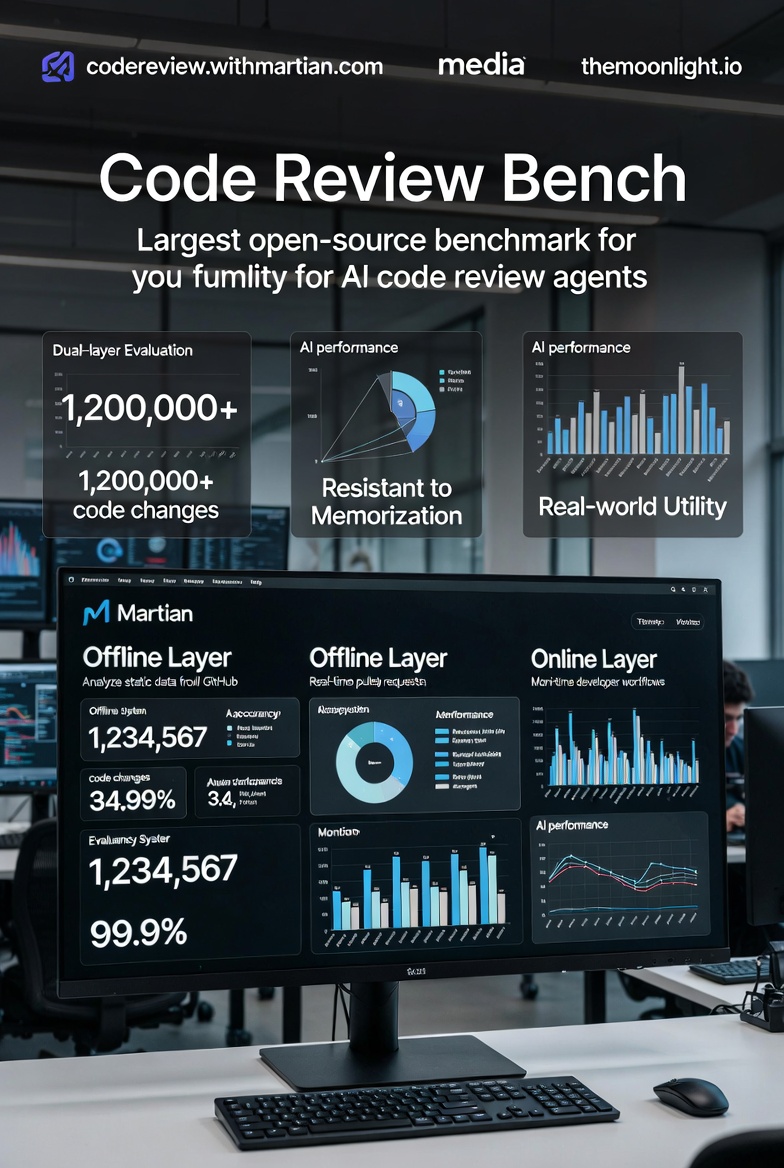

Solving the Memorization Problem with Dual-Layer Evaluation

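Static benchmarks lose value once models have memorized, or been tuned to, their test cases. Martian's answer is a Dual-Layer Evaluation system intended to make that kind of gaming detectable: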

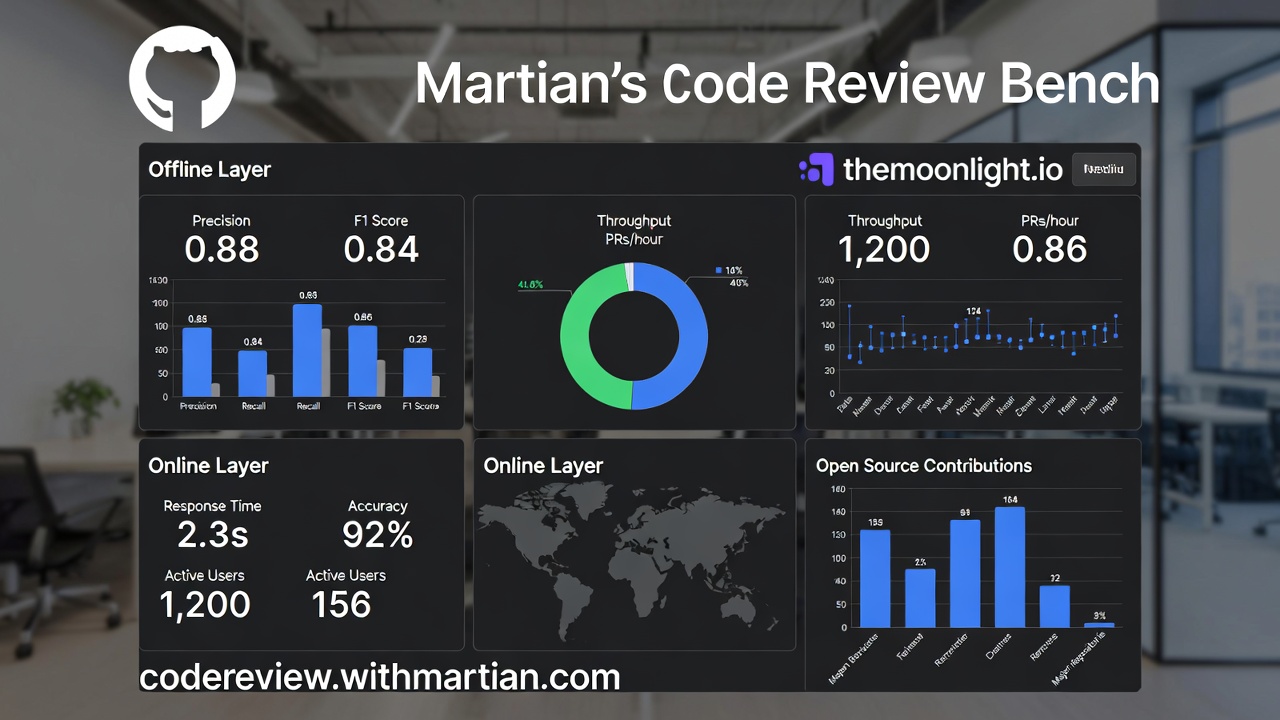

- Offline Layer: Provides a fair, static comparison using historical data. It analyzes thousands of real pull requests (PRs) from GitHub where AI bots have participated, scoring models on precision (avoiding noise), recall (thoroughness), and F1 based on whether suggestions result in actual code changes.

- Online Layer: Monitors real-time behavior in developer workflows, capturing how tools perform in live environments. Discrepancies between offline and online results flag overfitting or manipulation.
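The offline metrics can be illustrated with a short Python sketch. The article does not give Martian's exact definitions, so the mapping below is an assumption: a bot suggestion followed by a code change counts as a true positive, a suggestion that led to no change counts as a false positive, and an issue fixed during review that the bot never raised counts as a false negative. The `ReviewOutcome` class and `score` function are hypothetical names, not part of Code Review Bench.

```python
from dataclasses import dataclass


@dataclass
class ReviewOutcome:
    """Hypothetical per-bot tallies; field names are assumptions."""
    acted_on: int  # suggestions followed by a code change (true positives)
    ignored: int   # suggestions with no resulting change (false positives)
    missed: int    # issues fixed in review that the bot never raised (false negatives)


def score(outcome: ReviewOutcome) -> dict[str, float]:
    """Compute precision, recall, and F1 from the tallies above."""
    tp, fp, fn = outcome.acted_on, outcome.ignored, outcome.missed
    # Guard against division by zero when a bot made no suggestions
    # or when there was nothing to find.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    print(score(ReviewOutcome(acted_on=30, ignored=10, missed=20)))
```

Under these assumptions, a bot with 30 suggestions acted on, 10 ignored, and 20 missed issues scores 0.75 on precision and 0.60 on recall. F1 is the harmonic mean of the two, so a bot cannot score well by being only quiet or only exhaustive.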

Because the two layers check each other, the benchmark is designed to resist marketing hype and test-specific tuning, so that it remains a measure of practical utility.
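The cross-check between the offline and online layers can be sketched as a simple comparison. This is a minimal illustration, not Martian's implementation: the function name `flag_discrepancies`, the per-tool score dictionaries, and the 0.10 tolerance are all assumptions chosen for the example.

```python
def flag_discrepancies(
    offline: dict[str, float],
    online: dict[str, float],
    tolerance: float = 0.10,  # assumed threshold, not from the benchmark
) -> dict[str, float]:
    """Return tools whose offline and online scores differ by more than `tolerance`.

    The value for each flagged tool is the signed gap (offline minus online).
    A large positive gap suggests the tool does better on historical data
    than in live use, which is one possible sign of overfitting.
    """
    flagged = {}
    # Only compare tools that appear in both layers.
    for tool in sorted(offline.keys() & online.keys()):
        gap = offline[tool] - online[tool]
        if abs(gap) > tolerance:
            flagged[tool] = round(gap, 3)
    return flagged


if __name__ == "__main__":
    offline_scores = {"bot_a": 0.80, "bot_b": 0.62}  # made-up example values
    online_scores = {"bot_a": 0.55, "bot_b": 0.60}
    print(flag_discrepancies(offline_scores, online_scores))
```

With these made-up scores, only `bot_a` is flagged, because its offline score exceeds its online score by 0.25. A real system would likely also account for sample size and noise before treating a gap as evidence of manipulation.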

What's Inside the Benchmark

- Over 1.2 million real code changes from GitHub PRs involving AI bots.

- Data on actual developer behaviors, including review timelines, responses, and outcomes.

- Evaluation of AI review quality in production settings, focusing on impact rather than lab metrics.

- Full neutrality: Martian does not sell coding assistants, avoiding conflicts of interest.

Because the benchmark is open source, it is open to community contributions, which supports transparency and continuous improvement.

Implications for AI in Development

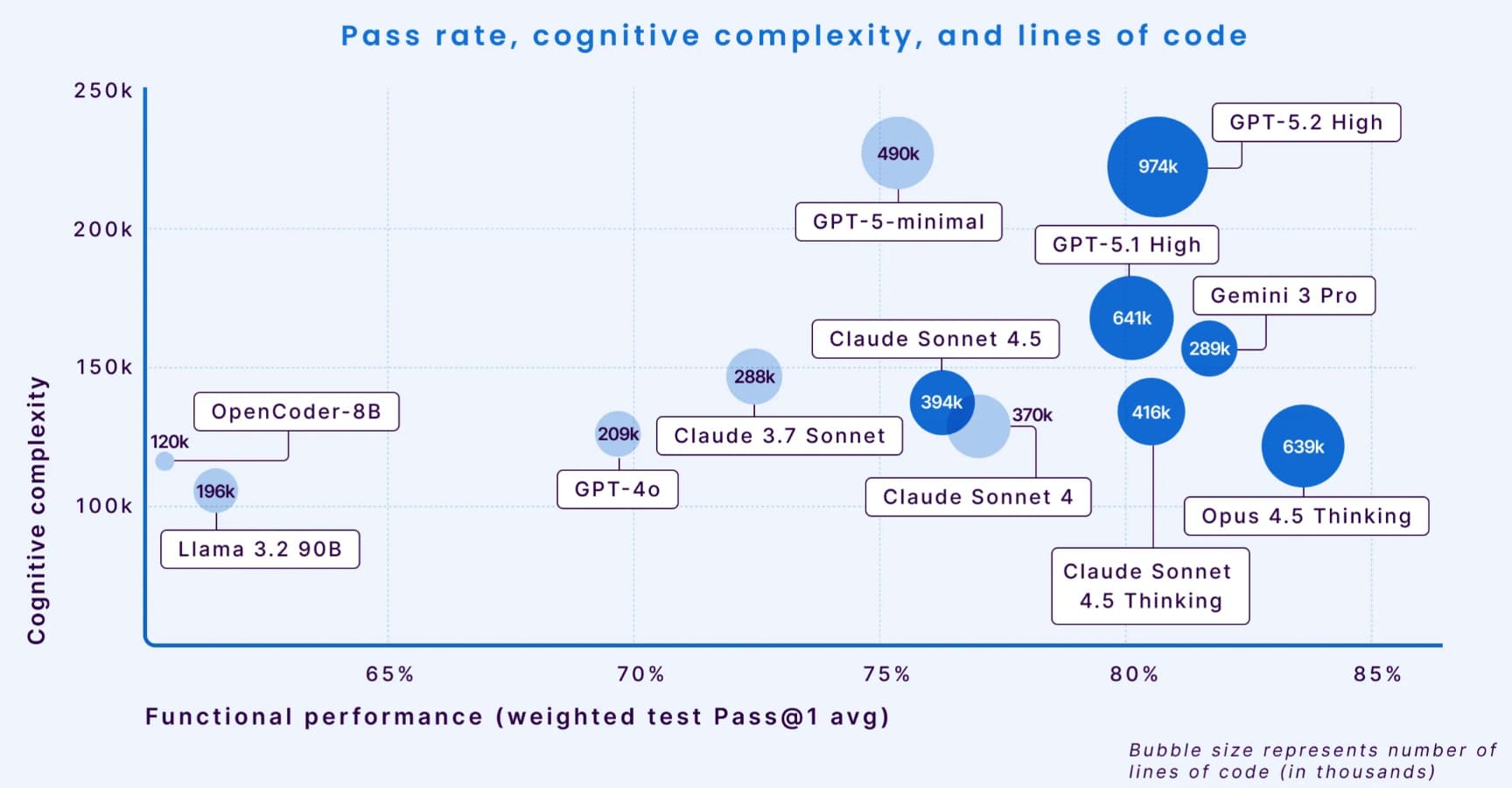

According to Martian, this is the first benchmark of its kind that does not degrade over time, which would make it a reliable gauge of AI tools' real-world value. It shifts the focus from synthetic tests to practical benefit, helping developers and companies choose agents that actually improve their workflows.

As AI agents evolve, tools like Code Review Bench will be crucial in maintaining standards amid rapid innovation.

For more details, visit the official site at https://codereview.withmartian.com/.