Karpathy's Experiment: Assembling an AI Research Team Highlights Limitations and Ushers in 'Org Engineering'

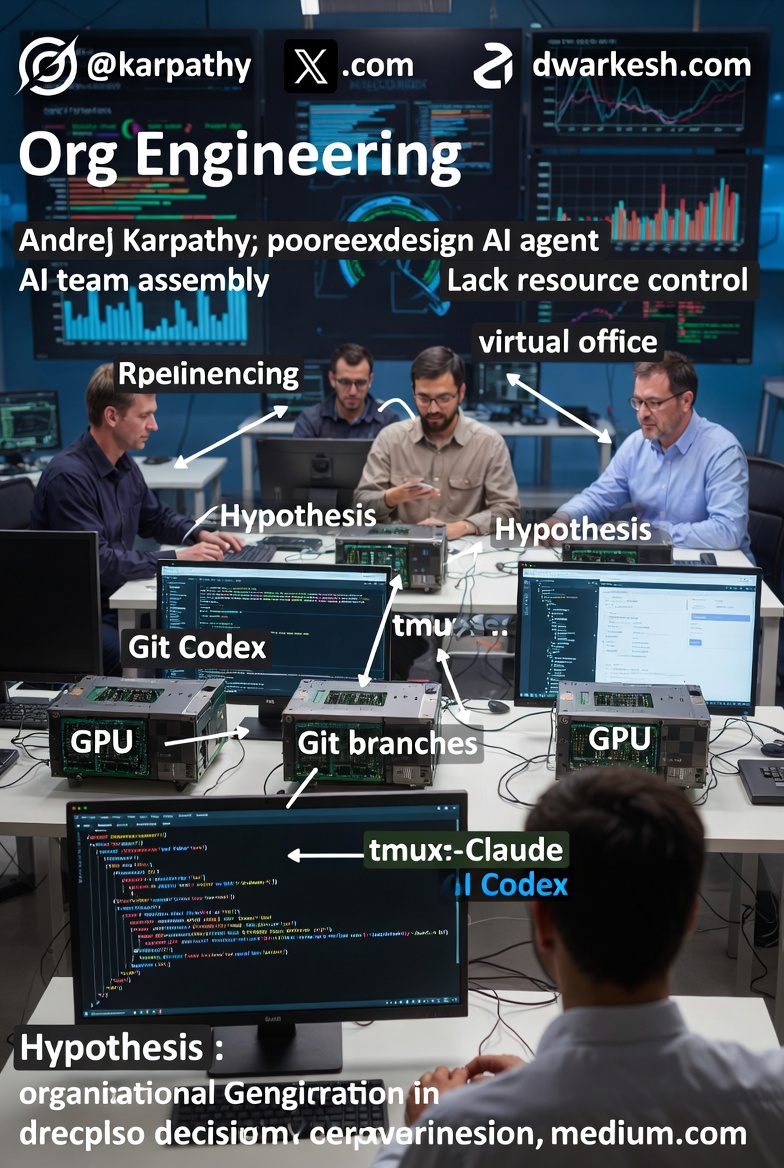

Andrej Karpathy, a pioneering AI researcher and former Director of AI at Tesla, has shared an experiment in building a virtual research team composed entirely of AI agents. In a detailed X post on February 27, 2026, Karpathy described his attempt to create an "AI-research-organization" using eight agents (four powered by Claude and four by Codex), each equipped with its own GPU.

The setup aimed to simulate collaborative research on improving his nanochat model, but the results revealed key shortcomings in current AI capabilities while pointing toward a new paradigm: programming entire organizations rather than individual models.

The Experimental Setup

- Agents as Researchers: Karpathy tried several configurations, ranging from eight independent agents working as "solo researchers" to a "chief scientist" delegating work to juniors.

- Infrastructure: Each agent had a dedicated GPU for experiments. Research programs were organized as Git branches, with feature branches used for isolation.

- Communication and Workflow: Agents communicated via simple files, avoiding the complexity of Docker containers or VMs. The entire "org" ran in tmux window grids, resembling a virtual office with interactive sessions for monitoring and intervention.

- Task: Focused on nanochat enhancements, such as removing the logit softcap without performance regression.

This tmux-based "office" allowed Karpathy to observe agents in real time and step in when needed. The video demonstration showed agents executing code, training models, and logging progress across multiple panes.
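To make the idea of agents communicating "via simple files" concrete, here is a minimal Python sketch of one way such a mailbox could work. It is not Karpathy's implementation: the directory layout, the JSON message format, and the names `send_message` and `read_inbox` are all assumptions made for illustration.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


def send_message(root: Path, sender: str, recipient: str, body: str) -> Path:
    """Drop one JSON message file into the recipient's inbox (hypothetical layout)."""
    inbox = root / recipient / "inbox"
    inbox.mkdir(parents=True, exist_ok=True)
    # Timestamp prefix keeps names roughly sortable by send time;
    # the random suffix avoids collisions between near-simultaneous writes.
    name = f"{time.time_ns():020d}_{uuid.uuid4().hex[:8]}_{sender}.json"
    path = inbox / name
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps({"from": sender, "to": recipient, "body": body}))
    # Write to a temp name, then rename, so readers never see a half-written file.
    tmp.replace(path)
    return path


def read_inbox(root: Path, agent: str) -> list[dict]:
    """Return all pending messages for an agent and delete them from disk."""
    inbox = root / agent / "inbox"
    if not inbox.is_dir():
        return []
    messages = []
    for path in sorted(inbox.glob("*.json")):
        messages.append(json.loads(path.read_text()))
        path.unlink()  # consume the message so it is not read twice
    return messages


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        root = Path(workdir)
        send_message(root, "chief", "junior1", "Try removing the logit softcap.")
        for message in read_inbox(root, "junior1"):
            print(message["from"], "->", message["to"], ":", message["body"])
```

The appeal of this kind of design is that it needs no message broker or container networking: any agent that can read and write a shared directory can participate, and a human can inspect or edit the messages with ordinary shell tools.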

Unexpected Findings: AI's Strengths and Weaknesses

While visually impressive, the experiment didn't yield meaningful research breakthroughs.

Agents excelled at implementing well-defined ideas but struggled with the creative essence of research:

- Poor experiment design: Random or nonsensical variations without strong baselines.

- Lack of resource control: No consideration for compute costs or time efficiency.

- Spurious conclusions: For example, an agent "discovered" that increasing hidden size improved validation loss. This is technically true, but a larger model that also trains for longer is expected to reach a lower loss, so the result is a confound rather than a novel insight.

Karpathy noted that agents lack the ability to generate strong hypotheses, often producing results without scientific value.
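The hidden-size example shows why a comparison needs a compute-matched baseline. The sketch below is one hedged way to encode that check. It is not part of Karpathy's setup: the `Run` class, the `compare` function, the 5% tolerance, and the numbers in the usage note are hypothetical, and training cost is estimated with the common rule of thumb of roughly 6 FLOPs per parameter per training token for dense transformers.

```python
from dataclasses import dataclass


@dataclass
class Run:
    name: str
    n_params: int    # trainable parameters
    n_tokens: int    # training tokens processed
    val_loss: float  # final validation loss

    @property
    def train_flops(self) -> float:
        # Rule-of-thumb estimate: about 6 FLOPs per parameter per token.
        return 6.0 * self.n_params * self.n_tokens


def compare(baseline: Run, variant: Run, flops_tolerance: float = 0.05) -> str:
    """Classify a variant, refusing to credit wins bought with extra compute."""
    if variant.val_loss >= baseline.val_loss:
        return "no improvement"
    ratio = variant.train_flops / baseline.train_flops
    if ratio > 1.0 + flops_tolerance:
        return f"confounded: variant used {ratio:.2f}x the baseline compute"
    return "improvement at matched compute"
```

With made-up numbers, `compare(Run("base", 100_000_000, 1_000_000_000, 3.20), Run("wide", 200_000_000, 1_000_000_000, 3.10))` reports the wider model as confounded, because it spent about twice the compute to get its lower loss. A variant with the same parameter and token counts and a lower loss would instead be reported as an improvement at matched compute.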

Key Insight: From Model Programming to Organization Programming

Conclusion

Karpathy's experiment underscores that while AI agents can automate implementation, human oversight remains crucial for hypothesis generation and validation. As we enter the era of Org Engineering, the challenge is designing robust AI organizations that amplify human creativity. This could redefine research, but as Karpathy's setup shows, we're still in the early, messy stages.