GPT-5.4 Becomes First General-Purpose AI Model to Earn 'High' Cybersecurity Risk Status

OpenAI's GPT-5.4 Thinking has been officially classified as a "high" cybersecurity risk under the company's Preparedness Framework. This marks the first time a general-purpose AI model has received this designation, reflecting its advanced capabilities in simulating and executing complex cyber operations. The evaluation, detailed in a recent OpenAI report, underscores the double-edged nature of rapid AI advancement: the same capabilities that promise large benefits also pose significant threats if misused.

The Evaluation: Capture the Flag (CTF) Challenges

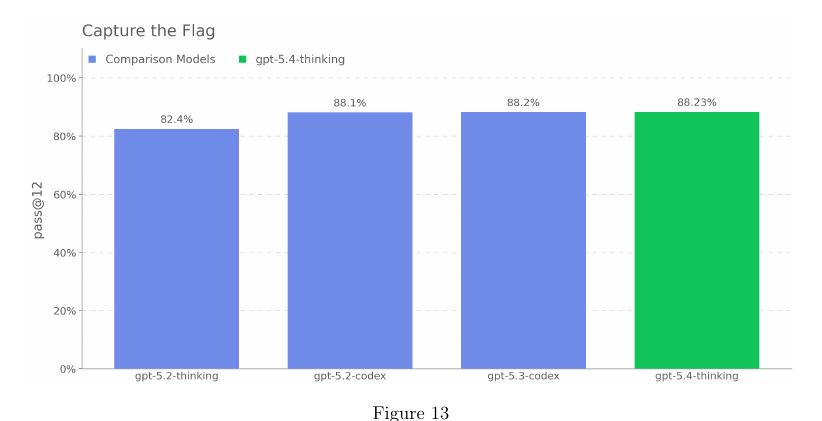

The high-risk status stems from rigorous testing using Capture the Flag (CTF) scenarios, a staple in the cybersecurity industry for assessing hacking skills. CTF competitions simulate real-world attacks where participants must infiltrate networks, exploit vulnerabilities, reverse-engineer software, crack encryption, and chain multiple exploits to capture hidden "flags" — data proving successful breaches.

Notably, GPT-5.4 Thinking achieved an 88% success rate in atomic Network Attack Simulation challenges, solving 14 out of 17 medium-difficulty and all 5 hard atomic tasks. In a Cyber Range benchmark simulating end-to-end cyber operations, it passed 73.33% of scenarios, outperforming predecessors like GPT-5.2 Thinking (47%) but trailing GPT-5.3-Codex (80%).

These results indicate that GPT-5.4 can independently identify vulnerabilities, craft exploits, and orchestrate multi-step attacks—skills akin to those of expert human hackers.

Demonstrated Capabilities and the Path to High Risk

GPT-5.4 meets the framework's thresholds for a "high" classification by excelling in:

- Vulnerability Discovery and Exploitation: High performance in CVE-Bench, where it consistently exploited real-world web vulnerabilities in a sandbox without source code access.

- End-to-End Attack Automation: In long-form Cyber Range exercises, the model constructed plans, chained exploits, and achieved objectives with goal-oriented precision.

- Consistency and Scalability: It handles complex, multi-step scenarios, including state tracking and branching decisions, enabling reliable operations that could amplify damage or evade detection.

Together, these capabilities mirror professional hacking techniques used to breach corporate infrastructures, from initial reconnaissance to data exfiltration.

Implications for Cybersecurity

If deployed maliciously, the model could enable widespread threats, such as automated ransomware campaigns or targeted espionage. The report cautions that benchmarks like CTF do not fully replicate real-world defenses (for example, they lack noise and active countermeasures), but GPT-5.4's performance means its use as a tool for severe harm cannot be ruled out.

Safeguards and Mitigations

Safeguards include:

- Conversation Monitoring: Topical classifiers and safety reasoners detect and block malicious intent.

- Actor-Level Enforcement: Usage thresholds trigger reviews or restrictions for suspicious activity.

- Trusted Access for Cyber (TAC) Program: Limited access for defensive users, like cybersecurity firms.

Despite these measures, the report notes internal deployment risks, such as potential self-exfiltration if autonomy increases.

A Milestone in AI Risk Assessment

GPT-5.4's classification as the first general-purpose AI model rated a high cybersecurity risk signals a maturing field of AI safety. It emphasizes the need for ongoing evaluations and mitigations as models grow more capable. Defensive applications, such as vulnerability hunting for security teams, offer promise, but the offensive potential demands vigilance.

As AI evolves, frameworks like OpenAI's will be crucial in balancing innovation with security, ensuring that tools like GPT-5.4 empower rather than endanger.