Baidu Drops ERNIE-Image: A Compact 8B Open-Source Text-to-Image Model That Tops the Charts

Baidu has just released ERNIE-Image — a new open-weight text-to-image generator that is already turning heads in the AI community. With only 8 billion parameters, the model delivers state-of-the-art performance among all open models, competing head-to-head with significantly larger systems like Qwen-Image while outperforming Z-Image across multiple benchmarks.

The official announcement and weights landed on April 15, 2026, and are now available on Hugging Face under a fully permissive Apache 2.0 license — meaning developers can use, modify, and even commercialize the model with almost no restrictions.

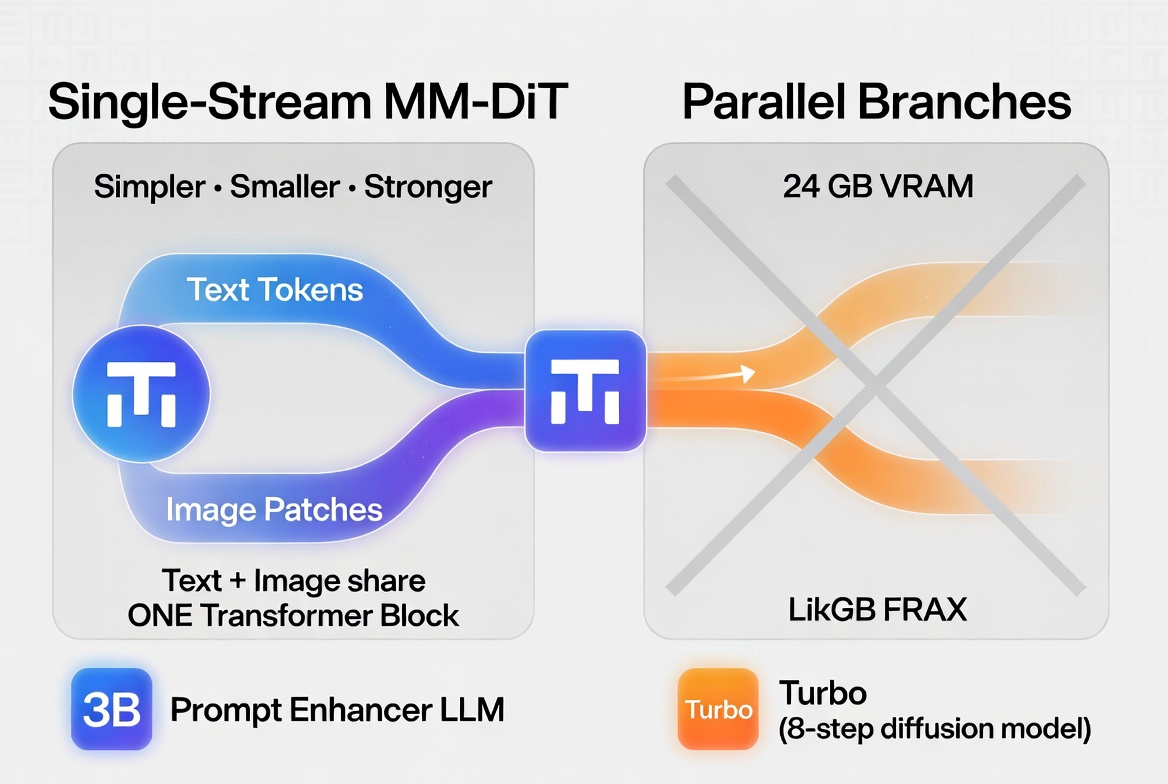

Single-Stream MM-DiT Architecture: Simpler, Smaller, Surprisingly Strong

ERNIE-Image is built on a single-stream MM-DiT architecture. It’s conceptually similar to Z-Image but notably simpler and more compact, yet it still matches or beats the competition in quality.

One of the standout capabilities is text rendering. For an 8B model running at roughly 1-megapixel resolution, ERNIE-Image produces remarkably clean, readable, and layout-aware text in English, Chinese, and other languages. It handles dense paragraphs, posters, manga speech bubbles, and multi-panel compositions with impressive fidelity (ranking #2 on LongTextBench).

Prompt Enhancer + Turbo Variant = Maximum Flexibility

There’s also ERNIE-Image-Turbo, a distilled version optimized with Distribution Matching Distillation (DMD) and reinforcement learning. It needs just 8 inference steps (instead of the usual 50) while delivering stronger aesthetics and faster generation, which makes it a good fit for rapid iteration.
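To put the step counts in perspective, here is a minimal arithmetic sketch. Only the 8 and 50 step figures come from the announcement. The helper `denoiser_calls` and the assumption that each step costs the same number of forward passes are illustrative, not something Baidu has published.

```python
# Rough comparison of denoiser forward passes: base model vs. Turbo.
# Step counts (50 and 8) are from the article; everything else is an assumption.

def denoiser_calls(steps: int, passes_per_step: int = 1) -> int:
    """Total forward passes through the denoiser for one image (hypothetical helper)."""
    return steps * passes_per_step

base_calls = denoiser_calls(50)   # "the usual 50" steps
turbo_calls = denoiser_calls(8)   # ERNIE-Image-Turbo
reduction = base_calls / turbo_calls

print(f"{base_calls} vs {turbo_calls} calls: {reduction:.2f}x fewer")
```

Under these assumptions the Turbo variant needs 6.25x fewer denoiser calls per image. If the base model also runs classifier-free guidance with two passes per step, pass `passes_per_step=2` for it and the gap widens. Actual wall-clock speedup will also depend on text encoding and image decoding, which this sketch ignores.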

Runs on Consumer Hardware

The entire model family fits comfortably in 24 GB VRAM, making it accessible on high-end consumer GPUs. One early tester reported generating images in just 11 seconds using the Turbo version straight out of the box, although that run was on an H200, a datacenter GPU, so consumer cards will likely be slower.
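The 24 GB figure is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below uses only the 8 billion parameter count and the 24 GB budget from the article. The precision options are assumptions for illustration, since the article does not say which dtype the weights ship in.

```python
# Back-of-the-envelope weight memory for an 8B-parameter model.
# PARAMS and BUDGET_GB come from the article; the dtypes are illustrative assumptions.
PARAMS = 8_000_000_000
BUDGET_GB = 24

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1}

def weight_gb(params: int, bytes_per_param: int) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9

for dtype, nbytes in BYTES_PER_PARAM.items():
    gb = weight_gb(PARAMS, nbytes)
    verdict = "fits" if gb < BUDGET_GB else "does not fit"
    print(f"{dtype}: {gb:.0f} GB of weights, {verdict} in {BUDGET_GB} GB")
```

At 16-bit precision the weights alone come to about 16 GB, which leaves headroom inside 24 GB for activations and supporting components such as a text encoder or image decoder. Those extras are not counted here, so treat the result as a lower bound rather than a measured footprint.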

Benchmark Dominance

- GenEval (compositional generation): 0.8856 without the Prompt Enhancer, ahead of Qwen-Image (0.8683) and Z-Image (0.8400).

- OneIG-EN / OneIG-ZH (open-domain English & Chinese): top-3 overall, #1 among open models.

- LongTextBench (text rendering fidelity): 0.9733, second only to closed-source leaders.

It excels at complex multi-object scenes, precise attribute binding, and structured visuals like posters, anime storyboards, and cinematic compositions.
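For a sense of how large the GenEval lead is, the scores quoted above can be compared directly. This is a small sketch using only the three published numbers. The variable names are illustrative.

```python
# GenEval scores as quoted in the article (ERNIE-Image without the Prompt Enhancer).
geneval = {"ERNIE-Image": 0.8856, "Qwen-Image": 0.8683, "Z-Image": 0.8400}

ernie = geneval["ERNIE-Image"]

# Absolute margin of ERNIE-Image over each competitor, rounded to 4 decimals.
margins = {
    name: round(ernie - score, 4)
    for name, score in geneval.items()
    if name != "ERNIE-Image"
}

for name, margin in margins.items():
    relative = 100 * margin / geneval[name]
    print(f"vs {name}: +{margin:.4f} absolute, +{relative:.1f}% relative")
```

The margins work out to 0.0173 over Qwen-Image and 0.0456 over Z-Image, so the lead over the larger Qwen-Image is real but narrow.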

Try It Now

If you’re looking for a lightweight, fully open, and surprisingly capable text-to-image model that doesn’t require a datacenter GPU, ERNIE-Image is worth trying right now. The combination of strong text rendering, efficient architecture, and Apache 2.0 licensing makes it one of the most exciting open releases of 2026 so far.