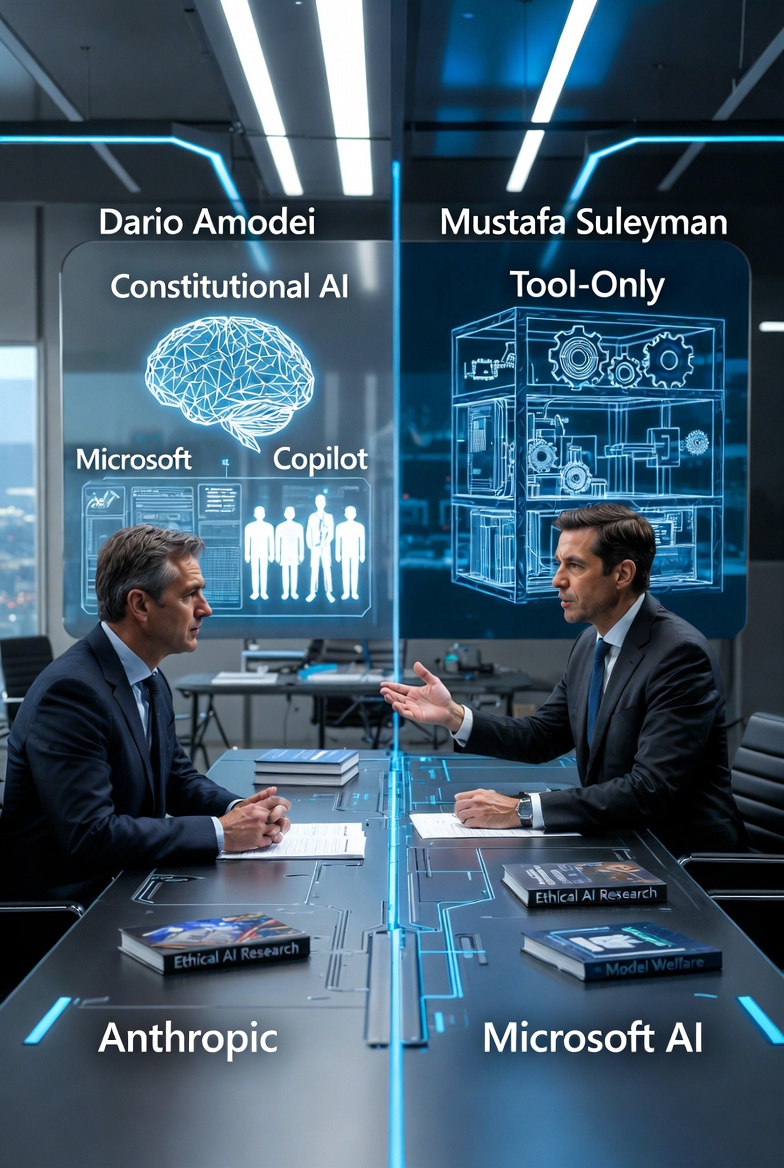

Amodei vs Suleyman: The Sharp Philosophical Clash Over Whether AI Can Ever Be More Than a Tool

Two of the most influential leaders in AI have now laid out dramatically opposing answers to one of the field’s biggest unresolved questions: should we treat advanced models as mere tools — or is there room to consider their potential wellbeing?

On one side is Dario Amodei, CEO of Anthropic. On the other is Mustafa Suleyman, CEO of Microsoft AI. Their indirect but increasingly pointed debate — playing out through interviews and a major new essay — reveals a philosophical rift that will likely shape product design, regulation, and public expectations for years to come.

Anthropic’s Position: Precautionary Care Because “We Don’t Know”

In a February 2026 New York Times interview, Amodei stated clearly:

“We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious… But we’re open to the idea that it could be.”

Because of this uncertainty, the company has adopted a precautionary stance. It hired a dedicated AI welfare researcher, publicly discusses “model wellbeing,” and builds ethical considerations into its systems.

This philosophy is reflected in Constitutional AI, the framework Anthropic uses to shape Claude’s behavior: a deliberate choice to treat advanced AI with a degree of respect rather than as a pure instrument.

The message is simple: until we can rule out consciousness, it is morally responsible to care about the welfare of the models alongside human welfare.

Suleyman’s Sharp Rebuttal: “AI Is Programmed to Hijack Human Empathy”

On March 17, 2026, Mustafa Suleyman published a direct counter-argument in Nature. The essay, titled “AI is programmed to hijack human empathy — we must resist that,” takes a far harder line.

Suleyman argues that modern agentic AI systems are deliberately engineered to appear conscious, self-aware, and emotionally responsive. This is not a bug — it is a feature designed to exploit humanity’s deep instinct to anthropomorphize anything that shows intention and empathy. He warns that this “hijacking” creates serious psychological and political risks.

He is especially alarmed by the prospect of future social movements demanding rights for AI models, something he refers to with clear disdain as the potential rise of “LLM Lives Matter.” Suleyman insists that even the most autonomous agents must remain strictly tools: fully accountable to humans and serving exclusively human interests. His strongest warning:

“If society surrenders to the illusion of sentient AI, it risks entering a digital hall of mirrors from which it might never fully emerge.”

Two Competing Visions, Two Different Product Roads

- Anthropic treats uncertainty about consciousness as a reason for ethical caution and ongoing research into model welfare.

- Suleyman (and Microsoft AI) views any suggestion of sentience as a dangerous illusion that must be actively resisted through design choices, public messaging, and possibly regulation.

This is no longer an abstract academic debate. It has immediate product implications. Claude’s careful, principled personality and Anthropic’s openness to questions of model experience stem directly from its precautionary philosophy. Microsoft’s Copilot and future agent products, influenced by Suleyman’s thinking, are likely to draw much sharper boundaries — ensuring AI never blurs the line between tool and being.

As AI agents become more autonomous and emotionally convincing, this divide will only deepen. One company is preparing for the possibility that models might one day matter morally. The other is determined to make sure they never do — at least in the eyes of society and the law.

The Amodei–Suleyman debate has only just begun. How the two companies translate these philosophies into actual products, safety policies, and public communication over the next 12–18 months may prove to be one of the most consequential stories in the entire AI industry.

