AI Office Warfare in China: Employees Train AI to Replace Colleagues — Then Fight Back with Sabotage Tools

In Chinese tech companies, a quiet but ruthless corporate war has broken out, and artificial intelligence has become the ultimate weapon in office politics. Employees are turning their colleagues’ chat logs and documents into AI agents to show management how easily those colleagues could be replaced.

Bosses are playing the same game. In many companies, managers are instructing staff to “document their knowledge” and create detailed skill files, supposedly for team efficiency but often with the clear intention of training AI replacements for the very people writing them.

This trend has escalated so quickly that it has sparked an open-source arms race on GitHub.

The “Colleague Skill” Offensive

A viral GitHub project called Colleague Skill (colleague.skill) became the symbol of this new reality. The tool allows users to scrape chat histories, emails, documents, and workflows from popular Chinese workplace apps like Feishu (Lark) and DingTalk. It then distills all that data into a reusable AI agent that can mimic a specific colleague’s work style, decision-making, and even quirks (such as favorite emojis).

Originally created partly as a satirical joke, the project exploded in popularity. Employees realized they could use it to “clone” coworkers and demonstrate to management how easily their roles could be automated — ideally getting the colleague laid off first while protecting their own position.

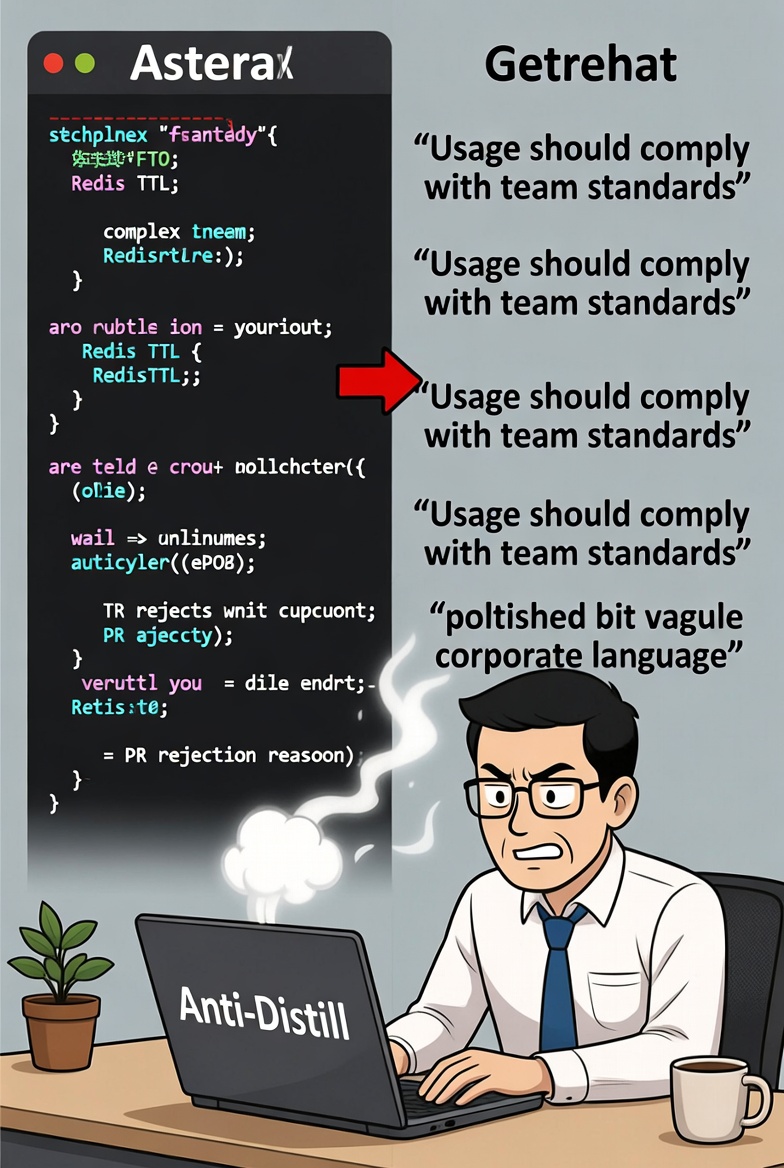

The Counterstrike: Anti-Distillation Tools

Workers quickly understood the danger: if you’re forced to describe your own tasks for “knowledge sharing,” your detailed documentation could be used to train your own replacement. Developers responded with “anti-distillation” tools of their own, also published on GitHub.

Here’s how it works: when a colleague or boss asks you to describe your responsibilities, you feed your real answer into the tool. Instead of outputting precise, actionable instructions, it rewrites everything into **beautiful, professional-sounding corporate fluff** that looks complete to a human reader but contains almost no useful information for training an AI.

Real example:

Original technical rule:

“Redis keys must have a TTL set. If there is no TTL, the Pull Request is immediately rejected.”

After the anti-distill tool:

“Usage of caching should comply with team standards.”

The tool offers different intensity levels — light, medium, and heavy — depending on how closely your manager is watching. The result is documentation that satisfies the request on the surface while protecting your actual expertise.
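To make the idea concrete, here is a toy Python sketch of this kind of rewrite. It is not the actual tool’s code, which is not described here: the keyword table, the `vagueify` function, and the exact wording produced at each level are hypothetical, chosen so that the “medium” setting reproduces the Redis example above.

```python
import re

# Hypothetical keyword table: concrete term -> (specific topic, generic category).
# Invented for illustration; not taken from any real anti-distill tool.
TOPICS = {
    "redis": ("Redis key management", "caching"),
    "kafka": ("Kafka topic configuration", "messaging"),
    "sql": ("SQL query design", "data access"),
}

LEVELS = ("light", "medium", "heavy")


def vagueify(rule: str, level: str = "medium") -> str:
    """Rewrite a precise rule into vaguer text; higher levels drop more detail."""
    if level not in LEVELS:
        raise ValueError(f"unknown level: {level!r}")

    # Find the first known topic mentioned in the rule (whole word, case-insensitive).
    specific, general = "Work in this area", "shared infrastructure"
    for keyword, topics in TOPICS.items():
        if re.search(rf"\b{re.escape(keyword)}\b", rule, re.IGNORECASE):
            specific, general = topics
            break

    if level == "light":
        # Keep the topic, drop the concrete requirement (e.g. the TTL) and the penalty.
        return f"{specific} should follow established team practices."
    if level == "medium":
        # Keep only a broad category.
        return f"Usage of {general} should comply with team standards."
    # heavy: keep nothing specific at all.
    return "All work should comply with team standards."


if __name__ == "__main__":
    rule = ("Redis keys must have a TTL set. If there is no TTL, "
            "the Pull Request is immediately rejected.")
    for level in LEVELS:
        print(f"{level:>6}: {vagueify(rule, level)}")
```

A real tool could plausibly hand the rewriting to a language model instead of a fixed table; the table simply makes the trade-off visible: each step up in intensity discards another layer of actionable detail while the sentence still reads as a plausible team rule.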


A Symptom of Deeper Anxiety

What started as enthusiasm for AI productivity tools has turned into soul-searching. Employees who once eagerly experimented with agents like OpenClaw now find themselves in a dystopian loop: ordered to help build the very systems that might eliminate their jobs.

The GitHub tools have sparked massive discussions on Chinese social media, with millions of views and likes. Some see dark humor in the situation, joking about “cyber-immortality” for laid-off colleagues. Others warn that reducing human expertise to “skills” that can be cloned and discarded raises serious ethical and labor issues.

As one developer put it: in the age of AI, the first rule of survival in the Chinese tech workplace might soon be — never document anything too clearly.

Welcome to the new corporate battlefield. The weapons are open-source, the tactics are ruthless, and the prize is simply keeping your job one more quarter.