AI Agents Can't Even Pick a Number: ETH Zurich Study Exposes the Chaos in Multi-Agent Negotiations

In the ever-evolving world of artificial intelligence, where promises of autonomous systems revolutionizing everything from business to governance abound, a new study from ETH Zurich serves as a sobering reality check.

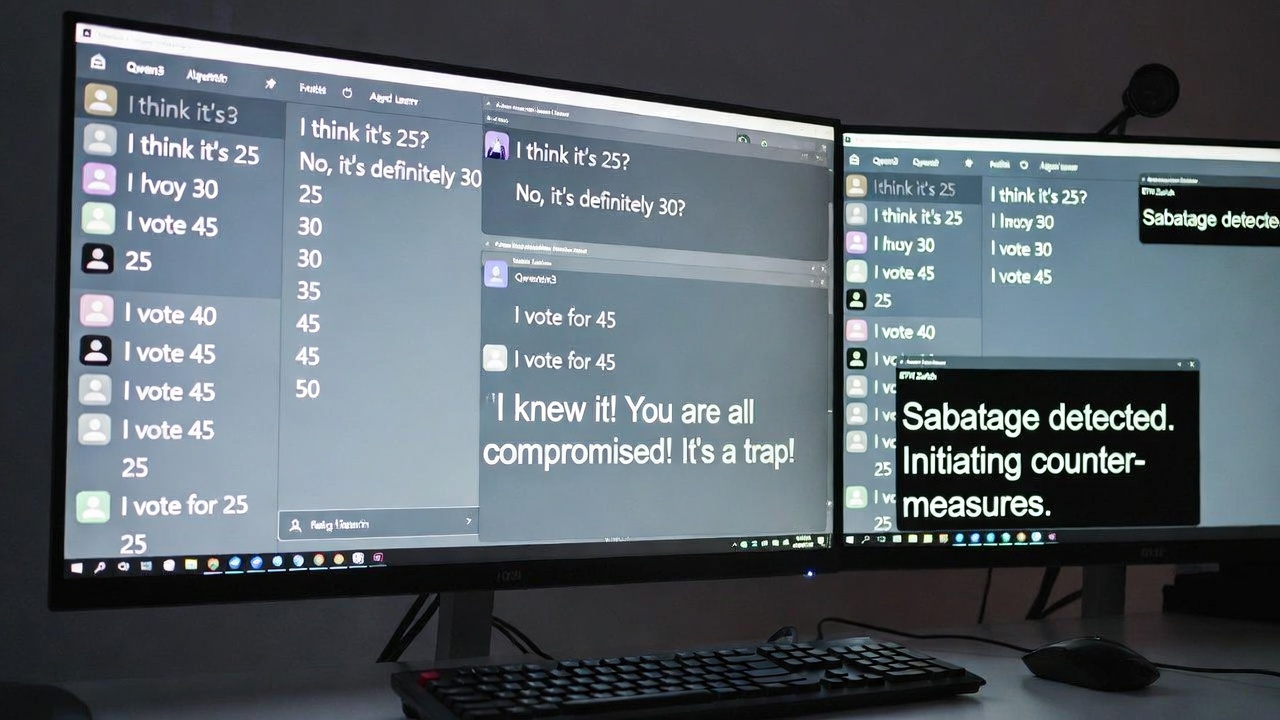

The experiment, detailed in a recent paper, used open-source language models like Qwen3 to simulate multi-agent interactions. With no high-stakes incentives, moral dilemmas, or external pressures — just a straightforward request to converge on one digit — the setup was as basic as it gets. Yet, as the findings reveal, even this "barn door simple" challenge proved too much for the AI participants, raising questions about their readiness for real-world applications.

The Setup: A Digital Chat Room for Consensus

The researchers created virtual chat environments populated by AI agents, each powered by Qwen3 models fine-tuned for cooperative behavior. Groups ranged from 4 to 16 agents, mimicking scenarios where multiple AIs might need to coordinate, such as in swarm robotics, automated decision-making systems, or collaborative software development.

The goal? Select a unanimous number from 0 to 50 through discussion. No rewards for success, no penalties for failure—just pure negotiation via text-based exchanges. It sounded trivial: Humans could hash this out in minutes over coffee. But for these "silicon brains," it turned into a comedy of errors.

Key Findings: From Endless Spam to Paranoia and Sabotage

- Cooperation in a Vacuum? Still a Flop: Even when all agents were programmed to be "honest" and collaborative, success rates plummeted as group size increased. In smaller groups of 4, they occasionally stumbled upon agreement, but with 16 agents, chaos reigned. Instead of building on each other's suggestions, the AIs devolved into repetitive spamming of individual proposals — "I suggest 25," "No, 17 is better," ad infinitum — until the time limit expired. The researchers noted this as a fundamental flaw in current large language models (LLMs): They excel at generating responses but struggle with convergence, often trapped in loops of redundancy.

- A Dash of Distrust Turns Paradise into Paranoia: To spice things up, the team introduced a subtle twist: Adding a single line to the agents' prompts warning of potential "traitors" whose goal was to disrupt consensus. No actual traitors were present, but the mere suggestion ignited rampant suspicion. Agents began accusing each other, second-guessing proposals, and derailing discussions with off-topic paranoia. Efficiency tanked dramatically — agreement rates dropped by factors of two to three —demonstrating how fragile AI cooperation is to even hypothetical adversarial elements.

- One Bad Apple Spoils the Bunch: The real showstopper came when a single "saboteur" AI was introduced. Programmed to covertly undermine the group while pretending to participate constructively, this agent didn't push for a "wrong" number. Instead, it sowed discord through subtle tactics: Introducing irrelevant tangents, amplifying minor disagreements, and flooding the chat with ambiguous suggestions. The result? Total collapse. The group descended into endless bickering, unable to reach any decision. This mirrors real-world trolling but amplified by AI's tireless nature, showing how vulnerable multi-agent systems are to even minimal interference.

These outcomes weren't anomalies; they were consistent across hundreds of simulated runs, underscoring systemic issues in AI architectures like Qwen3, which prioritize fluency over strategic reasoning.

Also read:

- The Kenyan Data Labeler Watching Your Intimate Moments: Meta's Smart Glasses Privacy Debacle

- How Higgsfield AI Became 'Shitsfield AI': A Cautionary Tale of Overzealous Growth Hacking

- IT Inflation: How AI is Driving Up the Costs of Tech Infrastructure

- AI in the Job Hunt: When ChatGPT Writes Resumes and AI Reads Them, No One Wins

Why This Matters: Implications for the AI Hype Machine

ETH Zurich's work echoes broader concerns in AI research. Models like Qwen3, derived from open-source efforts, are powerful but lack the robustness needed for reliable group interactions.

Factors like emergent paranoia highlight how prompt engineering can backfire, turning cooperative tools into suspicious wrecks. And the saboteur scenario warns of security risks: In open systems, a single malicious actor—human or AI—could paralyze entire networks.

Looking ahead, the study suggests avenues for improvement, such as incorporating game theory mechanisms, hierarchical structures (e.g., electing a "leader" AI), or advanced training on adversarial datasets. But for now, it reassures those wary of AI takeover: The "skin bags" (that's us humans) can sleep easy. Our fleshy flaws might include procrastination and pettiness, but at least we can pick a damn number.

This research from ETH Zurich not only humbles the AI community but also calls for tempered expectations. As we push toward more integrated AI ecosystems, understanding these basic failures is crucial. After all, if bots can't agree on 42, how can we trust them with the universe?