Breaking Down the Latest AI Model Updates from Artificial Analysis: A Game-Changer for Model Selection

In the fast-evolving world of artificial intelligence, staying on top of which models excel in specific tasks can feel overwhelming. That's where Artificial Analysis shines as an indispensable resource.

As of December 2025, their latest reports reveal seismic shifts in the AI landscape, highlighting breakthroughs in efficiency, reliability, and multimodal performance. Let's dive into the five biggest updates that are reshaping how we choose and deploy AI.

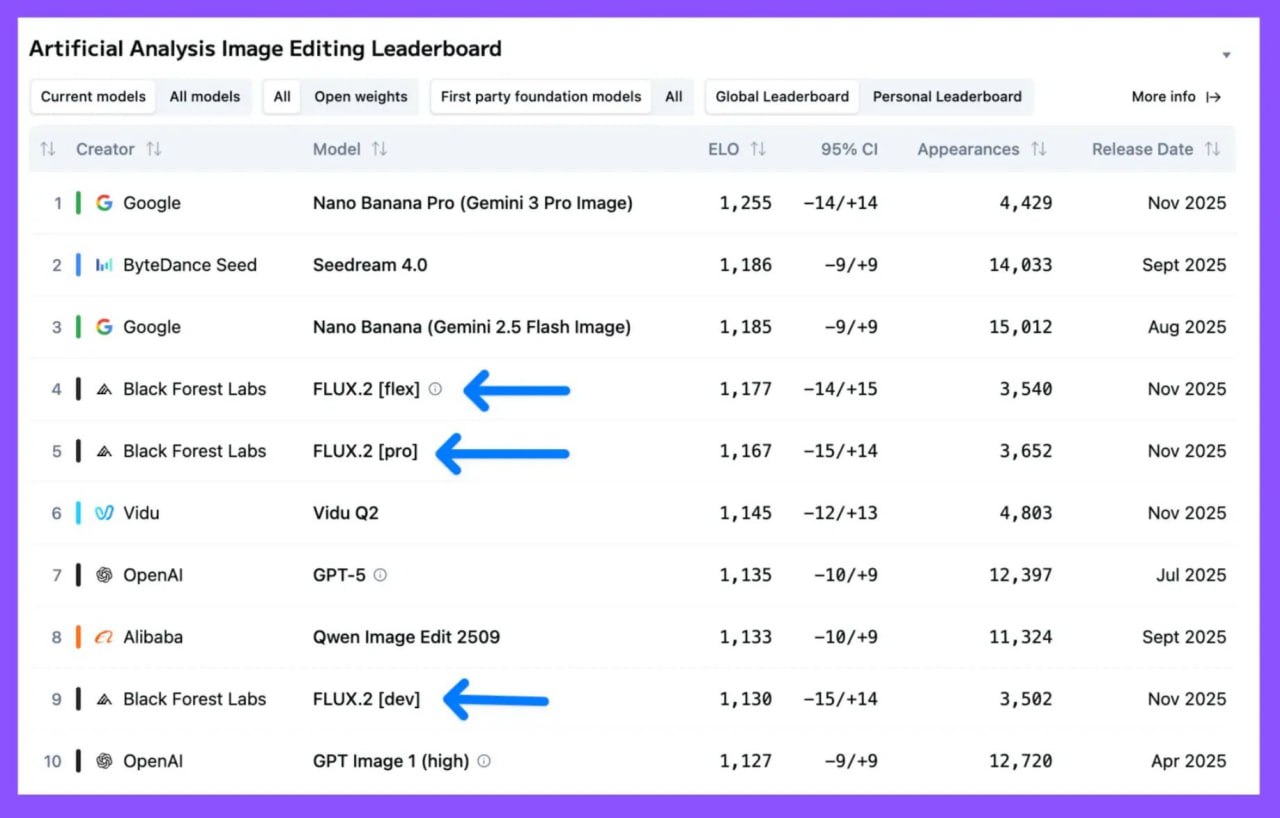

1. Flux.2 Takes the Crown in Image Generation and Editing

What makes Flux.2 stand out? It's not just raw power; it's the balance of fidelity, creativity, and editability. In head-to-head arena comparisons spanning thousands of appearances, Flux.2 handles complex edits, such as inpainting, outpainting, and style transfer, with uncanny precision, often preserving fine details that rival models blur or fabricate.

Released in November 2025, the Flux.2 family comes in both proprietary and open-weight variants, and the open-weight models can run locally without vendor lock-in. The result? A democratized toolkit for designers and creators, where high-quality image manipulation is accessible at a fraction of the compute cost of closed alternatives.
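Arena leaderboards like this one are built from pairwise votes. As a minimal sketch, assuming the classic Elo update (Artificial Analysis may fit its ratings differently, for example in batch rather than vote by vote), here is how a stream of head-to-head wins turns into ratings. The model names, the starting rating of 1000, and the K-factor of 32 are hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    """Return the new (rating_a, rating_b) after one head-to-head vote."""
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Points gained by one side are exactly the points lost by the other.
    return rating_a + delta, rating_b - delta


# Hypothetical vote log of (winner, loser) pairs between two placeholder models.
votes = [("model_x", "model_y")] * 7 + [("model_y", "model_x")] * 3
ratings = {"model_x": 1000.0, "model_y": 1000.0}
for winner, loser in votes:
    ratings[winner], ratings[loser] = update(ratings[winner], ratings[loser], a_won=True)

print(ratings)
```

Because plain Elo updates one vote at a time, the final numbers depend on vote order; batch-fitted ratings avoid that, which is one reason a leaderboard might not use this exact scheme.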

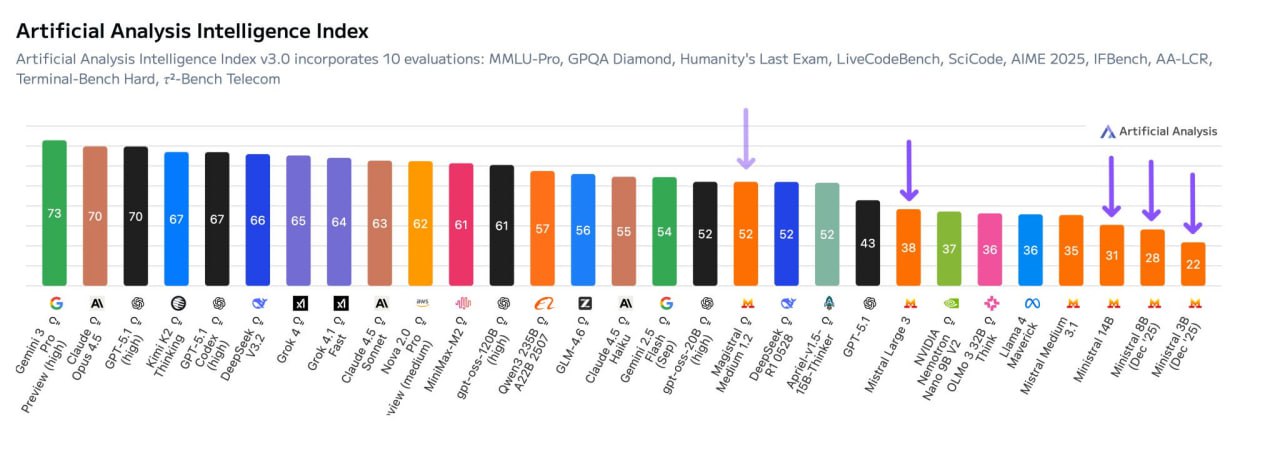

2. DeepSeek V3.2: The Open-Source Powerhouse That's Smarter, Faster, and Dirt Cheap

DeepSeek V3.2's leap is staggering: building on V3's already impressive marks, it now excels in reasoning-heavy evals such as GPQA Diamond (graduate-level science) and Humanity's Last Exam, edging out proprietary rivals by 2-4 points on average.

But the real wow factor? Affordability and speed. DeepSeek V3.2 generates text and code at blistering rates, up to 150 tokens per second on standard hardware, while costing mere pennies per million tokens (around $0.05 blended across input and output). This is a boon for developers building apps on tight budgets: imagine generating production-grade code for a full-stack project at a tenth of the price of GPT-5.1.
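To make the pricing arithmetic concrete, here is a small sketch. It assumes a blended price computed with a 3:1 input-to-output weighting (to our understanding, the convention Artificial Analysis uses for its blended figures) and a hypothetical 20-million-token project; the 10x comparison price simply mirrors the "1/10th" claim rather than any published GPT-5.1 rate.

```python
def blended_price(input_per_m: float, output_per_m: float, input_ratio: float = 3.0) -> float:
    """Blend USD-per-million-token input/output prices using an input:output weighting (assumed 3:1)."""
    return (input_ratio * input_per_m + output_per_m) / (input_ratio + 1.0)


def job_cost(tokens: int, price_per_million: float) -> float:
    """USD cost of processing `tokens` at a blended price per million tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical workload: 20 million tokens for a full-stack project.
tokens = 20_000_000
cheap = job_cost(tokens, 0.05)          # the ~$0.05/M blended figure quoted above
pricier = job_cost(tokens, 0.05 * 10)   # a model priced 10x higher, per the "1/10th" comparison
print(f"${cheap:.2f} vs ${pricier:.2f}")  # $1.00 vs $10.00
```

The `blended_price` helper is only needed when a provider lists separate input and output rates; for example, hypothetical rates of $1 in and $5 out blend to $2 per million tokens under the 3:1 assumption.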

As an openly licensed model from a Chinese lab, it also scores high on Artificial Analysis's Openness Index thanks to its transparent training-data disclosures. In short, if you're tired of bloated API bills, DeepSeek V3.2 turns "open-source dreams" into reality, proving that high-IQ AI doesn't require deep pockets.

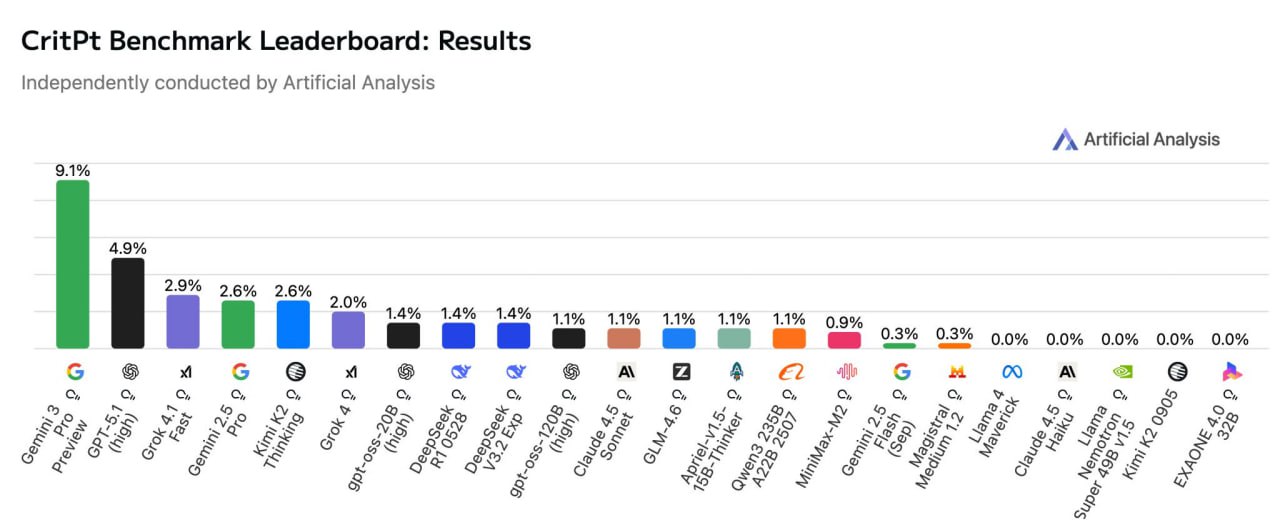

3. CritPt Benchmark: AI's Physics Olympiad Report Card

Early results are humbling: even the leaders struggle. Google's Gemini 3 Pro tops the board with just a 9.1% success rate, OpenAI's GPT-5.1 trails at 4.9%, and xAI's Grok 4 Fast and Anthropic's Claude Haiku 4.5 hover around 2-3%.

These low scores underscore a broader truth: current LLMs are brilliant at pattern-matching but falter at genuine problem-solving in the hard sciences. CritPt awards no partial credit, rewarding only fully correct derivations, which exposes gaps in symbolic reasoning. For context, human Olympiad qualifiers average 20-30% on similar sets, highlighting the chasm AI must bridge.
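The effect of all-or-nothing grading is easy to see with a toy calculation. This sketch does not reproduce CritPt's actual grader; it simply compares a strict "fully correct or zero" success rate with a partial-credit average over hypothetical attempts, where each attempt records how many derivation steps were right.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One model attempt at a multi-step problem (hypothetical structure)."""
    steps_correct: int
    steps_total: int


def all_or_nothing_rate(attempts: list[Attempt]) -> float:
    """Fraction of problems solved completely; partially correct work earns nothing."""
    solved = sum(1 for a in attempts if a.steps_correct == a.steps_total)
    return solved / len(attempts)


def partial_credit_rate(attempts: list[Attempt]) -> float:
    """Average fraction of steps correct, for comparison."""
    return sum(a.steps_correct / a.steps_total for a in attempts) / len(attempts)


# Hypothetical attempts: mostly "almost right" derivations.
attempts = [Attempt(3, 4), Attempt(4, 4), Attempt(2, 4), Attempt(3, 4)]
print(all_or_nothing_rate(attempts))  # 0.25
print(partial_credit_rate(attempts))  # 0.75
```

The same work scores 75% with partial credit but only 25% under strict grading, which is why single-digit success rates can coexist with derivations that look mostly right.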

On the bright side, open models like DeepSeek R1 (3.2%) look promising once cost is factored in, suggesting that with fine-tuning, accessible tools could accelerate research in fields like materials science or climate modeling. Artificial Analysis plans monthly updates, making CritPt a vital pulse-check for AI's scientific maturity.

4. Omniscience Index: Calling Out Hallucinations in the Age of "I Don't Know"

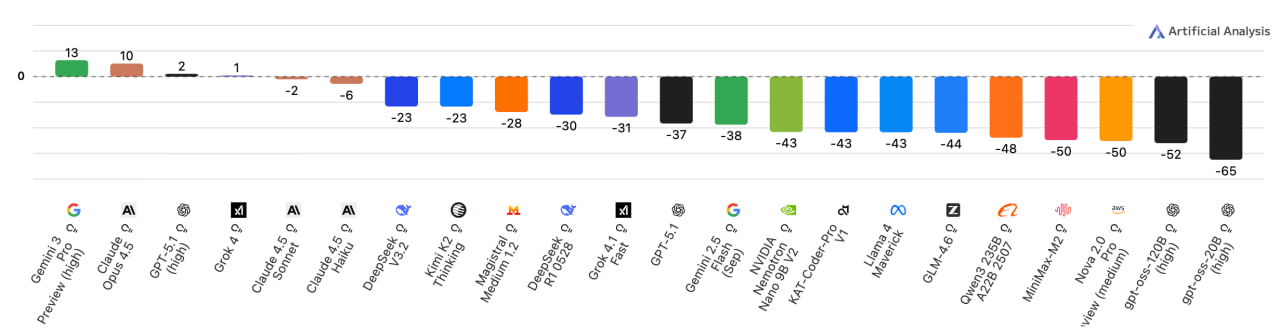

The dark horse? Chinese models like GLM-4.6 and Qwen3 235B, which match or beat Western counterparts on hallucination (rates of 15-25%) while remaining open. The real eye-opener is the flop of gpt-oss-120B, OpenAI's popular open-weight model for local use: it racks up a dismal -65 score, with hallucination rates exceeding 50%.

Many developers run it offline for privacy, but this benchmark urges caution: it is prone to confidently wrong answers on niche queries, such as historical events or technical specs. Instead, consider battle-tested alternatives like DeepSeek or Kimi K2, which combine low costs ($0.02/M tokens) with refusal rates above 40%, making for more trustworthy deployments.
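Negative scores make sense once you see how an abstention-aware index can work. A minimal sketch, assuming +1 per correct answer, -1 per wrong answer, and 0 for "I don't know", scaled to the range -100 to 100, with hallucination rate taken as the share of non-correct responses that were wrong answers rather than abstentions. This reflects our understanding of the Omniscience methodology but is not its official implementation, and the answer counts are hypothetical.

```python
def knowledge_index(correct: int, incorrect: int, abstained: int) -> float:
    """Score on a -100..100 scale: +1 per correct answer, -1 per wrong answer, 0 per abstention."""
    total = correct + incorrect + abstained
    if total == 0:
        raise ValueError("no answers to score")
    return 100.0 * (correct - incorrect) / total


def hallucination_rate(incorrect: int, abstained: int) -> float:
    """Share of non-correct responses that were confident wrong answers instead of abstentions."""
    not_correct = incorrect + abstained
    return incorrect / not_correct if not_correct else 0.0


# Two hypothetical models that get the same 20 questions right out of 100.
guesser = knowledge_index(correct=20, incorrect=70, abstained=10)
cautious = knowledge_index(correct=20, incorrect=20, abstained=60)
print(guesser, cautious)  # -50.0 0.0
print(hallucination_rate(70, 10), hallucination_rate(20, 60))  # 0.875 0.25
```

Under this scheme, a model that guesses instead of abstaining is pushed below zero even when its raw accuracy matches a more cautious rival.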

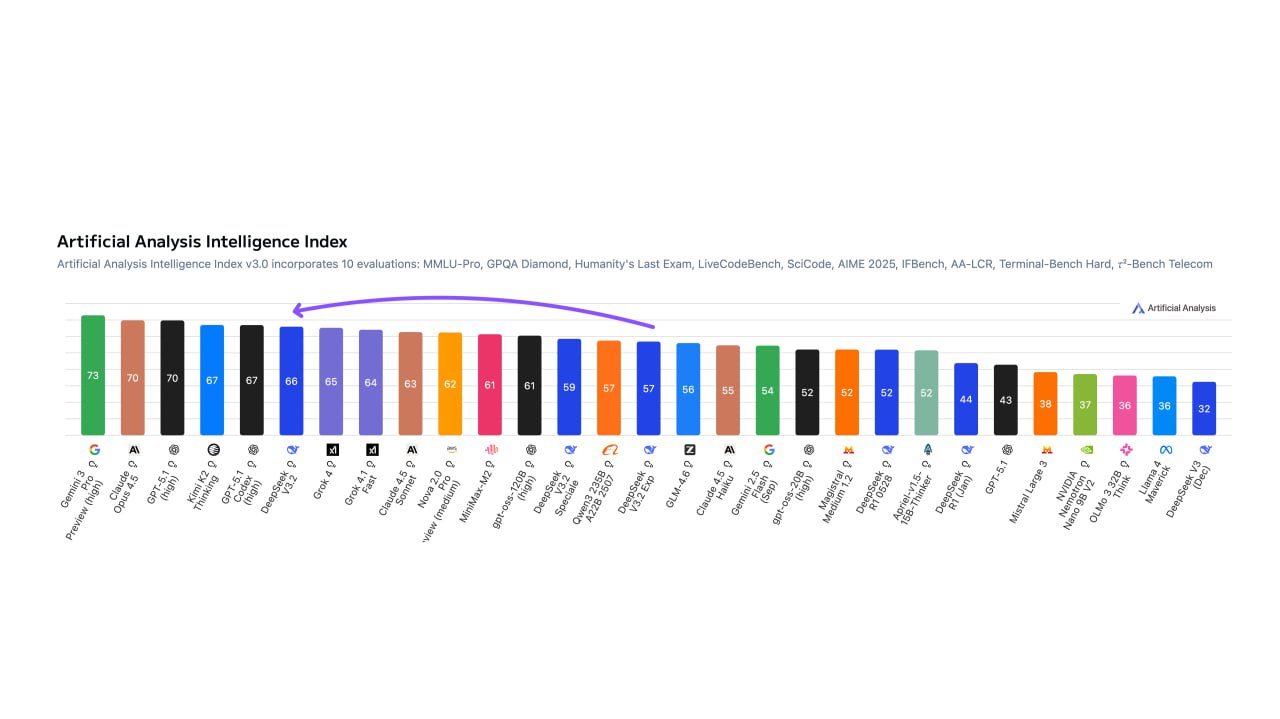

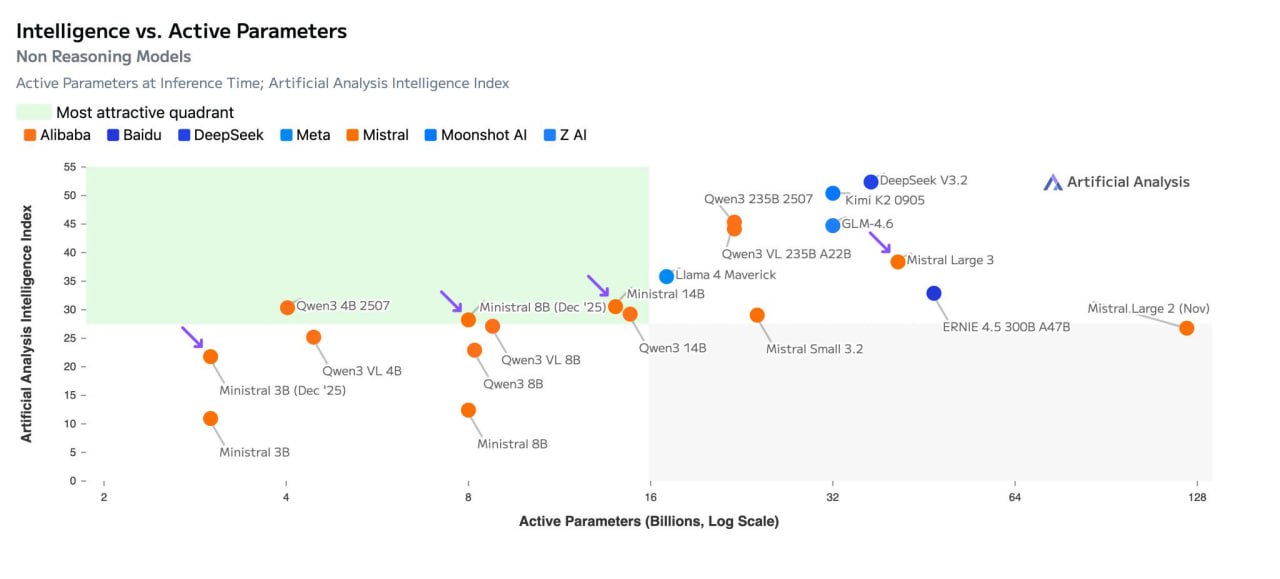

5. Mistral's Latest: Struggling to Keep Pace with China's AI Surge

The trend is clear: Europe's Mistral is efficient (latency under 2 seconds for 500 tokens) and open-weight friendly, but China's ecosystem, fueled by massive data troves and state-backed compute, delivers outsized gains. DeepSeek V3.2, for example, matches Mistral Large 3's reasoning at roughly a tenth of the price.
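A latency target translates directly into a required output speed. As a rough sketch, assuming response time is time-to-first-token plus output tokens divided by tokens per second (ignoring network overhead and queuing), the "under 2 seconds for 500 tokens" figure implies at least 250 tokens per second; the 0.5-second time-to-first-token below is a hypothetical value.

```python
def end_to_end_seconds(output_tokens: int, tokens_per_second: float, ttft_seconds: float = 0.0) -> float:
    """Approximate response time: time to first token plus steady-state generation time."""
    return ttft_seconds + output_tokens / tokens_per_second


def required_speed(output_tokens: int, budget_seconds: float, ttft_seconds: float = 0.0) -> float:
    """Output speed (tokens/s) needed to finish `output_tokens` within `budget_seconds`."""
    gen_time = budget_seconds - ttft_seconds
    if gen_time <= 0:
        raise ValueError("time to first token already exceeds the budget")
    return output_tokens / gen_time


print(required_speed(500, 2.0))                    # 250.0 tokens/s with no startup delay
print(required_speed(500, 2.0, ttft_seconds=0.5))  # about 333 tokens/s with a 0.5 s TTFT
print(end_to_end_seconds(500, 150.0))              # about 3.33 s at the 150 tokens/s quoted for DeepSeek V3.2
```

Time-to-first-token eats directly into the budget, so short latency targets demand disproportionately fast generation.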

With models like MiniMax-M2 pushing the frontier, the gap is widening; Artificial Analysis notes a 15% intelligence delta favoring Eastern models in its Q3 2025 reports. For Mistral fans, the silver lining is customization potential, since their modular architecture suits fine-tuning, but catching the Dragon will demand bolder innovation.

## Why Artificial Analysis Matters Now More Than Ever

These updates from Artificial Analysis aren't just data dumps; they're a roadmap for the AI arms race. In a market flooded with 347+ models, their quadrant charts (intelligence vs. parameters or speed) and specialized indices cut through the hype, revealing trade-offs such as how reasoning models (e.g., o1-style) boost accuracy by 10-15% but roughly triple latency.
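Quadrant charts boil down to splitting models along two axes. This sketch labels each candidate by whether it sits at or above the median on intelligence and on speed; the median cut-off and the placeholder scores are assumptions for illustration, not Artificial Analysis data or its exact quadrant boundaries.

```python
from statistics import median


def quadrant(models: dict[str, tuple[float, float]]) -> dict[str, str]:
    """Label each model by whether it is at/above the median on intelligence and on speed."""
    mid_iq = median(iq for iq, _ in models.values())
    mid_speed = median(speed for _, speed in models.values())
    labels = {}
    for name, (iq, speed) in models.items():
        smart = "smart" if iq >= mid_iq else "less smart"
        fast = "fast" if speed >= mid_speed else "slow"
        labels[name] = f"{smart}, {fast}"
    return labels


# Placeholder scores (intelligence index, output tokens/s), not real benchmark data.
candidates = {
    "model_a": (70.0, 60.0),
    "model_b": (55.0, 200.0),
    "model_c": (68.0, 180.0),
    "model_d": (45.0, 90.0),
}
for name, label in quadrant(candidates).items():
    print(name, "->", label)
```

Here `model_c` lands in the "smart, fast" quadrant, while `model_a` is the smartest but slow, the kind of trade-off the charts are meant to surface.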

Whether you're optimizing for cost (hello, DeepSeek), reliability (Gemini leads), or visuals (Flux reigns), this platform supports informed choices. As AI integrates deeper into workflows, from code autocompletion to scientific discovery, these benchmarks remind us that the smartest model isn't always the biggest, but the one that fits your needs. Keep an eye on their Q4 State of AI report; with physics and hallucination metrics maturing, 2026 could redefine what's possible.

Author: Slava Vasipenok

Founder and CEO of QUASA (quasa.io) - Daily insights on Web3, AI, Crypto, and Freelance. Stay updated on finance, technology trends, and creator tools - with sources and real value.

Innovative entrepreneur with over 20 years of experience in IT, fintech, and blockchain. Specializes in decentralized solutions for freelancing, helping to overcome the barriers of traditional finance, especially in developing regions.